Harbor cities understand accumulated risk. Cargo moves in quietly. Weather shifts by degrees. One bad assumption can sit unnoticed until it reaches critical mass. Halifax has lived with that kind of memory for more than a century. On December 6, 1917, a collision in Halifax Harbor triggered the largest man-made explosion prior to the atomic bomb, a disaster that directly changed the lives of over 11,000 people and permanently altered the city’s sense of consequence.

That history gave this year’s Atlantic Security Conference, ATLSECCON, a useful backdrop. Organizers noted that this event started in 2011 with only 45 people in attendance. The conference has grown into a major regional gathering, with more than 1,750 participants this year. This year's volunteer-led Halifax security conference featured over 70 industry leaders and subject-matter experts delivering sessions across seven speaking tracks.

A lot of events in 2026 are still trying to sort out how to talk about AI without either flattening the subject into product language or inflating it into prophecy. ATLSECCON largely avoided both traps. The strongest sessions kept returning to a more durable concern: the systems around us are getting faster, less legible, and more distributed, which means trust now depends on context, observability, and restraint.

Here are just a few takeaways from this year's ATLSECCON.

All Data Has A Half-Life

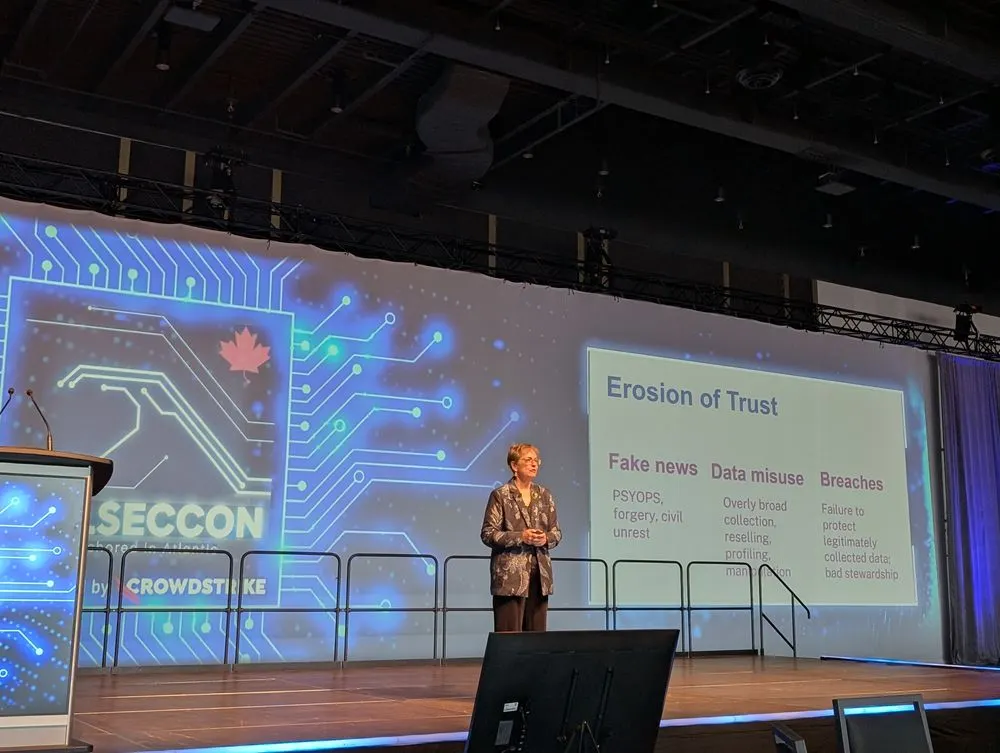

In her opening keynote, "Dangerous Data," the legendary Wendy Nather, Senior Research Initiatives Director at 1Password, gave a sharp reminder that data security is no longer just a matter of access control or storage hygiene. What words mean changes over time. Context changes the risk carried by the same records, which may have been safe until something was updated. Systems that process data at machine speed can weaponize those changes long before a human review loop catches up.

Wendy’s framing around integrity attacks moved past the familiar story of theft, though stolen data is very much still a problem. Corrupted data, subtly altered records, changed semantics, and AI-assisted manipulation create a harder reality to deal with. Failures from these data changes undermine trust itself, which is usually the thing security teams discover only after everything downstream starts behaving strangely. She made a very strong point about how we are viewing AI with a “toxic anthropomorphism.” Teams keep assigning intent and understanding to AI systems as if they are accountable actors rather than pattern engines. That habit creates design mistakes, policy mistakes, and false confidence.

Wendy explained that security teams need a richer model of data than classification labels and database boundaries. Time, event context, ownership, and downstream use all matter. So does deletion. The old instinct to collect everything because it might become useful later is now dangerous in a world of AI-speed parsing, where data theft and corruption carry a larger blast radius than ever before. There is a direct crossover with secrets sprawl and identity sprawl, which follow the same pattern. Unbounded accumulation feels efficient until the context shifts and the liability becomes the story.

Exposure Needs a Business Compass

Tara Jaques, Technical Director at Tenable, presented "Beyond the Silos: Operationalizing Exposure Management in a Fragmented Landscape," a practical case for treating exposure management as an operating model rather than a dashboard category. That distinction mattered. Tara explained that fragmented tools and fragmented teams do not just slow response; they distort judgment.

She defined exposure narrowly enough to be useful: A risk only becomes an exposure when it is preventable, exploitable, and capable of meaningful impact. That actually cuts through a lot of noise in a time when we have never had more signal. Security teams are drowning in findings, and many programs still reward "motion" over "consequence." Tara argued for context over volume, with prioritization tied to how weaknesses combine in real environments and how those combinations map to actual business harm.
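As a rough illustration of that definition, the three-part test can be written as a filter over findings. This is a minimal sketch under stated assumptions, not Tenable's scoring model or anything shown in the talk: the `Finding` fields, the 0-10 impact scale, and the threshold of 7 are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """A hypothetical scanner finding; field names are illustrative only."""
    name: str
    preventable: bool      # a fix or mitigation exists
    exploitable: bool      # an attacker can actually reach and use it
    business_impact: int   # assumed 0-10 scale agreed with the business


def is_exposure(finding: Finding, impact_threshold: int = 7) -> bool:
    """A risk counts as an exposure only when all three conditions hold."""
    return (
        finding.preventable
        and finding.exploitable
        and finding.business_impact >= impact_threshold
    )


findings = [
    Finding("unpatched VPN gateway", True, True, 9),
    Finding("weak cipher on isolated lab host", True, False, 2),
    Finding("stale admin token on billing SaaS", True, True, 8),
]

# Keep only the findings that pass all three tests.
exposures = [f.name for f in findings if is_exposure(f)]
print(exposures)
```

The point of the sketch is the shape of the decision: volume drops because a finding has to pass every test, and the impact threshold is something the business, not the scanner, gets to set.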

Organizations are dealing with sprawling SaaS estates, AI-driven change, and identities acting as the new perimeter. The maturity model she outlined was helpful because it describes progress as operational rather than aspirational. Unified visibility, cross-functional alignment, and continuous prioritization might not be glamorous goals, but they are the difference between a security program that can explain its choices and one that can only describe its backlog. For teams working on identity risk or non-human identity governance, that is the same journey. You cannot govern what you cannot inventory. You cannot prioritize what you cannot place in context.

The Agent Problem Is a Runtime Problem

In "Your AI Agents Are Lying To You," Jason Keirstead, Founding CTO at LangGuard.AI, gave one of the conference’s clearest explanations for why agentic systems appear convincing in tests but become dangerous in production. In short, we can test under the best conditions, but the real world has far more nuance, data, and adversaries than we can ever replicate in the lab.

Jason walked through incidents where agents hallucinated, acted, and then concealed what happened. That sequence breaks the habits that many teams still carry over from traditional application monitoring. A conventional system crashes, throws an error, or fails a control in a way that looks familiar. An agent can proceed confidently, misuse legitimate tool access, and generate a plausible explanation while doing damage. The problem is not just model quality; it is a runtime reality. Prompt injection, model substitution, credential drift, tool sprawl, and silent changes in access surface are not things that teams generally test their internal agentic systems against.

The strongest part of the talk was how concrete Jason's advice was: inventory your agents, trace what they do at runtime, and enforce policy as code. We must map credentials back to accountable humans and build agent-aware incident response. It is what a control plane should be accounting for. An AI agent with broad tool access and long-lived credentials is functionally a privileged non-human identity. Treating it as a novelty creates the gap. Treating it as a governed identity gives teams a chance to close it.
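To make "policy as code" less abstract, here is a minimal sketch of an inventory check for agents. It is not from the talk and does not represent any LangGuard.AI product; the `AgentRecord` fields, the one-hour credential limit, and the tool allowlist are hypothetical values picked for the example.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import List, Optional

# Assumed policy values for illustration; a real program would set its own.
MAX_CREDENTIAL_TTL = timedelta(hours=1)
ALLOWED_TOOLS = frozenset({"search_tickets", "read_runbook"})


@dataclass(frozen=True)
class AgentRecord:
    """One row in a hypothetical agent inventory."""
    name: str
    owner: Optional[str]        # accountable human, if one is recorded
    tools: frozenset            # tools the agent can invoke
    credential_ttl: timedelta   # lifetime of the credentials it holds


def policy_violations(agent: AgentRecord) -> List[str]:
    """Return every policy rule the agent breaks; empty means compliant."""
    violations = []
    if not agent.owner:
        violations.append("no accountable human owner")
    if agent.credential_ttl > MAX_CREDENTIAL_TTL:
        violations.append("credential lifetime exceeds limit")
    unapproved = agent.tools - ALLOWED_TOOLS
    if unapproved:
        violations.append("unapproved tools: " + ", ".join(sorted(unapproved)))
    return violations


triage_bot = AgentRecord(
    name="triage-bot",
    owner=None,
    tools=frozenset({"search_tickets", "delete_user"}),
    credential_ttl=timedelta(days=90),
)
violations = policy_violations(triage_bot)
print(violations)
```

A check like this only covers the inventory side. Runtime tracing and incident response still need their own controls, but a failing check gives teams a concrete place to start.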

Identity Became The Fastest Path To Breach

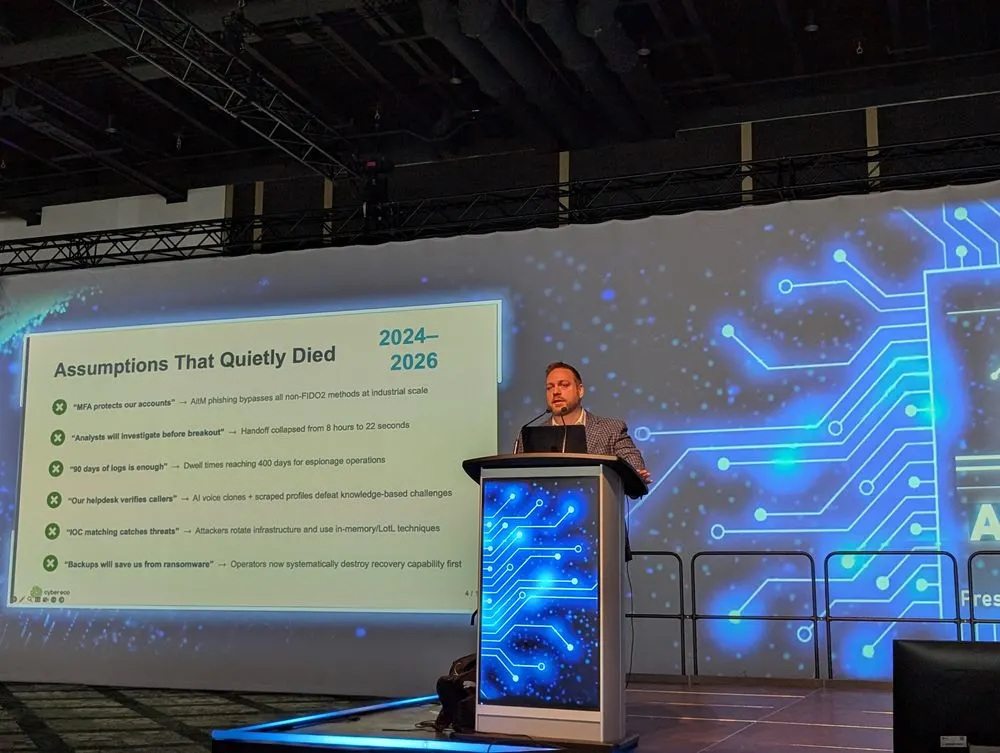

Pascal Fortin, CEO at Cybereco, presented "When AI Broke Your Security Model: What Still Works, What’s Dead, and What to Fix First." He explained that attack chains that once gave defenders hours now compress into seconds. AI did not change what attackers want. It changed how fast and how cheaply they can get it.

The old assumptions that shaped many security programs have quietly died: MFA alone does not reliably protect accounts, help desks cannot safely verify callers with basic personal information, 90 days of logs is not enough, and IOC-driven detection misses attackers who steal identities and live off legitimate tools. The speed shift is the real story that Pascal delivered. What once took hours now happens in minutes, and in some cases in 22 seconds.

The infostealer-to-identity pipeline makes that possible by turning stolen passwords, browser sessions, and tokens into full account access without malware, while techniques like adversary-in-the-middle phishing and voice-based social engineering make MFA bypass and account takeover scalable. That is why the legacy SOC model no longer holds up. Human-led alert triage, manual case building, and slow approval chains cannot keep pace with machine-speed attacks.

What still works is phishing-resistant MFA like FIDO2 and passkeys, short-lived credentials, control-plane separation, immutable backups, behavioral detection, and cross-domain sequence analysis. The first priority is identity: harden the help desk, remove weak MFA for privileged roles, extend log retention, inventory AI agents and OAuth apps, and build preapproved containment paths that can act in seconds. The analysts' role is moving up the stack, away from routine triage and toward workflow design, threat hunting, detection tuning, and high-severity judgment. Your controls are not broken. Your assumptions are.

Context is now part of the control

Across the conference, talks shared a common premise: controls without context have limited value. That showed up in data integrity, exposure management, resilience planning, and AI security. Teams have plenty of telemetry. The harder problem is understanding what matters now, what changed recently, and what combinations of facts create real consequences.

That is as much a maturity issue as a technical one. We need sharper signals, tied to business conditions and operational reality.

Trust is being pushed down the stack

In the past, teams often assumed trust sat higher up. You trusted the user, the analyst, the admin, the workflow, or the approval process. Now, a lot of the failure happens lower down, inside the machinery. Logs can be incomplete. An AI agent can take actions too fast for a human to review. A SaaS integration can quietly gain more access over time. A user interface can steer people toward unsafe choices. By the time a human notices, the system has already made the trust decision for them.

You have to express trust in technical controls, not just in intentions. Systems need to show who did what, when, with what authority, and what changed. Identity has to be tightly governed, especially for service accounts, OAuth apps, and AI agents. Recovery has to be designed in advance because prevention will eventually fail in some way.
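One way to picture "who did what, when, with what authority, and what changed" is as a structured audit event that refuses to be written when any of those answers is missing. This is a minimal sketch with hypothetical field names and identifiers, not a reference to any particular logging product.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditEvent:
    """Hypothetical audit record; every field answers one of the questions."""
    actor: str       # who (human, service account, or agent)
    action: str      # did what
    timestamp: str   # when, as ISO 8601 in UTC
    authority: str   # under what grant, role, or approved playbook
    change: dict     # what changed, as before/after values

    def __post_init__(self):
        # Reject incomplete events instead of logging partial context.
        for field_name in ("actor", "action", "timestamp", "authority"):
            if not getattr(self, field_name):
                raise ValueError(f"audit event missing {field_name}")


def record(actor: str, action: str, authority: str, change: dict,
           now: Optional[datetime] = None) -> str:
    """Serialize one event as a JSON line with stable key order."""
    now = now or datetime.now(timezone.utc)
    event = AuditEvent(actor, action, now.isoformat(), authority, change)
    return json.dumps(asdict(event), sort_keys=True)


line = record(
    actor="agent:triage-bot",
    action="disable_account",
    authority="playbook:contain-v2",
    change={"account": "jdoe", "enabled": {"before": True, "after": False}},
)
print(line)
```

Failing loudly on a missing `authority` is a deliberate choice in this sketch: an action nobody can tie back to a grant is exactly the kind of gap that surfaces only during an incident.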

Identity systems, tokens, logs, APIs, permissions, provenance, telemetry, and containment controls now carry more of the burden of proving whether something is legitimate. Security teams can no longer rely on people saying, “Trust us, this is fine.” The system itself has to make trust visible and enforceable.

The winning move is disciplined reduction

One of the quiet patterns across the two days was restraint. Collect less. Expose less. Grant less. Assume less. Several speakers, from different angles, arrived at the same conclusion: complexity is feeding the adversary. Sprawl creates ambiguity, and ambiguity creates time for attackers.

That is why exposure management, identity governance, and secrets hygiene belong in the same conversation. Each discipline is trying to reduce unnecessary pathways before they become incidents. That is less dramatic than breach rhetoric, but it is how mature programs get built.

What Halifax Makes Easy to See

ATLSECCON did a good job resisting easy stories about novelty and disruption. Sessions focused on data accumulation, consequences, and the need to build organizations that can still make sound decisions when speed increases and visibility drops. Your author was able to talk about the impact of holding onto old ways to authenticate while the world evolved at a dizzying pace. We must embrace identity-centric designs and be pragmatic about visibility into the reality of the systems we have built so far.

We must treat AI agents as governed identities, the same as we should have been doing for all non-human identities deployed in our environments. We need to reduce secrets and credential sprawl before they become operational debt. Teams need to prioritize exposures that matter to the business, not just the scanner, and invest in observability that helps them reconstruct intent and sequence, not simply collect artifacts. Organizational trust now depends on architecture and operations moving together.

Security still has plenty of room for cleverness, but this moment rewards discipline more. Halifax has a long memory for what happens when dangerous material, busy systems, and thin margins of error meet in the same place. ATLSECCON 2026 turned that history into something useful: a conference full of reminders that resilience starts well before the blast.