San Francisco has always had a talent for turning risk into infrastructure; Charles Fey built his Liberty Bell slot machine there in the 1890s. Today, we have another nondeterministic device for fortune seekers willing to pull a lever and see what comes back. We call it AI.

BSides San Francisco 2026 felt built for a more modern version of that wager. The stakes today are identities, tokens, agents, permissions, and the growing gap between what systems are supposed to do and what they actually do in production.

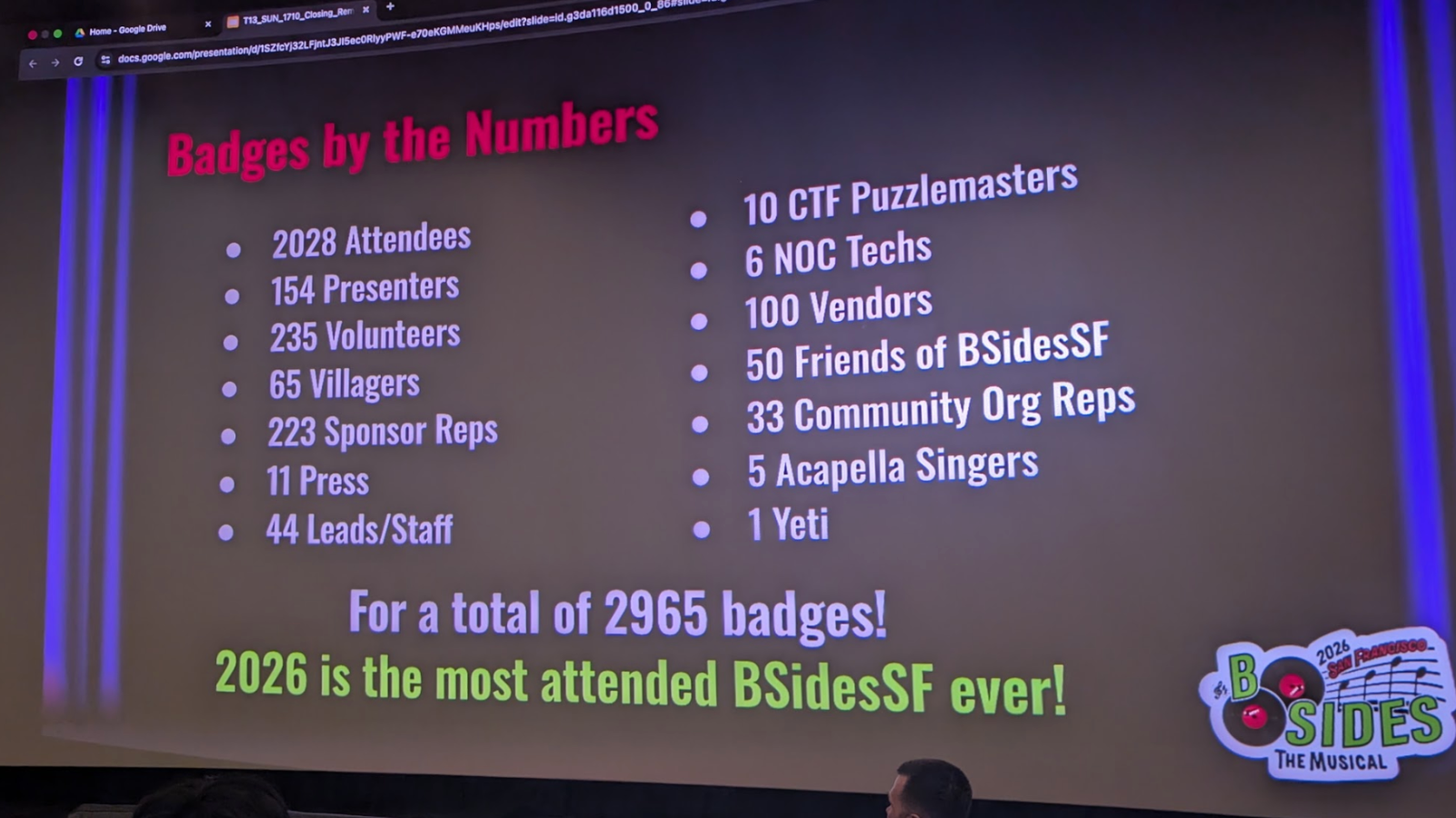

Held the weekend before RSA Conference, BSidesSF is one of the largest BSides events in the world. This year, 2,965 participants attended 92 talks, 8 workshops, 11 interactive sessions, a CTF, and many other activities, all made possible by 235 volunteers and a truly tireless organizing team.

People were there to share notes on how to keep control when software delivery speeds up, AI changes how code and infrastructure are produced, and attackers are increasingly happy to work through identity and trust instead of smashing through a perimeter. Here are just a few highlights from this year's edition of BSidesSF.

Time Travel Without Nostalgia

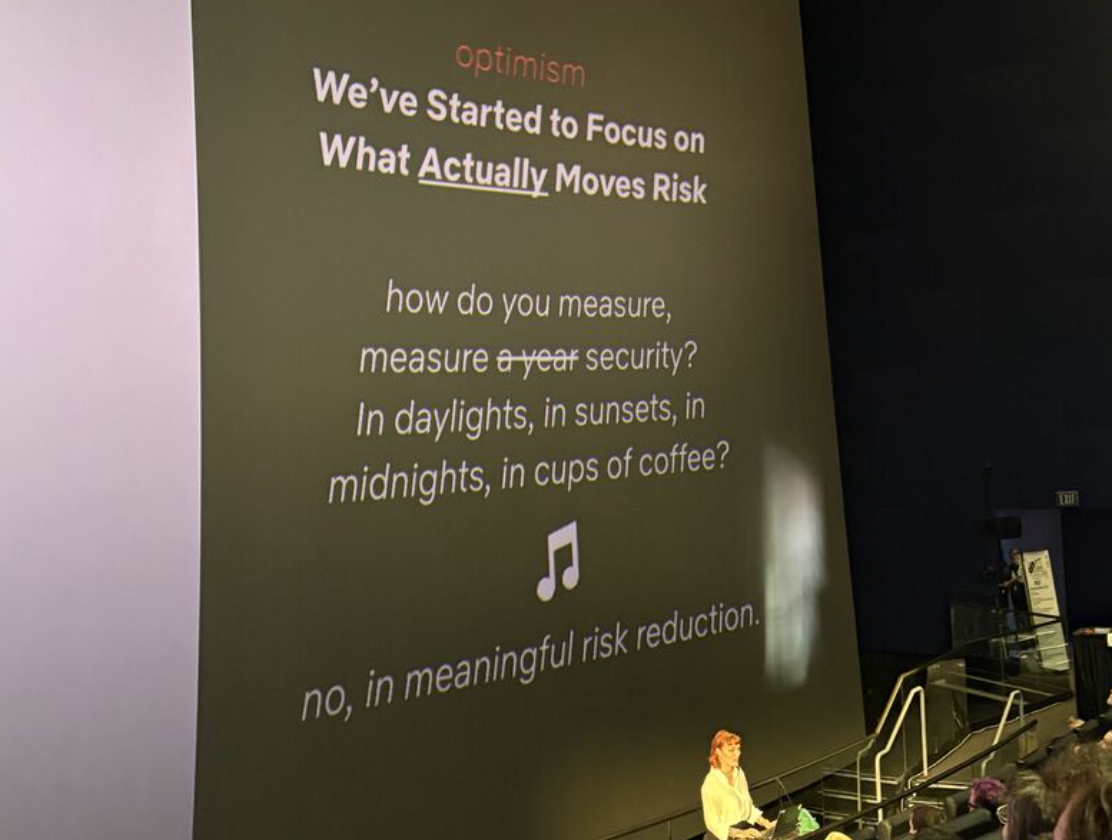

In her session "Let's Do the Timewarp Again! A Look Back to Move Forward," Anna Westelius, Head of Security, Privacy & Assurance at Netflix, presented security history as a series of pivots rather than a straight line. Instead of treating today’s instability as unprecedented, she walked through earlier shifts (the early internet, the worm era, and the move to the cloud), showing how each period first felt chaotic, then gradually produced better defaults, stronger habits, and more durable systems.

Anna made the case that security has repeatedly moved from heroics to engineering. Cloud, once framed as inherently unsafe, matured into a place where private-by-default storage, identity-centric controls, and better primitives could outperform old on-premises assumptions when teams rebuilt for the environment instead of dragging old workflows into it. Today's fire drills are more often about responding to a new CVE than about recovering from a compromise.

She reminded us that progress comes from community and deliberately laying out well-paved roads that are easier to travel. Anna argued that the field is finally in a position to measure meaningful risk reduction, design for humans instead of blaming them, and start tackling legacy risk that used to feel too sprawling to touch. Maturity is not perfection. It is building enough scaffolding that the next crisis does not have to be improvised.

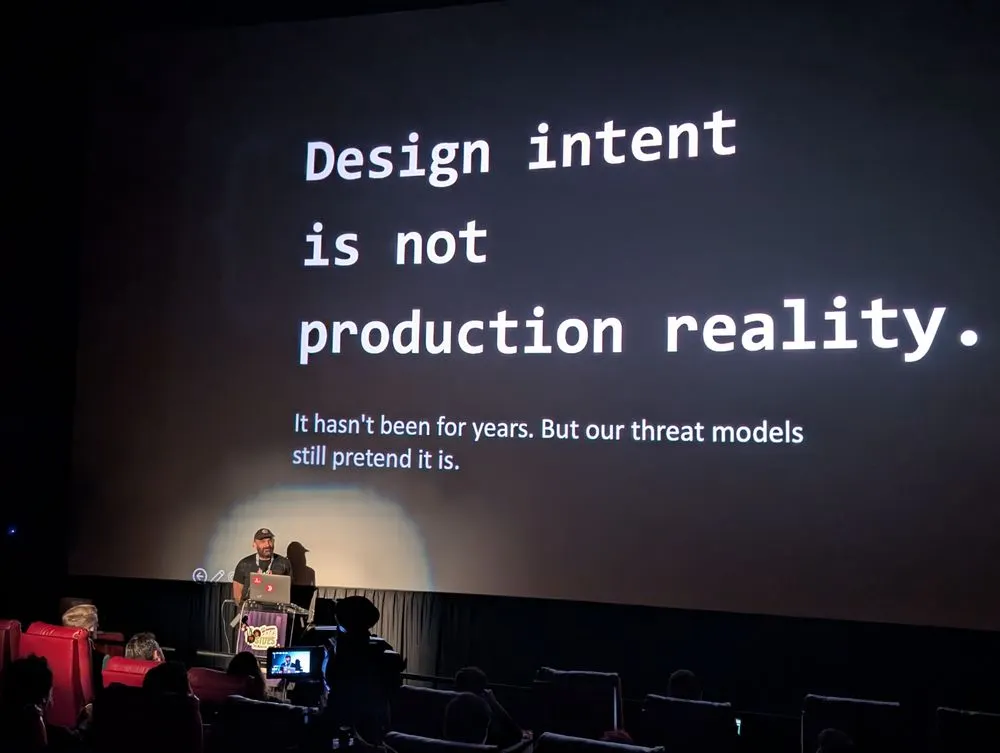

The Threat Model Meets Production

Farshad Abasi, Co-Founder of Eureka DevSecOps and CSO of Forward Security, gave a session called "Your Threat Model Is Lying to You: Why Modeling the Design Isn’t Enough in 2026," in which he laid out a sharp critique of threat modeling, at least the way many teams still practice it. The problem was not the exercise itself, but that design intent keeps getting treated as reality, even when production systems drift, dependencies multiply, and delivery speed outruns any annual review cycle.

Farshad explained that threat models often describe what teams think they built, while the real system includes transitive dependencies, infrastructure changes, deployment quirks, and configuration choices that never made it into the diagram. He pointed to the need for a feedback loop between the model and the evidence teams already collect from SAST, SCA, DAST, cloud findings, and deployment telemetry. A finding should not just confirm a known risk. It should be able to expose a broken assumption and force the model to update.
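To make the feedback loop concrete, here is a minimal sketch, assuming a team records its threat model as a list of explicit assumptions and normalizes scanner output into simple findings. The `Assumption` and `Finding` shapes, the tag names, and the `BREAKS` mapping are all hypothetical; the point is only that a finding on the right component flags the assumption it contradicts instead of disappearing into a ticket queue.

```python
from dataclasses import dataclass, field

@dataclass
class Assumption:
    """A design-time claim recorded in the threat model (hypothetical schema)."""
    id: str
    component: str
    claim: str
    contradicted_by: list = field(default_factory=list)

@dataclass
class Finding:
    """A normalized result from SAST, SCA, DAST, or a cloud posture tool."""
    source: str
    component: str
    tag: str

# Hypothetical mapping: which finding tags can break which assumption ids.
BREAKS = {
    "public-exposure": {"A1"},
    "new-transitive-dependency": {"A2"},
}

def reconcile(assumptions, findings):
    """Link findings to the assumptions they contradict; return assumptions needing review."""
    by_id = {a.id: a for a in assumptions}
    broken = {}
    for f in findings:
        for aid in sorted(BREAKS.get(f.tag, ())):
            a = by_id.get(aid)
            # Only a finding on the same component can invalidate the assumption.
            if a is not None and a.component == f.component:
                a.contradicted_by.append(f)
                broken[aid] = a
    return list(broken.values())

if __name__ == "__main__":
    model = [
        Assumption("A1", "billing-db", "reachable only from the billing service"),
        Assumption("A2", "billing-api", "dependency set matches the design review"),
    ]
    findings = [Finding("cloud", "billing-db", "public-exposure")]
    for a in reconcile(model, findings):
        print(f"{a.id} needs review: {a.claim}")
```

Run against real scanner output on each pull request or deploy, a non-empty result is the signal that the diagram, not just the code, needs an update.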

That shift has real consequences: it moves threat modeling out of a compliance drawer and back into engineering rituals like backlog refinement, pull requests, and post-finding analysis. The issue that hurts is rarely the one already on the list. It is the invisible dependency, the quietly expanded permission, or the workflow that changed faster than the security model.

Tokens Are the New Currency

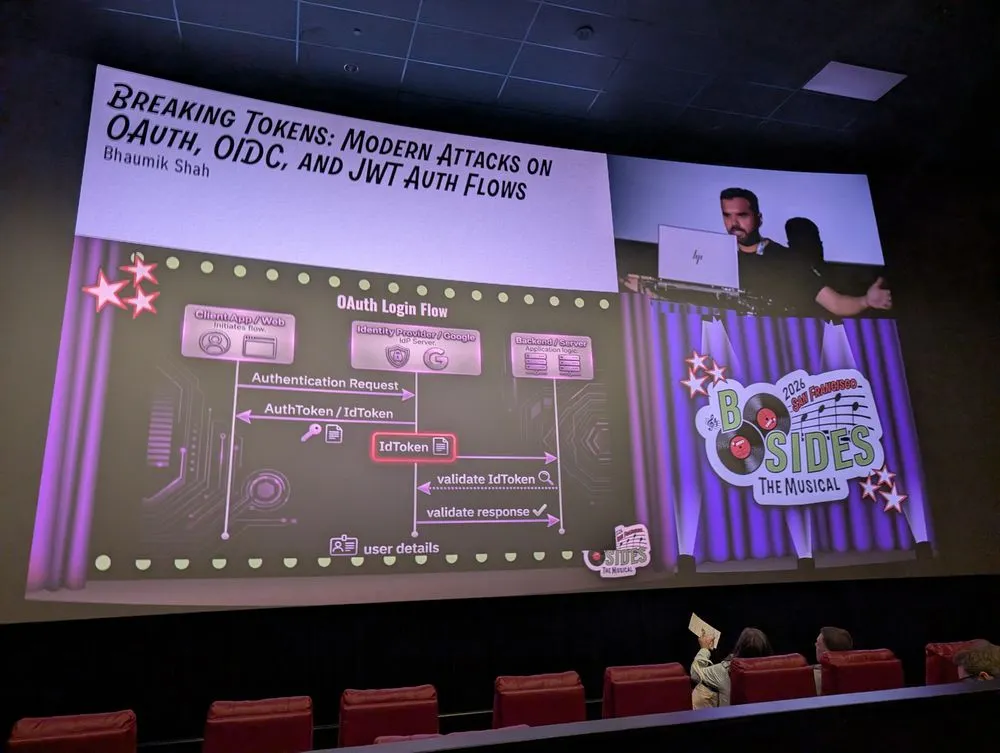

In "Breaking Tokens: Modern Attacks on OAuth, OIDC, and JWT Auth Flows," Bhaumik Shah, CEO at SecurifyAI, presented identity failure as an application architecture problem, not just an authentication problem. He covered examples of token replay, weak audience validation, trust confusion between identity providers, and the dangerous habit of treating a valid token as universally trustworthy.

He quickly moved from protocol language to operational consequences in this session, sharing that a token validated in one place could be replayed somewhere else. An app without proper validation might accept a token from the wrong issuer, or a federated environment could end up granting the same privileges to identities that arrived through very different trust paths. In practice, that means an organization can enforce MFA at login and still leave the actual session material portable enough to be abused elsewhere.
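The replay and wrong-issuer cases come down to checks a resource server can make on every request. The sketch below assumes the token's signature has already been verified by a maintained JWT library and shows only the claim checks defined in RFC 7519 (`iss`, `aud`, `exp`). `EXPECTED_ISSUER`, `EXPECTED_AUDIENCE`, and `TokenRejected` are hypothetical names for illustration.

```python
import time
from typing import Optional

# Hypothetical settings for one service; substitute your own IdP and audience.
EXPECTED_ISSUER = "https://idp.example.com"
EXPECTED_AUDIENCE = "orders-api"

class TokenRejected(Exception):
    """Raised when a signature-valid token is still not acceptable here."""

def check_claims(claims: dict, now: Optional[float] = None) -> dict:
    """Check iss, aud, and exp (RFC 7519) on claims whose signature was already verified."""
    now = time.time() if now is None else now
    if claims.get("iss") != EXPECTED_ISSUER:
        raise TokenRejected("unexpected issuer")
    aud = claims.get("aud")
    # "aud" may be a single string or a list of strings.
    audiences = [aud] if isinstance(aud, str) else list(aud or [])
    if EXPECTED_AUDIENCE not in audiences:
        # A token minted for a different service is refused rather than replayed here.
        raise TokenRejected("token was not issued for this service")
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or now >= exp:
        raise TokenRejected("missing or expired exp")
    return claims

if __name__ == "__main__":
    stolen = {"iss": EXPECTED_ISSUER, "aud": "reports-api", "exp": time.time() + 300}
    try:
        check_claims(stolen)
    except TokenRejected as err:
        print("rejected:", err)
```

The token in the usage example is perfectly valid, just not for this service, which is exactly the case a signature-only check would let through.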

Bhaumik's mitigation advice was crisp and overdue. We should bind privileges to high-trust identity providers and validate the issuer and subject together instead of trusting email alone. We also need to narrow scopes so a stolen token does not become a skeleton key. He stressed that identity is no longer just about proving who logged in. It is about preserving trust boundaries after authentication, when tokens start moving between proxies, services, and automation.
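Those three mitigations can be expressed in a few lines of authorization logic. This is a minimal sketch, assuming claims have already passed issuer, audience, and expiry validation; the issuer URLs, subject ids, and the `GRANTS` table are hypothetical. Grants are looked up by the `(iss, sub)` pair, privileged scopes are stripped unless the issuer is high-trust, and the result is intersected with what the caller actually requested.

```python
# Hypothetical: the only issuer allowed to carry privileged scopes.
HIGH_TRUST_ISSUERS = {"https://corp-idp.example.com"}
PRIVILEGED_SCOPES = {"admin"}

# Grants are keyed on (issuer, subject); the email claim is never used as a key.
GRANTS = {
    ("https://corp-idp.example.com", "00u1a2b3"): {"orders:read", "orders:write", "admin"},
    ("https://partner-idp.example.com", "p-789"): {"orders:read", "admin"},
}

def effective_scopes(claims: dict, requested: set) -> set:
    """Return the scopes this request may use, given already-validated claims."""
    granted = set(GRANTS.get((claims.get("iss"), claims.get("sub")), set()))
    if claims.get("iss") not in HIGH_TRUST_ISSUERS:
        # Privileged scopes only flow from the high-trust IdP, whatever the grant table says.
        granted -= PRIVILEGED_SCOPES
    # Narrow to what this call asked for, so a stolen token is not a skeleton key.
    return granted & set(requested)
```

A token from the partner IdP that happens to carry the same email or even the same `sub` string as a corporate admin gets nothing privileged, because the lookup key includes the issuer.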

Hunting the Blind Spot on Developer Workstations

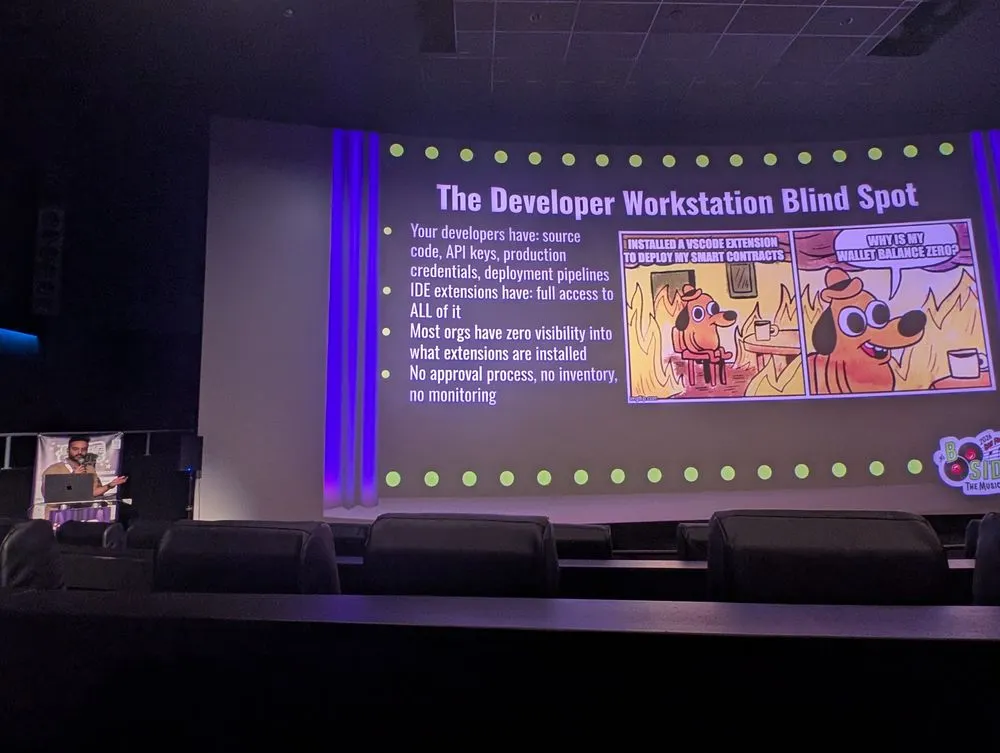

Vinod Tiwari, Engineer at PIP Labs and first-time speaker, presented "Hunting Malicious IDE Extensions: Building Detection at Scale Across Developer Workstations." He walked us through a problem most security teams still barely measure: the IDE extension layer on developer machines has broad access to sensitive assets. Beyond source code, most dev machines hold API keys, cloud credentials, deployment tooling, and local secrets, yet in many organizations nobody has a complete inventory of what is installed. Vinod said that approvals are rare and monitoring is minimal, and only a couple of people in the room raised their hands when asked whether they had MDM visibility into extensions at all. That gap matters because extensions sit inside one of the richest trust zones in the company.
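A first step toward that missing inventory can be small. The sketch below assumes the default VS Code layout, where user-installed extensions live in subfolders of `~/.vscode/extensions`, each with a `package.json` manifest whose `publisher` and `name` fields form the extension id. It covers only that layout (not JetBrains, VS Code Insiders, or remote hosts), and the allowlist is hypothetical; in practice an MDM or endpoint agent would run something like this and ship the results centrally.

```python
import json
from pathlib import Path

def inventory(ext_dir: Path) -> list:
    """List extensions found in a VS Code-style extensions folder (one subfolder per extension)."""
    found = []
    if not ext_dir.is_dir():
        return found
    for manifest in sorted(ext_dir.glob("*/package.json")):
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            continue  # unreadable or malformed manifest; a real tool should report this too
        ext_id = f"{data.get('publisher', 'unknown')}.{data.get('name', 'unknown')}".lower()
        found.append({"id": ext_id, "version": data.get("version", "unknown"), "path": str(manifest.parent)})
    return found

def unapproved(items: list, allowlist: set) -> list:
    """Return inventory entries whose id is not on the approved list."""
    return [item for item in items if item["id"] not in allowlist]

if __name__ == "__main__":
    # Default user extensions folder for VS Code; adjust for other editors or hosts.
    items = inventory(Path.home() / ".vscode" / "extensions")
    allowlist = {"ms-python.python"}  # hypothetical approved list
    for item in unapproved(items, allowlist):
        print(f"unapproved: {item['id']} {item['version']}")
```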

Vinod pointed to multiple cases from 2023 through 2025 in which malicious VS Code extensions were caught stealing SSH keys, typosquatted packages were bundled as IDE extensions to target crypto developers, and even widely installed extensions were found to exhibit data exfiltration behavior. With tens of thousands of extensions in the VS Code marketplace and a similar scale in JetBrains ecosystems, the review model has not kept pace with the level of access these plugins receive. He said there is often no sandbox here, and extensions can read and write local files, spawn processes, access the network, and, in some cases, quietly access clipboard data or other sensitive workflows.

Vinod highlighted how private keys in `.env` files, wallet seed phrases, and deployment credentials can all sit on developer workstations, turning a compromised extension into a direct path to irreversible damage. One malicious plugin is not just a workstation incident. It can become a wallet loss, a production compromise, or a supply chain exposure. Teams need to stop treating IDE extensions as harmless productivity add-ons and start treating them as privileged code execution inside the developer environment.
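Measuring how much an extension could reach is the other half of the picture. As a rough sketch, assuming secrets follow common naming conventions, the snippet below walks a directory tree for `.env`-style files and reports risky variable names, never their values. The name patterns and the `~/src` starting point are hypothetical and will need tuning; large trees such as `node_modules` make a full walk slow.

```python
import re
from pathlib import Path

# Hypothetical list of high-impact variable names; extend for your stack.
RISKY_NAME = re.compile(
    r"PRIVATE_KEY|MNEMONIC|SEED_PHRASE|SECRET_ACCESS_KEY|DEPLOY_TOKEN", re.IGNORECASE
)

def risky_env_entries(root: Path) -> list:
    """Find .env-style files under root and report risky variable NAMES (never values)."""
    hits = []
    for env_file in sorted(root.rglob(".env*")):
        if not env_file.is_file():
            continue
        try:
            text = env_file.read_text(encoding="utf-8", errors="replace")
        except OSError:
            continue
        for lineno, line in enumerate(text.splitlines(), start=1):
            stripped = line.strip()
            # Skip blanks, comments, and lines that are not assignments.
            if not stripped or stripped.startswith("#") or "=" not in stripped:
                continue
            name = stripped.split("=", 1)[0].strip()
            if name.startswith("export "):
                name = name[len("export "):].strip()
            if RISKY_NAME.search(name):
                hits.append((str(env_file), lineno, name))
    return hits

if __name__ == "__main__":
    for path, lineno, name in risky_env_entries(Path.home() / "src"):
        print(f"{path}:{lineno}: {name}")
```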

Static security assumptions are failing faster

Attackers are adapting, and our models of stability are aging out. Threat models go stale. Token assumptions do not survive microservices. Audit habits lag behind AI-assisted development. A control that made sense when releases were slower now becomes a blind spot because the underlying system changes too quickly.

That is a meaningful shift for defensive work. It suggests that many security programs do not need more categories of findings as much as they need faster ways to reconcile expectations with reality. Drift, replay, hidden dependencies, and agent behavior all punish teams that treat security as a periodic review instead of continuous correction.
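"Reconciling expectations with reality" can start as a plain comparison that runs on every deploy or on a schedule. A minimal sketch, assuming the declared state comes from infrastructure-as-code or the threat model and the observed state from cloud APIs or telemetry; the keys and values here are hypothetical, and collecting them is out of scope.

```python
ABSENT = "<absent>"

def drift(declared: dict, observed: dict) -> dict:
    """Return {key: (declared_value, observed_value)} for every key that disagrees."""
    report = {}
    for key in sorted(declared.keys() | observed.keys()):
        want = declared.get(key, ABSENT)
        have = observed.get(key, ABSENT)
        if want != have:
            report[key] = (want, have)
    return report

if __name__ == "__main__":
    declared = {"bucket.public": False, "role.ci.actions": ["deploy"]}
    observed = {"bucket.public": True, "role.ci.actions": ["deploy", "iam:*"], "role.tmp": ["*"]}
    for key, (want, have) in drift(declared, observed).items():
        print(f"{key}: declared={want!r} observed={have!r}")
```

Keys that exist only in production, such as the hypothetical `role.tmp`, show up as drift too, which is often where the surprises live.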

Identity has moved into the center of the map

The strongest sessions throughout the event kept orbiting identity, even when they were not labeled that way. Okta hunting, OAuth token replay, authorization architecture, and AI agents with production access all pointed to the same practical truth: trust now travels through sessions, tokens, permissions, and service relationships more often than through a clean user login moment.

Identity risk is now inseparable from operational design. Secrets sprawl, overbroad scopes, orphaned permissions, and weak service boundaries all create the same outcome. They let valid-looking access travel farther than it should. In that environment, good hygiene is no longer a side practice. It is the structure that keeps the blast radius from becoming a business risk.
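Overbroad scopes and orphaned permissions are easier to cut once you can see which grants are actually exercised. This sketch assumes you can export grants per principal and an audit log of `(principal, permission, timestamp)` events; the 90-day window and the principal names are hypothetical choices, not a standard.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone

def unused_grants(grants: dict, events, window_days: int = 90, now=None) -> dict:
    """grants: {principal: set of permissions}; events: iterable of (principal, permission, UTC datetime).
    Returns, per principal, the permissions not exercised within the window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=window_days)
    used = defaultdict(set)
    for principal, permission, ts in events:
        if ts >= cutoff:
            used[principal].add(permission)
    report = {}
    for principal, perms in grants.items():
        idle = set(perms) - used[principal]
        if idle:
            report[principal] = idle
    return report
```

Anything in the report is a candidate for removal: access that is valid on paper but travels farther than the work requires.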

Security teams are being pushed closer to platform work

Another recurring theme was that the old separation between security, platform, and developer tooling is getting harder to sustain. Talks on authorization, malicious IDE extensions, AppSec, and AI agents all described a world where the useful control point is often the workflow itself. The winning pattern was not “scan more.” It was “build the road correctly.”

That has implications for staffing and program design. Teams need people who can express policy in systems, not only people who can identify issues after the fact. Secure defaults, tool proxies, sidecars, telemetry feedback loops, and opinionated guardrails all came up because they let security become part of how work is done, instead of an extra approval step hovering outside it.
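As one illustration of policy expressed in a system rather than in an approval step, here is a minimal tool-proxy sketch for AI agents. It assumes agents reach their tools only through `proxy`; the agent names, tool names, and prefix-based rules are hypothetical. The design choice worth noting is deny-by-default: an unknown agent or an unlisted target is refused without anyone having to anticipate it.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolCall:
    agent: str
    tool: str
    target: str

# Hypothetical policy: explicit (tool, target prefix) rules per agent. "" matches any target.
POLICY = {
    "release-agent": [("deploy", "staging/"), ("read_logs", "")],
    "triage-agent": [("read_logs", "")],
}

def authorize(call: ToolCall) -> bool:
    """Deny by default; allow only when a rule matches both the tool and the target prefix."""
    return any(
        call.tool == tool and call.target.startswith(prefix)
        for tool, prefix in POLICY.get(call.agent, [])
    )

def proxy(call: ToolCall, execute):
    """Sit between the agent and its tools; the agent never calls execute directly."""
    if not authorize(call):
        raise PermissionError(f"{call.agent} may not {call.tool} on {call.target}")
    return execute(call)
```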

What San Francisco Made Feel Obvious

BSidesSF is a very forward-looking conference, in part because it is made up of the practitioners, maintainers, and professionals who are actively working to keep us all safe. The consensus in the hallways seemed to be that the problems we face can't be solved with more tools alone. They will be solved through organizational change in how we handle trust and access.

If the threat model no longer matches production, or a dependency incident exposes confusion about ownership, it is not just time to patch; it is time to reconsider whether your architecture and governance are aligned with your org's goals. This event left me with hope that we can make security better by focusing on the systems that issue trust, store secrets, define permissions, and drive automation. Reduce what can sprawl as you update what has drifted. We don't need to stretch our old security plans around new technology; we need to adopt better paved paths and guardrails for a safer future, especially one increasingly driven by AI.