One theory about the Gateway Arch is that it is a giant staple connecting the Midwest to the Great Plains. Bridging the Mississippi River, it does really connect East to West in the US. It is also home to a vibrant tech community that is working to connect technology and business goals. This community got together to discuss application development, DevOps best practices, and how to stay safe while delivering awesome features and experiences at the St. Charles Convention Center for dev up 2023.

Over 75 speakers gave talks on a wide range of subjects across more than ten simultaneous tracks. Topics included language-focused talks such as "C# Past, Present, and Beyond" from Jim Wooley, DevOps best practices including "ARM, Bicep, knees and toes! Infrastructure as code for beginners" from Samuel Gomez, and even career advice talks like "From Curiosity to Career: Becoming an Ethical Hacker" from Jason Gillam.

While covering every session or lesson learned at dev up 2023 would be impossible, here are some highlights from the event.

Azure and API Security

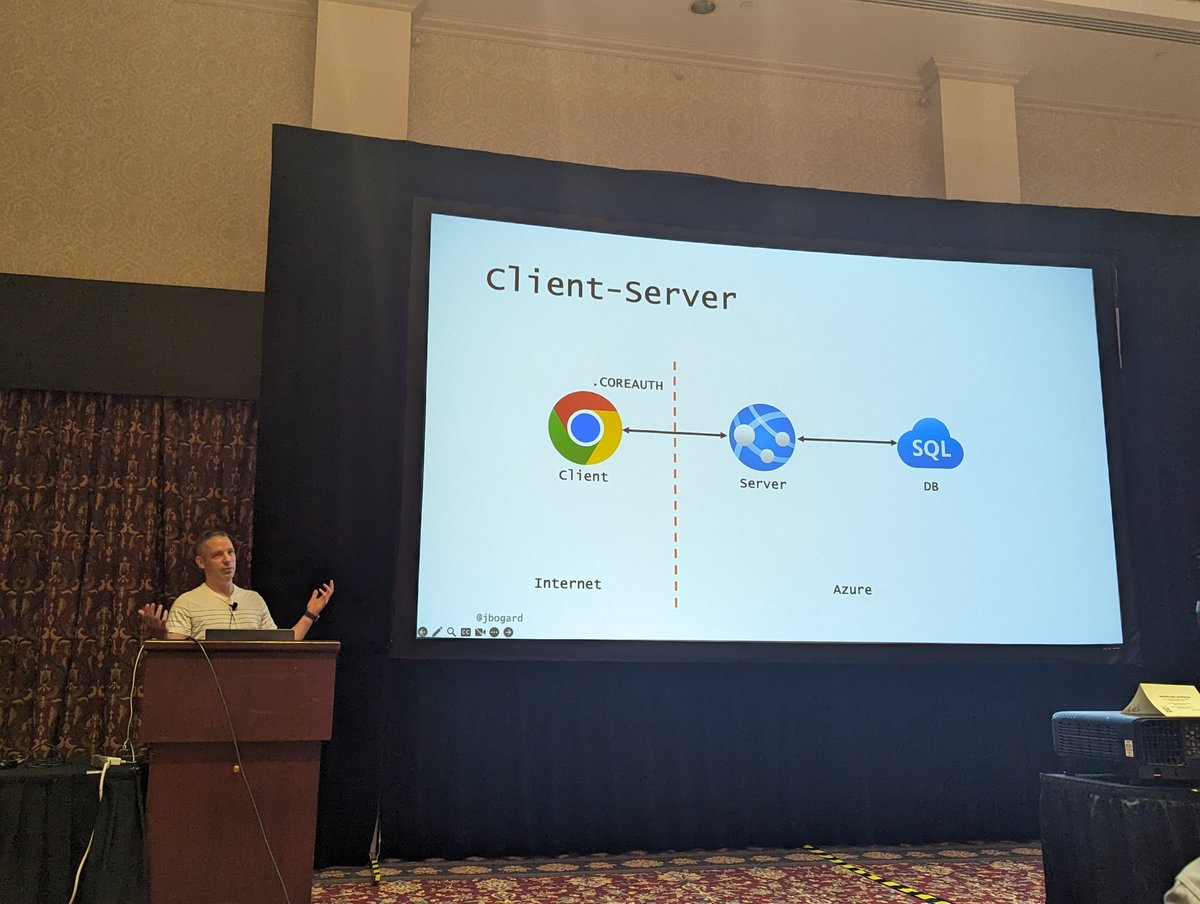

In his session "Demystifying Web API Security in Azure," Jimmy Bogard started by laying out the short, simple history of how we used to handle authentication when all we needed to worry about was a user connecting to a single web app: we used cookies. But as we started adding microservices, we suddenly had to ensure that apps and services had the right permissions, too. We started implementing Backend-for-Frontend patterns and communicating via APIs.

The rise of Zero Trust architecture means we are now in a world of 'verify, then temporarily trust.' While Zero Trust is a great philosophy, it stops short of providing specifications and guidelines the way OAuth does. Fortunately for Azure users, there is a clear path that leverages Azure Active Directory.

He referred us to the documentation for the scenario where a daemon application calls web APIs. In this setup, the application requests an access token from Azure AD using its own application identity. From there, the application can be assigned a role and specific permissions. This lets developers define very tightly scoped custom roles and sets of permissions, all without needing to set a password or hardcode any API keys throughout the application. Jimmy has released an example application that shows off this approach.
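
As a rough illustration of the API side of this flow, here is a minimal sketch, not taken from Jimmy's example application. It assumes the incoming access token has already been validated (signature, issuer, audience, expiry) by a proper library and decoded into a dictionary, and that the application's assigned roles arrive in a `roles` claim. The helper name `has_app_role` and the role names `Orders.Read` and `Orders.Write` are hypothetical.

```python
def has_app_role(claims: dict, required_role: str) -> bool:
    """Return True if the decoded token claims include the required app role.

    Assumes `claims` comes from a token that was already fully validated
    elsewhere; this function only performs the authorization check.
    """
    roles = claims.get("roles", [])
    return required_role in roles


# Hypothetical claims from an already-validated app-only access token.
example_claims = {"aud": "api://orders-api", "roles": ["Orders.Read"]}

print(has_app_role(example_claims, "Orders.Read"))
print(has_app_role(example_claims, "Orders.Write"))
```

Keeping the check tied to a narrowly named role is what allows the tight scoping described above: the daemon can read orders but is refused anything it was not explicitly granted.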

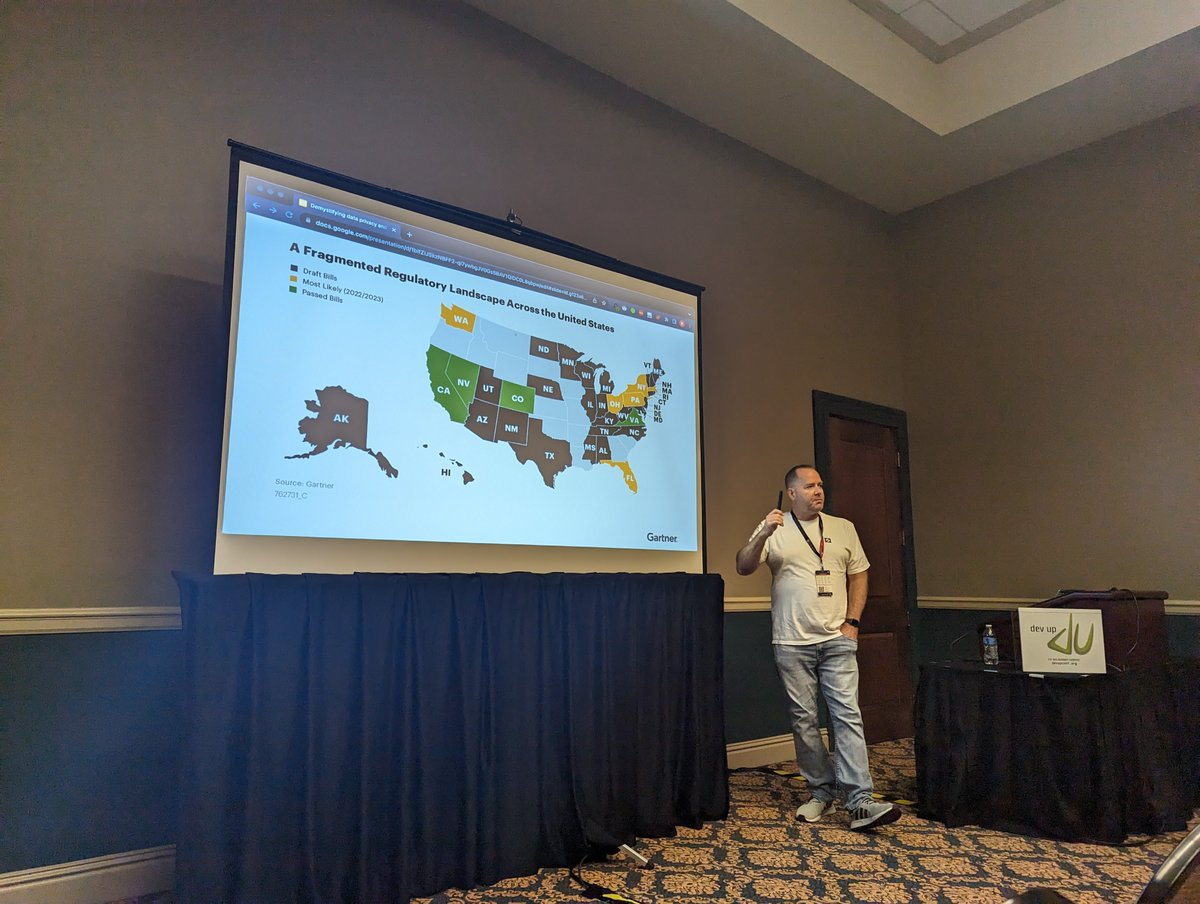

Understanding data privacy is the local law

Ryan Overton, Developer Advocate at Ketch, took the attendees through the responsibilities and liabilities of organizations who collect and use customer data in his talk "Demystifying data privacy and your responsibilities." He began by explaining that data privacy is not the same thing as data security. Data privacy is what laws and regulations say about how we access and use customer data. Data security is how organizations prevent unauthorized access to that data. Both are important, but getting data privacy wrong could bring hefty fines.

He defined specific terms used throughout the legislation and by lawyers when discussing privacy:

- Data Purpose - The reason the company is requesting the data and what they plan to do with it.

- Consent - What the user says you can do with their data.

- Regulation - The specific laws applied to customer data in a jurisdiction.

- Jurisdiction - The geographic region where a data privacy regulation applies.

- Legal basis - The regulatory justification for using data. Basically, how lawyers say, "We are allowed to use X data for Y purpose, according to law Z."

- Data subject rights, DSR - Rights afforded to individuals under the laws.

He said the best thing to do when in doubt is to bring in legal counsel early in the process and have them oversee and explain any potential privacy concerns. This is the easiest and cheapest option when a potential issue is on the table.

He said in general, you can almost always assume that the user's current location will inform you of which regulations will apply. For example, someone from France or Germany visiting your site will be covered by GDPR, and you should afford them all those rights.
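
To make the terms above concrete, here is a small sketch, offered as an illustration rather than legal guidance. It assumes a visitor's location is available as a country code, and it only maps the two countries named in the example above; the `Visitor` class, the lookup table, and the purpose name `analytics` are all hypothetical. Real systems also need to account for legal bases other than consent.

```python
from dataclasses import dataclass, field
from typing import Optional

# Illustrative only: the two countries from the example above.
REGULATION_BY_COUNTRY = {"FR": "GDPR", "DE": "GDPR"}


@dataclass
class Visitor:
    country_code: str
    # Data purposes this visitor has explicitly consented to.
    consented_purposes: set = field(default_factory=set)


def applicable_regulation(visitor: Visitor) -> Optional[str]:
    """Look up the regulation for the visitor's current location, if known."""
    return REGULATION_BY_COUNTRY.get(visitor.country_code.upper())


def has_consent(visitor: Visitor, purpose: str) -> bool:
    """Return True only if the visitor opted in to this specific purpose."""
    return purpose in visitor.consented_purposes


visitor = Visitor(country_code="fr", consented_purposes={"analytics"})
print(applicable_regulation(visitor))
print(has_consent(visitor, "analytics"))
print(has_consent(visitor, "marketing"))
```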

One of the largest issues we face as an industry right now is tracking visitors without relying on cookies. Cookies have been our standardized tool for years. However, in browsers growing in popularity, like Brave, and with recent updates to Safari, third-party cookies are blocked by default. Ultimately, we need to find better ways to honor a user's choices and let users easily opt in or opt out of sharing their data while still giving them world-class online experiences.

Thinking in the number of nines

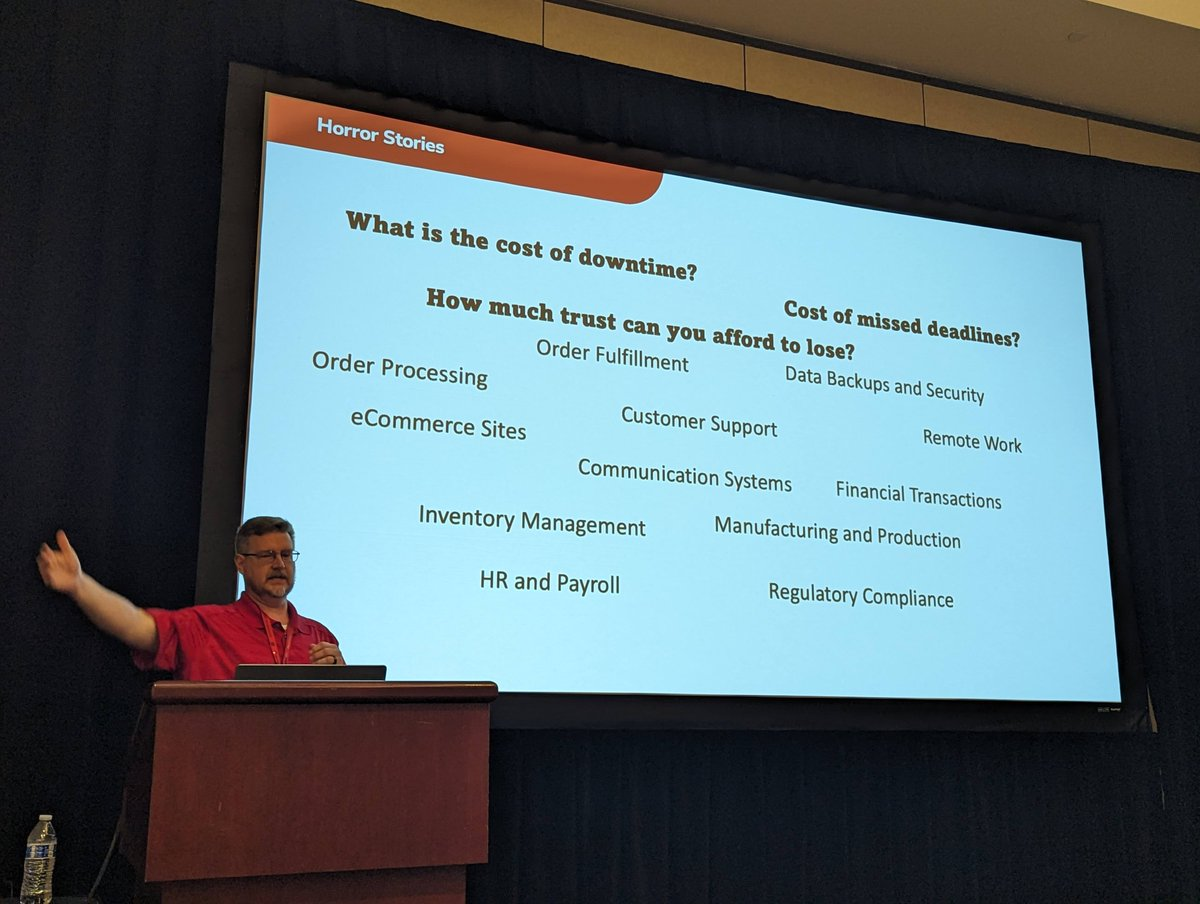

When you think about downtime, how much is acceptable? This is the core question that Sean Whitesell, Microsoft MVP and Senior Cloud Architect at ArchitectNow, asked in his talk "Saving Your Ass(ets) - Azure Resiliency Planning." Beyond just the cost of the service that is down, we need to ask ourselves about the cost of missed deadlines, the loss of focus as the team scrambles to deal with the situation, and how much customer satisfaction and trust we can afford to lose.

He defined a disaster as "a condition in which a system or business processes is either performing poorly or not at all available due to an event." This could mean a total outage across the system or a single microservice taking exponentially longer to run than acceptable. We need to build resiliency into our systems, which he and Azure define as "the ability of a system to gracefully handle and recover from failures, both inadvertent and malicious."

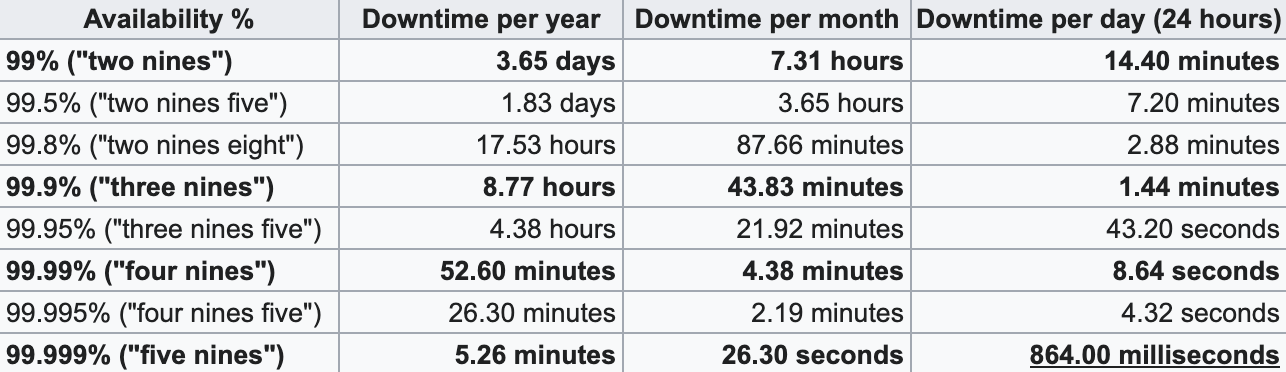

Sean said that no system can ever guarantee 100% availability. Instead, services list availability in terms of "nines." A service at 99% uptime, referred to as "two nines," could be unavailable for up to 14.4 minutes per day. "Four nines," or 99.99% uptime, means the system might be unavailable for up to 8.64 seconds a day.
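
The arithmetic behind those figures is simple enough to sketch. The function name below is just an illustration; it multiplies the number of seconds in a day by the fraction of time a service is allowed to be unavailable.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 seconds


def max_downtime_seconds_per_day(availability_percent: float) -> float:
    """Return the maximum daily downtime, in seconds, for a given uptime percentage."""
    return SECONDS_PER_DAY * (1 - availability_percent / 100)


for label, percent in [("two nines", 99.0), ("three nines", 99.9), ("four nines", 99.99)]:
    seconds = max_downtime_seconds_per_day(percent)
    print(f"{label} ({percent}%): {seconds:.2f} s/day, or {seconds / 60:.2f} min/day")
```

For 99% this works out to 864 seconds, which is the 14.4 minutes quoted above, and for 99.99% it gives the 8.64 seconds.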

While 8 seconds a day might feel trivial to us at a human level, to a microservice trying to complete an order, this might mean total disaster. The question then becomes, what happens to the in-flight data when that service goes offline, even for a millisecond? What happens when that data is lost in a longer outage?

Active/Active vs Active/Cold

The choice in architectural solutions to system availability boils down to active/active and active/cold setups.

In an active/active system, you can use a load balancer to distribute requests between two or more live instances of your service spread across multiple availability zones. If one instance or zone goes down, the request is simply rerouted to another live instance, meaning nothing will be lost. This high availability comes at the cost of a much larger hosting bill, since every instance is always running.
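
The rerouting idea can be sketched in a few lines. This is a toy model under simplifying assumptions, not how Azure's load balancers are implemented: the class name, the instance names, and the manual `mark_down` call stand in for real health probes.

```python
from itertools import cycle


class ActiveActiveBalancer:
    """Round-robin over instances, skipping any that are marked unhealthy."""

    def __init__(self, instances):
        self.instances = list(instances)
        self.healthy = set(self.instances)
        self._rotation = cycle(self.instances)

    def mark_down(self, instance):
        self.healthy.discard(instance)

    def mark_up(self, instance):
        if instance in self.instances:
            self.healthy.add(instance)

    def route(self):
        # Try each instance at most once per request before giving up.
        for _ in range(len(self.instances)):
            candidate = next(self._rotation)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy instances available")


balancer = ActiveActiveBalancer(["zone-a", "zone-b"])
print(balancer.route())      # served by the first instance in rotation
balancer.mark_down("zone-a")
print(balancer.route())      # traffic now goes only to the remaining instance
```

Because both instances are live the whole time, a failure only changes where the next request lands; there is no startup delay to wait through.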

In an active/cold scenario, a clone of the active service is loaded into a server but only turned on once triggered. If the active service goes offline, then the cold copy is started. Depending on the configuration specifics, this could take seconds to minutes. This is a far cheaper option, as you will only pay for the running instances, but the chance of data loss goes up dramatically.

His real-world examples of where each makes sense are online poker tournaments versus online word search games. In an online poker tournament, having an always available connection is vital to keep the play fair and to manage the in-process games. In a word search scenario, only the final state or periodic status updates would need to be reported back to the game server, as most of the logic is executed on the user's device and is not time-sensitive. Ultimately, the larger the potential lost revenue, the more likely you should look into active/active architectures.

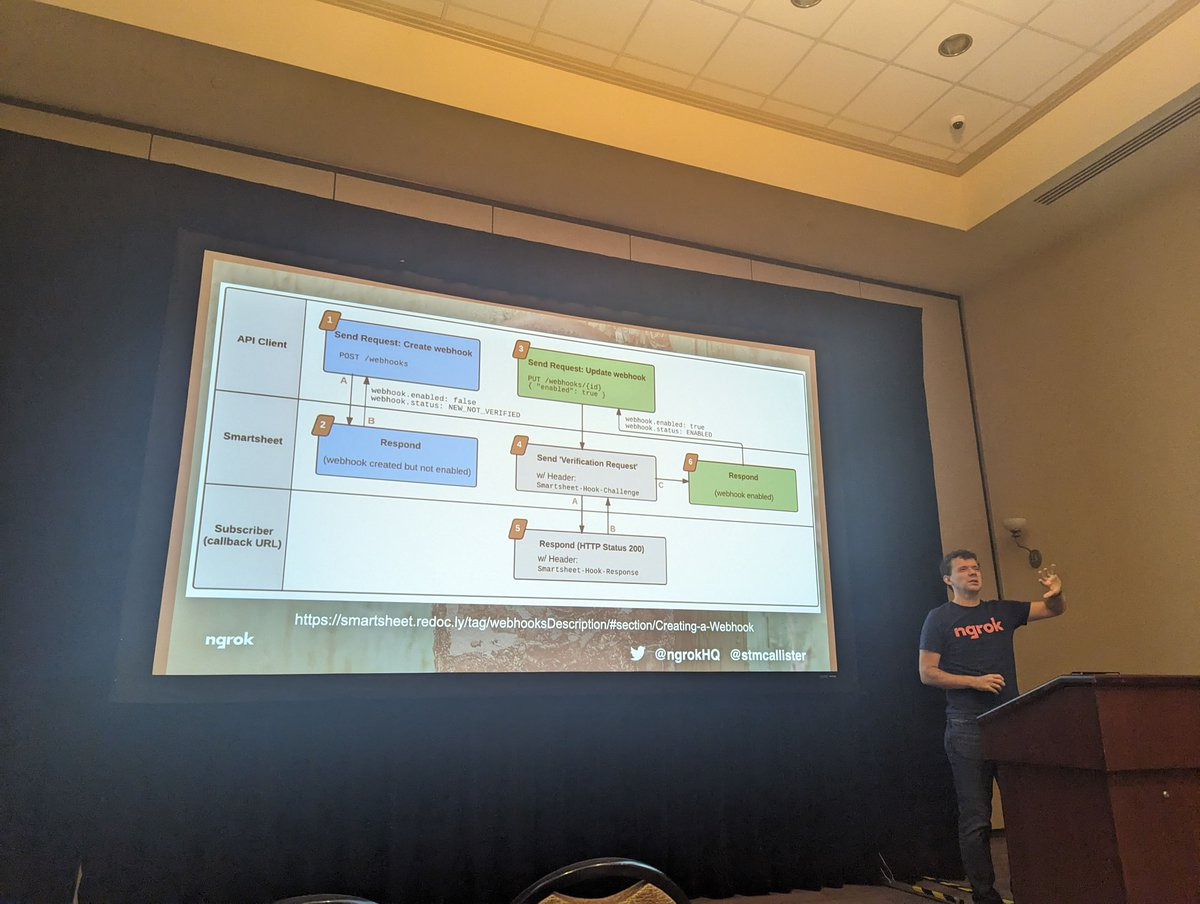

Webhook security matters

Armed with data from over 100 webhook providers, Scott McAllister, Developer Advocate at ngrok, presented "Simple Ways to Make Webhook Security Better." The data he cited is all available on Webhooks.fyi, both a directory of webhook providers and a collection of best practices for providing and consuming webhooks put together by the ngrok team.

Webhooks are a language-agnostic approach for sending messages between distributed systems. They are great because they free developers from caring about what language or platform a service is written on, narrowing the concern to how to parse the JSON, or less frequently XML, payload. They are also easy to test and mock in our work. However, they open a whole new set of security concerns, including interception, impersonation, modification, and replay attacks.

Scott said using HTTPS when setting up your webhooks is mandatory, encrypting the message while in flight. He also laid out multiple popular strategies for webhook security:

- HMAC - Hash-Based Message Authentication Code. The provider signs the message using the secret key plus a hashing algorithm and includes the signature in the webhook request as a header. The listener receives the request, repeats the same steps, signs and encodes the webhook message using the secret key, and compares the results, looking for everything to match. This is considered a low-complexity solution that still offers adequate protection for most services. This is used by companies such as GitHub, Shopify, Slack, Twilio, and GitGuardian custom webhook integrations.

- Asymmetric keys - The webhook provider uses a private key to sign requests, while the listener uses a public key to validate webhook calls. This extends HMAC with a non-repudiation step that adds a lot of complexity. This method is used by companies like SendGrid, PayPal, and Keygen.

- mTLS - Mutual TLS. Both the webhook service and the listener go through a TLS handshake, presenting trusted certificates before the message is passed. While very secure, this is a very complex solution; this is the method used by PagerDuty, Docusign, and Adobe Sign.
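
The HMAC flow described in the list above can be sketched with Python's standard library. This is a minimal illustration, assuming SHA-256 and a hex-encoded signature; the hashing algorithm, the encoding, and the header that carries the signature vary by provider, and the secret and payload below are made up. It also does not cover replay protection, which typically needs a signed timestamp.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, payload: bytes) -> str:
    """Provider side: sign the raw request body with the shared secret."""
    return hmac.new(secret, payload, hashlib.sha256).hexdigest()


def verify_signature(secret: bytes, payload: bytes, received_signature: str) -> bool:
    """Listener side: recompute the signature and compare in constant time."""
    expected = sign_payload(secret, payload)
    return hmac.compare_digest(expected.encode(), received_signature.encode())


# Hypothetical shared secret and webhook body.
secret = b"example-shared-secret"
body = b'{"event": "ping"}'

signature_header = sign_payload(secret, body)  # sent alongside the request
print(verify_signature(secret, body, signature_header))
print(verify_signature(secret, b'{"event": "tampered"}', signature_header))
```

Using `hmac.compare_digest` rather than `==` avoids leaking information through comparison timing, and signing the raw bytes of the body avoids mismatches caused by re-serializing the JSON.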

There are other methods as well, but these are the most common. Unfortunately, according to their industry survey, the second most common answer for 'what method do you use for your webhooks' was reportedly 'none.' Hopefully, Scott and his team can inspire more developers to implement better security for their webhooks.

A community for all

The attendees at dev up 2023 got to see a wide variety of talks, aimed at all skill and experience levels, from introductory to advanced. While there certainly were many code examples and deeply technical sessions, there were also talks about career advancement and how to be successful working at home. Everyone was made to feel welcome, no matter their background. The central goal all speakers had was to help their audience 'level up.'

Your author gave three different sessions as well, and helping people level up with code security was central to all. The first covered using git hooks to prevent yourself from committing secrets, as you can do with GitGuardian's ggshield. I also covered what to do if you experience a code leak, which unfortunately happens to almost all of us at some point. The final session, which sparked some really good conversations, was all about the Secrets Management Maturity Model. This model can help you judge where you are on your code security path and plan your route when used as a roadmap. We at GitGuardian would be happy to help you level up your code security posture through scanning for IaC misconfigurations, secrets detection, and intrusion or code leakage detection with GitGuardian Honeytoken.

There are a lot of folks out there who want to help you succeed as a developer or security professional. While events like dev up are a great way to connect, you don't need to wait for an annual event; there is likely an event in your area sometime soon. If you are looking for an awesome security community to join, we highly recommend checking out the OWASP meetings list or checking out their MeetUp group pages. Let's all get better at coding and code security together.