Austin, Texas, is the 10th largest city in the US and is constantly growing, both in population and in industry. Every year, dozens of major companies either relocate or expand into the Austin area. It is also home to six universities, including The University of Texas at Austin and Texas State. As the state capital, it hosts many government agencies as well. Folks from all these sectors came together in the last week of September to learn from one another at Texas Cyber Summit 2023.

Here are just a few of the highlights from this security-focused event.

AI is central in most security conversations

The theme of this year's event was artificial intelligence (AI) and its evolving role in security. The organizers picked the theme in November of last year, a little before the ChatGPT hype had really taken off. They correctly predicted that almost all conversations about security tooling and automation would center on AI and large language models (LLMs). The topic was certainly at the forefront of many conversations in the hallways and at social gatherings.

Almost every speaker had something to say about AI and LLMs, including Holly Baroody, Executive Director of the United States Cyber Command. In her keynote, "How Cyber Command is Shaping its Future through Innovation and Partnerships," she explained that her team is working to secure over 15,000 networks and over 3 million endpoints, and is leveraging AI wherever possible to gain an advantage. But she warned that our adversaries are doing the same thing, using AI to create new threats and exploits at an ever-increasing pace.

What AI means for cybersecurity

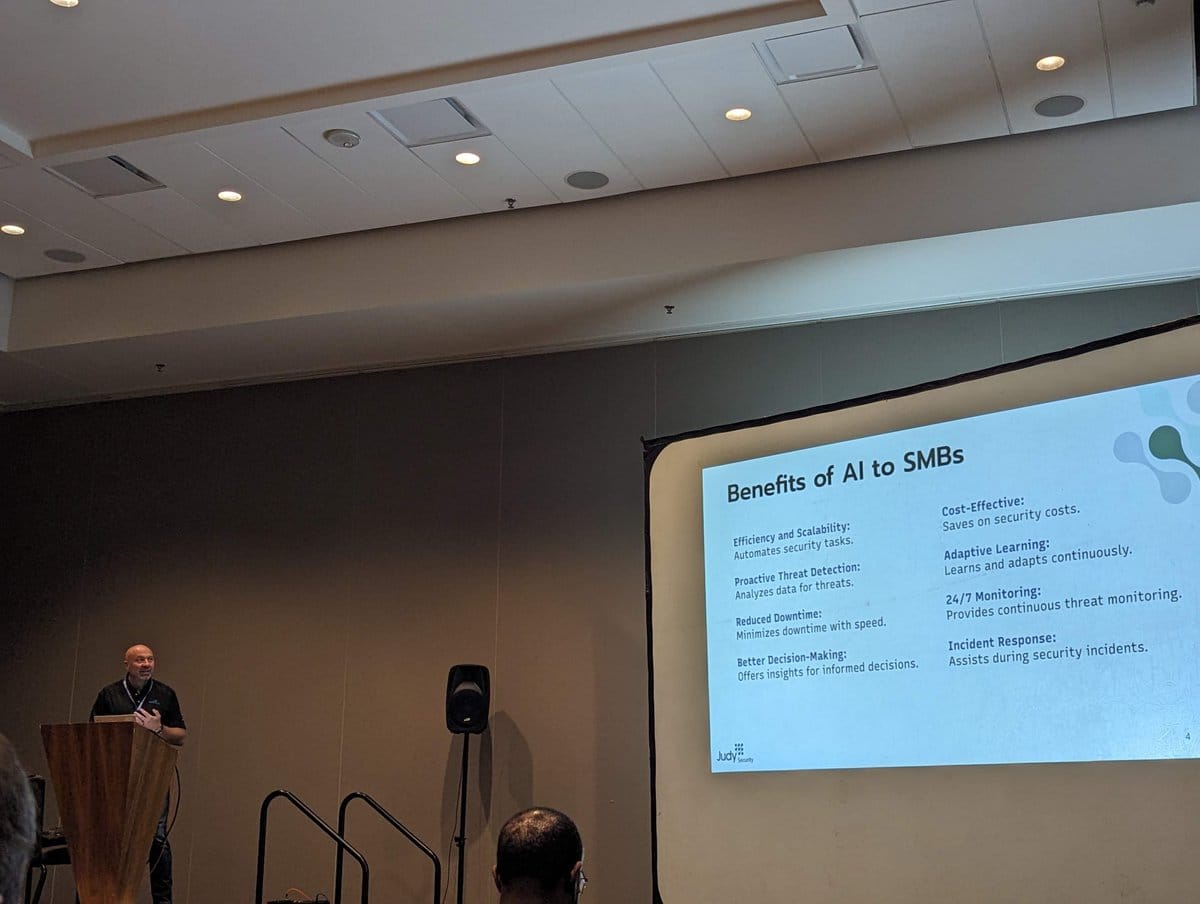

It might be easy to look at the advances being made with AI and assume you need to be a big company to reap the benefits. In his talk, "How will AI change the cybersecurity landscape?" Raffaele Mautone, CEO and founder of Judy Security, explained that small and medium-sized businesses (SMBs) stand to benefit significantly. AI helps them in two main ways: it makes automation possible, and it enables scale like never before.

One blocker that every company needs to overcome is the fear that AI will replace specific roles. The reality is that modern enterprises of all sizes simply have too much data for any one person or team to handle. AI can supplement a team's knowledge across the many tasks it handles, such as checking printer configurations for proper security settings while highlighting new and known vulnerabilities. AI models can also be trained to spot phishing attacks and kick off your automated response plan.

We must see AI as a way to empower the 'good guys.' There are teams and products out there working on AI-powered threat detection, data analysis at scale, enhanced authentication strategies, and predictive analytics, just to name a few use cases. He thinks we can get to a world of automated patching and potentially generate security awareness training based on new reports and announced vulnerabilities.

GitHub is working on better security for all

Jose Palafox, Application Security Executive at GitHub, laid out several ways his team is working to improve security in his talk, "Securing open-source projects on GitHub.com." As the largest code hosting platform in the world, GitHub sees itself on the front line in the fight to help people secure not only their own code but the entire software supply chain.

He said there are already plenty of alerts and scans out there, but high false-positive rates and sheer noise have driven many developers to simply tune them out. The context switch required to investigate a new alert is often highly disruptive to a developer's workflow, especially when no real action is needed, so most warnings go unheeded.

GitHub has been working on the Security Lab initiative, finding new vulnerabilities and filing Common Vulnerabilities and Exposures (CVE) reports. They recently filed their 500th report. Jose said they can show a 95% fix rate by maintainers once they are alerted.

Jose said the best step an individual can take is to turn on multifactor authentication (MFA) wherever possible. GitHub will require MFA to use the platform by the end of the year, something they have been warning folks about since 2021. They have mailed YubiKeys to over 500 organizations to make sure those organizations are ready for this change and stay secure.
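
GitHub accepts several second factors, including security keys like the YubiKeys mentioned above and time-based one-time passwords (TOTP) from authenticator apps. To show roughly how a TOTP second factor works, here is a minimal Python sketch of RFC 6238 using the common defaults (HMAC-SHA1, 30-second steps, six digits). The function names and the one-step clock-drift window in `verify` are our own illustrative choices, not GitHub's implementation; in production you would use a vetted library.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 of an 8-byte big-endian counter, then dynamic truncation."""
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # low 4 bits of the last byte pick a 4-byte window
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF  # drop the sign bit
    return str(code % 10 ** digits).zfill(digits)


def totp(key: bytes, unix_time: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP where the counter is the number of `step`-second intervals since the epoch."""
    if unix_time is None:
        unix_time = time.time()
    return hotp(key, int(unix_time // step), digits)


def verify(key: bytes, submitted: str, unix_time: Optional[float] = None,
           window: int = 1, step: int = 30, digits: int = 6) -> bool:
    """Accept a code from the current step or up to `window` steps either side (clock drift)."""
    now = time.time() if unix_time is None else unix_time
    return any(
        # constant-time comparison avoids leaking how many digits matched
        hmac.compare_digest(totp(key, now + i * step, step, digits), submitted)
        for i in range(-window, window + 1)
    )
```

The server and the authenticator app share the secret key once, at enrollment; after that, both sides derive the same short-lived code from the clock alone.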

Zero Trust requires applications that allow it

In his talk "The hole in your Zero Trust strategy - unmanageable applications," Matthew Chiodi, Chief Trust Officer at Cerby, said that 1 in 7 breaches are due to applications that cannot be managed with an identity provider. According to IBM research, a Zero Trust strategy, when done right, dramatically reduces the impact and cost of a breach. However, many organizations struggle to make Zero Trust a reality, with some mistakenly believing that MFA is good enough. In true Zero Trust, authorization is an ongoing process, not a one-time check at the edge during initial authentication, as with MFA.
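
The difference between one-time and ongoing authorization can be sketched in a few lines of Python. In this hypothetical example, every request is re-evaluated against the user's device posture, the age of their last MFA check, and an explicit allow policy, instead of being trusted because the user logged in once. The `POLICY` table, the 15-minute `MAX_MFA_AGE`, and all field names are illustrative assumptions, not details from the talk or from IBM's research.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Session:
    user: str
    last_mfa_at: float       # Unix time of the user's most recent MFA challenge
    device_compliant: bool   # result of some device posture check (hypothetical)


@dataclass(frozen=True)
class Request:
    session: Session
    resource: str
    action: str


# Hypothetical allow-list: (user, resource, action) triples that are permitted.
POLICY = {
    ("alice", "billing-db", "read"),
    ("alice", "billing-db", "write"),
    ("bob", "billing-db", "read"),
}
MAX_MFA_AGE = 15 * 60  # hypothetical: require a fresh MFA check every 15 minutes


def authorize(req: Request, now: Optional[float] = None) -> bool:
    """Evaluate every request on its own; nothing is trusted just because login succeeded."""
    now = time.time() if now is None else now
    s = req.session
    if not s.device_compliant:
        return False
    if now - s.last_mfa_at > MAX_MFA_AGE:
        return False  # stale authentication: force the user to re-verify
    return (s.user, req.resource, req.action) in POLICY
```

A session that was valid at login can still be refused later, for example when the device falls out of compliance or the MFA check ages out.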

The real challenge with implementing Zero Trust comes from what he called 'non-standard' applications, which he defined as ones that don't support single sign-on (SSO) or other security and identity standards. The issue is widespread. According to his research:

- 61% of the top 10,000 SaaS apps do not support SSO

- 42% do not support MFA

- 85% have coarse-grained role-based access control (RBAC) only, meaning they offer admin and user roles but no way to define custom roles

- 94% don't support the System for Cross-domain Identity Management (SCIM)

- 95% have no security APIs

While no single solution will fit every organization, he wants to bring awareness to this issue. He also outlined four basic steps we as an industry need to follow for better security with non-standard apps:

- Security organizations need to find a way to detect these apps

- Lobby vendors to extend identity provider capabilities to non-standard apps

- Automate security tasks like 2FA setup and user onboarding/offboarding to account for these apps.

- Report progress toward these goals.
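
The first step, detecting non-standard apps, usually starts with an inventory. Here is a minimal Python sketch, assuming you already have a list of apps with their identity capabilities recorded (the `App` fields mirror the categories in the statistics above). It flags any app that an identity provider cannot fully manage, which we approximate here as lacking SSO or SCIM, and lists every missing capability. Both that definition and the app names in the usage example are our own assumptions.

```python
from dataclasses import dataclass, fields
from typing import Dict, List


@dataclass(frozen=True)
class App:
    name: str
    sso: bool           # supports single sign-on via your identity provider
    mfa: bool           # supports multifactor authentication
    custom_roles: bool  # fine-grained RBAC beyond admin/user
    scim: bool          # supports SCIM provisioning
    security_api: bool  # exposes security or audit APIs


# Every boolean field of App, in declaration order.
CAPABILITIES = [f.name for f in fields(App) if f.name != "name"]


def missing_capabilities(app: App) -> List[str]:
    return [cap for cap in CAPABILITIES if not getattr(app, cap)]


def non_standard_apps(apps: List[App]) -> Dict[str, List[str]]:
    """Assumption: 'non-standard' means an IdP can't both sign users in (SSO) and provision them (SCIM)."""
    return {a.name: missing_capabilities(a) for a in apps if not (a.sso and a.scim)}
```

For example, with a hypothetical inventory of `wiki` (all capabilities), `legacy-crm` (none), and `design-tool` (SSO and MFA only), the report would name `legacy-crm` and `design-tool` along with their gaps, giving a starting point for the automation and vendor-lobbying steps.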

Zero Trust throughout the software supply chain

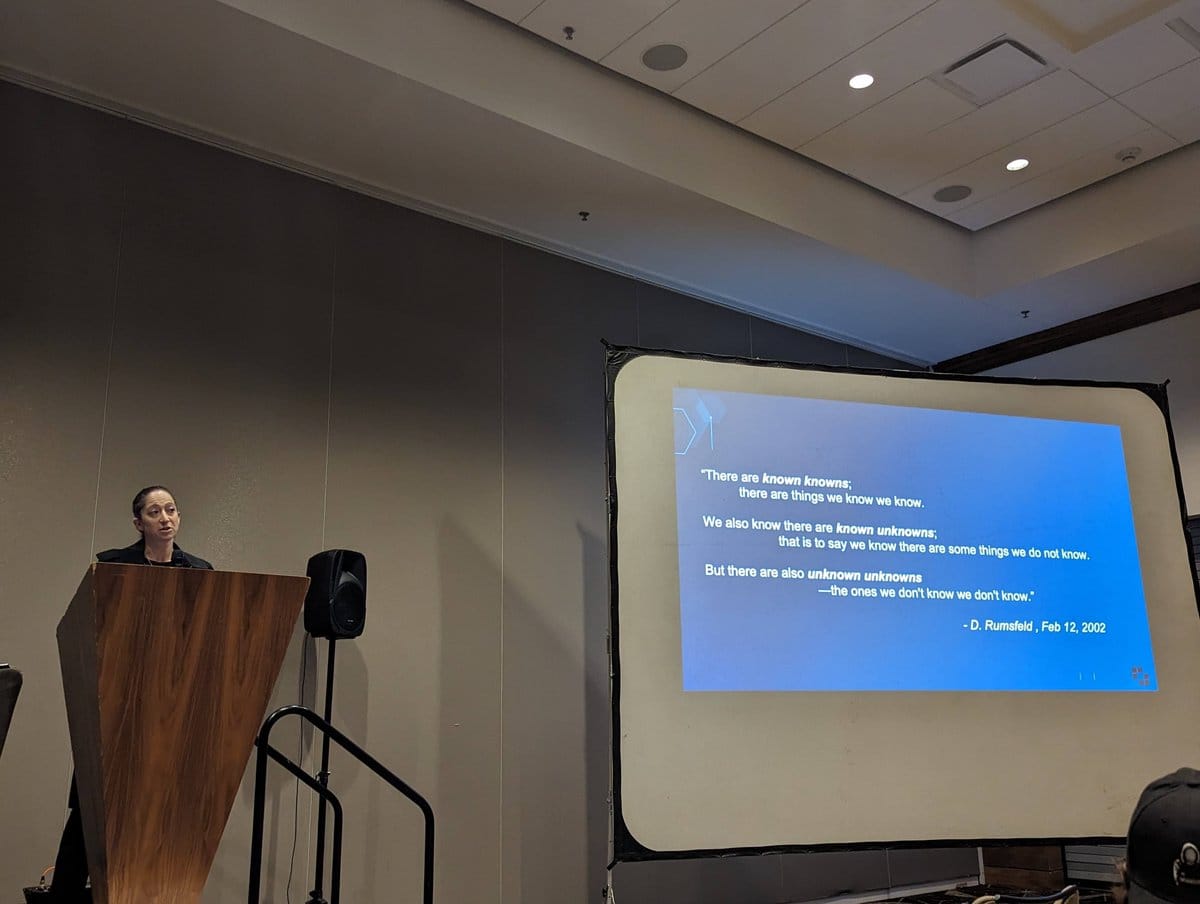

Zero Trust was another recurring topic across sessions. JC Herz, Senior Vice President of Software Supply Chain Solutions at Exiger, continued the theme in her session "Zero Trust in the Software Supply Chain." She said the world of software supply chains (SSC) and their security is very much a war front, and she cited what former US Defense Secretary Donald Rumsfeld once famously said: "There are known knowns; there are things we know we know. We also know there are known unknowns; that is to say, we know there are some things we do not know. But there are also unknown unknowns—the ones we don't know we don't know."

She walked us through the state of Zero Trust in the SSC, mapping it to these three knowledge categories. The "known knowns" are things like vulnerabilities and technical debt, which we should be dealing with. "Known unknowns" include entity resolution (the ability to map something to its true source) and supplier risks, as well as uncertainty about a project's future maintenance, especially if a maintainer has published no clear succession plan. "Unknown unknowns" are, well, unknown. Large-scale disruption in the SSC could come from big events that affect the whole marketplace or major providers, so we must stay vigilant for emerging risks.

To move toward a better Zero Trust future, we need to demand it from our vendors and make sure they are maintaining and updating their software to account for this architecture shift. She warned that, in her research, security tools are often the worst at this. She said we should demand a vendor list from each supplier, as well as a continuing support plan, to ensure each component in our SSC belongs there. If a service-level agreement (SLA) is available, make sure suppliers are living up to it.
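
Once a supplier hands over its component list (in practice often a software bill of materials in a format such as SPDX or CycloneDX), part of the review can be automated. This minimal Python sketch assumes the list has already been parsed into simple records; it flags components that are not on your approved list, that have no published support plan, or whose support has ended. The record fields and the component names and dates in the test are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional, Set


@dataclass(frozen=True)
class Component:
    name: str
    version: str
    supported_until: Optional[date]  # None means the supplier published no support plan


def review_components(declared: List[Component], approved: Set[str],
                      today: date) -> Dict[str, List[str]]:
    """Return findings keyed by 'name@version'; components with no findings are omitted."""
    findings: Dict[str, List[str]] = {}
    for c in declared:
        issues = []
        if c.name not in approved:
            issues.append("not on approved list")
        if c.supported_until is None:
            issues.append("no support plan")
        elif c.supported_until < today:
            issues.append("past end of support")
        if issues:
            findings[f"{c.name}@{c.version}"] = issues
    return findings
```

Running a check like this whenever a supplier ships an update turns "make sure each component belongs there" into a repeatable gate rather than a one-time audit.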

What to do, and not do, in a ransomware attack

Donovan Farrow, Founder and CEO of Alias Infosec, presented multiple horror stories as well as some tales of success when dealing with ransomware in his talk "The Good. The Bad. The Hacked." The main issue for most companies experiencing a ransomware attack is that they have no hands-on experience with incident response. As a result, many organizations wait and try to handle the situation themselves at first instead of immediately calling in experienced teams that specialize in this type of attack.

He contrasted a good response with a bad one and described some of the ugly things he has seen in extreme cases.

What does a good ransomware response involve?

- Having an Incident Response Plan in place and practiced.

- Contacting the correct team in a timely manner.

- Preserving all records and communications.

- Finding the exploit quickly and preventing future access.

- Moving as a team.

By contrast, a bad ransomware response means:

- No incident response plan is in place.

- Nobody knows who to call. Is it the vendor? Who knows the process and policy needed?

- "Go ahead and print that resume." Ransomware attacks are often also turnover events, with key team members finding other opportunities as the business might not survive.

- Everyone acts as if there is no real threat until it is much too late.

Donovan also shared "The Ugly": things he has seen while working some very egregious cases, which you should avoid:

- Deny anything has happened.

- Hire inexperienced attorneys who do not have a background in ransomware.

- Lie to your customers.

What Log4Shell has taught us about Kubernetes security

As most people will remember, Log4Shell was a major security event that shook the tech world. In their very technical session "The Platform Engineer Playbook - 5 Ways to Container Security," Eric Smalling, Sr. Developer Advocate at Snyk, and Marino Wijay, Developer Advocate at Solo.io, talked through some practical ways to prevent another such incident from impacting your container deployments.

The central issue with Log4Shell is that once attackers get a terminal open on the remote host, they very often have full Linux privileges and tools at their disposal. Making sure that anyone who gains access does not have full, unfettered access to all possible systems and tooling is a huge first step. It is also important to understand how image layers work at both build time and runtime. You should leverage multi-stage builds that allow checks at various points along the build path. It is also vital to use only known and repeatable image builds, which is what Chainguard works to provide. Allowing only certain tools, and disabling the ability to install new ones after the build is complete, further hardens your security.

Code scanning is a vital step in the process. Besides checking for outdated versions and known vulnerabilities, scans should also look for misconfigurations. For container configuration, they shared a list of dos and don'ts:

- Don't run as root. You likely don't need it, and it gives anyone who gains access complete control.

- Do use numeric user IDs (UIDs) when granting permissions. This makes it harder for an intruder to tell what role a given user has.

- Don't use privileged containers. A privileged container has access to all of the host's devices.

- Do set `allowPrivilegeEscalation` to `false`.

- Don't allow the full list of Linux tools.

- Do drop a container's Linux capabilities to only what it needs.

- Do run the container with `readOnlyRootFilesystem` set to `true`. This level of immutability makes exploiting your container harder.
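
Taken together, the dos and don'ts above map onto fields of a Kubernetes container `securityContext`. This minimal Python sketch builds such a Pod manifest as a dictionary and prints it as JSON, which `kubectl apply -f` accepts as well as YAML. The pod name, image, and UID 10001 are placeholders, and dropping `ALL` capabilities assumes the app needs none; add back only specific capabilities it truly requires.

```python
import json


def hardened_container(name: str, image: str, uid: int = 10001) -> dict:
    return {
        "name": name,
        "image": image,
        "securityContext": {
            "runAsNonRoot": True,               # kubelet refuses to start the container as UID 0
            "runAsUser": uid,                   # numeric UID rather than a user name
            "privileged": False,                # no access to all host devices
            "allowPrivilegeEscalation": False,  # sets no_new_privs, so setuid binaries can't gain privileges
            "readOnlyRootFilesystem": True,     # immutable root filesystem
            "capabilities": {"drop": ["ALL"]},  # start from zero Linux capabilities
        },
        # A writable scratch directory, since the root filesystem is read-only.
        "volumeMounts": [{"name": "tmp", "mountPath": "/tmp"}],
    }


def hardened_pod(pod_name: str, image: str) -> dict:
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            "containers": [hardened_container("app", image)],
            "volumes": [{"name": "tmp", "emptyDir": {}}],
        },
    }


if __name__ == "__main__":
    # Placeholder names; the output can be piped to `kubectl apply -f -`.
    print(json.dumps(hardened_pod("example-app", "registry.example.com/app:1.0"), indent=2))
```

The `emptyDir` volume at `/tmp` reflects a common trade-off: many apps need some scratch space, and mounting one small writable directory keeps the rest of the filesystem immutable.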

You should use a policy agent to make sure these best practices are followed. They recommended looking into Open Policy Agent, Kyverno, and Pod Security Admission, which is built into Kubernetes.
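
To show what such a policy agent checks, here is a toy admission-style validator in Python. It is not the API of Open Policy Agent, Kyverno, or Pod Security Admission; it simply walks a Pod manifest (as a dictionary) and reports containers that break the hardening rules listed earlier. The function name and messages are our own. Leaving `allowPrivilegeEscalation` unset does not disable escalation in Kubernetes, so the check requires an explicit `false`.

```python
from typing import List


def pod_violations(pod: dict) -> List[str]:
    """Toy admission check: report containers that break the container hardening rules."""
    spec = pod.get("spec", {})
    pod_ctx = spec.get("securityContext", {})
    problems = []
    for container in spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext", {})
        if ctx.get("privileged", False):
            problems.append(f"{name}: privileged containers are not allowed")
        # Unset does not disable escalation, so require an explicit false.
        if ctx.get("allowPrivilegeEscalation", True):
            problems.append(f"{name}: allowPrivilegeEscalation must be false")
        # The container-level setting wins; otherwise fall back to the pod-level one.
        if not ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot", False)):
            problems.append(f"{name}: runAsNonRoot must be true")
        if not ctx.get("readOnlyRootFilesystem", False):
            problems.append(f"{name}: readOnlyRootFilesystem must be true")
        if "ALL" not in ctx.get("capabilities", {}).get("drop", []):
            problems.append(f"{name}: capabilities must drop ALL")
    return problems
```

A real admission controller would reject the Pod whenever this list is non-empty; the dedicated tools add what this sketch lacks, such as policy-as-code languages, exemptions, and audit modes.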

Additionally, you need to control network traffic. Set ingress and egress rules so that traffic can only enter from known sources and only leave for preset addresses. Every request should also be authorized: in a Zero Trust architecture, we should refuse any request we can't authorize.
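
The default-deny idea behind these ingress and egress rules can be sketched with Python's standard `ipaddress` module: traffic is allowed only if it matches an explicit rule, and everything else is refused. In a cluster you would express this with Kubernetes NetworkPolicy objects or a service mesh rather than application code; the CIDR ranges, ports, and service layout here are hypothetical.

```python
import ipaddress
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Rule:
    cidr: str  # allowed peer range, e.g. "10.0.1.0/24"
    port: int  # allowed destination port


# Hypothetical rules for an API service: only the frontend subnet may call it,
# and it may only talk to one database host.
INGRESS = [Rule("10.0.1.0/24", 8080)]
EGRESS = [Rule("10.0.2.15/32", 5432)]


def is_allowed(rules: List[Rule], peer: str, port: int) -> bool:
    """Default deny: allow only if some rule matches both the peer address and the port."""
    ip = ipaddress.ip_address(peer)
    return any(ip in ipaddress.ip_network(r.cidr) and port == r.port for r in rules)
```

Because an empty rule list allows nothing, forgetting a rule fails closed, which is the behavior a Zero Trust network should have.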

They concluded that proper container security means accounting for all of the following items:

- Code and container image scanning

- Well-understood best practices implemented in container runtime configuration

- Policy enforcement in Kubernetes

- Authentication and Authorization for containers

- Encryption and identification for all services

Git and OWASP

Your author was fortunate enough to present two different sessions. In my session "Don't commit your secrets: git hooks to the rescue," I taught some git basics and showed how to keep secrets out of your repos with git hooks. Setting up the right git hooks for this is very quick with ggshield, the GitGuardian CLI.
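
For readers who want to see the mechanism, here is a deliberately tiny pre-commit hook in Python. Git runs an executable `.git/hooks/pre-commit` before each commit and aborts the commit if the hook exits non-zero; this sketch scans only the added lines of the staged diff against three illustrative regular expressions. It is not how ggshield works internally, and a handful of regexes will miss many real secrets, so treat it as a teaching aid rather than a replacement for a maintained scanner.

```python
#!/usr/bin/env python3
import os
import re
import subprocess
import sys
from typing import List, Optional, Tuple

# Illustrative patterns only; real scanners use far more detectors and validity checks.
PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hard-coded password": re.compile(r"""(?i)\bpassword\s*[:=]\s*['"][^'"]{8,}['"]"""),
}


def find_secrets(diff: str) -> List[Tuple[Optional[str], str]]:
    """Scan only lines added in a unified diff; return (file, pattern label) pairs."""
    findings = []
    current_file = None
    in_header = False
    for line in diff.splitlines():
        if line.startswith("diff --git "):
            in_header = True
        elif line.startswith("@@"):
            in_header = False
        elif in_header and line.startswith("+++ "):
            # "+++ b/path/to/file" names the file the following hunks modify
            current_file = line[6:] if line.startswith("+++ b/") else line[4:]
        elif not in_header and line.startswith("+"):
            for label, pattern in PATTERNS.items():
                if pattern.search(line):
                    findings.append((current_file, label))
    return findings


def main() -> int:
    staged = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, text=True, check=True,
    ).stdout
    findings = find_secrets(staged)
    for path, label in findings:
        print(f"pre-commit: possible {label} in {path}", file=sys.stderr)
    return 1 if findings else 0  # a non-zero exit makes Git abort the commit


# Run the scan only when Git invokes this file as the pre-commit hook.
if __name__ == "__main__" and os.path.basename(sys.argv[0]) == "pre-commit":
    sys.exit(main())
```

Save it as `.git/hooks/pre-commit` and make it executable with `chmod +x`. Keep in mind that `git commit --no-verify` skips this hook, so it is a safety net for honest mistakes rather than an enforcement point.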

The second session was all about OWASP, called "App Security Does Not Need To Be Fun Ignoring OWASP To Have A Terrible Time." GitGuardian is proud to sponsor several OWASP projects, including the Austin OWASP chapter. The session focused on the Application Security Wayfinder and the offerings that the community has mapped to each stage of the software development lifecycle. My goal is to make people aware of available OWASP tools, with the hope of making it easier for developers and security teams to collaborate.

Improving as a community

With over 1,300 attendees, this year's Texas Cyber Summit brought together a wide variety of folks. Attendees ranged from the NSA and CYBERCOM to industry leaders like Snyk and GitHub, and the event also welcomed students and folks looking to get started in cybersecurity. The goals we all had in common were to learn, share our knowledge, and grow as a community. This year's theme was very well timed, as AI has been at the forefront of so many conversations. I can't wait to see what theme the Texas Cyber Summit community picks for next year and the conversations that happen around it.

No matter where you are in the world or on your security journey, we are all in this together. If you need any help navigating the world of secrets detection, Infrastructure as Code security scanning, or intrusion and leak detection (which we offer through GitGuardian Honeytoken), we would be glad to help. We can even help you figure out where you are on your secrets management maturity journey through our questionnaire.