Many cybersecurity professionals followed Anthropic's announcement on Friday of Claude Code Security. The news sparked the beginnings of a panic among cybersecurity stocks. It also raised many questions from domain experts, investors, and security enthusiasts.

Anthropic's announcement

Anthropic introduced Claude Code Security: a tool that scans entire codebases for security vulnerabilities and can propose fixes directly within developer workflows. The tool leverages the reasoning capabilities of the latest foundation model to provide a new experience. In a world where code is generated only by AI, this can sound very much like the death of code security.

Our vision

Eighteen months ago, SAST, SCA, and IaC security were areas where we had real traction and could see ourselves expanding. But as AI tooling started reshaping how code gets written, we made a tough call: we stopped these initiatives and went all-in on what we believed would matter most, protecting enterprises against leaked secrets and mismanaged non-human identities (NHIs).

We envisioned a future where identity is central to security in the AI era, with secrets enabling AI systems to access data and take actions. After years of pioneering secrets detection, we watched the problem grow as LLMs emerged: more API keys for AI services, more generated code (often less secure), and more agents requiring sophisticated access to a myriad of tools. The net result was more exposed secrets. Yet the problem of overseeing and securely managing these secrets remains unsolved.

The paradigm has shifted. Humans used to hardcode secrets in their code. Now AI systems hold broad access across many systems, and humans, both coders and non-coders, prompt them in ways that create new vulnerabilities.

Eighteen months later, here is where we stand.

What isn't changing

Best in class secrets detection

GitGuardian is the leader in secrets detection. We are the only solution able to scan large volumes of data at scale (50 MB/s per core, at negligible cost) across more than 30 different data sources (ticketing systems, messaging apps, container registries, …).

Our latest State of Secrets Sprawl report (to be released in March) clearly shows that AI-assisted coding has boomed over the last 12 months, with the number of AI-generated commits growing tenfold over the past year. We also see that these commits contained more secrets in the first half of 2025. However, the number of secrets per commit began decreasing toward the end of the year, probably reflecting improvements in foundation models.

Remediation is where the real work begins

Our value proposition is very different from static analysis or dependency vulnerability scanning. Remediating a secret exposure is a hard problem, one that involves manual actions and human control. It's not just about fixing the code. It's about not breaking production, mapping the infrastructure, assessing the blast radius of a secret rotation, and coordinating that effort across your organization.

GitGuardian has spent the last seven years exploring and pioneering this area.

Secrets are a vulnerability not only when they are exposed but also when they are mismanaged. For that reason, a year ago we released NHI governance, a product designed to provide a 360° view of how secrets are spread and used across your organization. We don't look only at leaked secrets. Thanks to ggscout, we can also observe how your secrets are disseminated across servers, apps, and vaults, with context.

You cannot manage what you can't see. You need this inventory to remediate leaked secrets and to spot weaknesses in your organization: secrets that are used across multiple environments, overpermissioned, never rotated, publicly exposed, or sprawling across all your vaults.
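To make the weakness categories above concrete, here is a minimal sketch of how they could be checked against an inventory. The `SecretRecord` fields, the 90-day rotation threshold, and the set of "broad" scopes are all hypothetical, chosen only for illustration. This is not GitGuardian's data model or detection logic.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional

# Hypothetical inventory record; field names are illustrative, not a real schema.
@dataclass
class SecretRecord:
    name: str
    environments: set[str] = field(default_factory=set)
    scopes: set[str] = field(default_factory=set)
    last_rotated: Optional[date] = None
    publicly_exposed: bool = False
    vaults: set[str] = field(default_factory=set)

# Assumed policy thresholds for this sketch only.
MAX_ROTATION_AGE = timedelta(days=90)
BROAD_SCOPES = {"admin", "*"}

def find_weaknesses(record: SecretRecord, today: date) -> list[str]:
    """Return the weakness labels that apply to one inventory record."""
    issues = []
    if len(record.environments) > 1:          # same secret reused across environments
        issues.append("multi-environment")
    if record.scopes & BROAD_SCOPES:          # any overly broad permission
        issues.append("overpermissioned")
    if record.last_rotated is None or today - record.last_rotated > MAX_ROTATION_AGE:
        issues.append("not-rotated")          # never rotated, or rotated too long ago
    if record.publicly_exposed:
        issues.append("publicly-exposed")
    if len(record.vaults) > 1:                # copies sprawled across several vaults
        issues.append("vault-sprawl")
    return issues

# Usage: a database password shared by staging and production.
today = date(2025, 6, 1)
db_password = SecretRecord(
    name="payments-db-password",
    environments={"staging", "production"},
    scopes={"admin"},
    last_rotated=date(2024, 11, 1),
    vaults={"vault-a", "vault-b"},
)
print(find_weaknesses(db_password, today))
# → ['multi-environment', 'overpermissioned', 'not-rotated', 'vault-sprawl']
```

Every check here is a cheap predicate over a single record. The hard part in practice is building an accurate, contextualized inventory to run such checks against.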

Enterprises are not looking for point solutions

One key lesson we have learned is the importance of orchestrating identity security at scale. Serving Fortune 500 enterprises for the past seven years has taught us that point solutions are not enough. Our customers want a unified experience with several key capabilities.

Think integrations with multiple data sources, notifications, aggregated analytics and metrics, RBAC, user management, fine-tuning, and customization.

This is what we are proposing at GitGuardian.

Emerging threats, new horizons

In recent months, GitGuardian has pushed in several directions to provide an even stronger security posture as the attack surface grows.

We believe endpoint security is becoming critical. Developers' laptops are the next frontier for attackers. We've seen the emergence of sophisticated attacks (such as Nx/s1ngularity or Shai-Hulud) that specifically exploited weaknesses on developer machines.

This is why we built our local scanning and identity inventory tool. It is the ultimate tool for security engineers who need a clear understanding of which machines hold which secrets. You can deploy it across your workforce via MDM, and GitGuardian will surface the weaknesses and points of attention, such as overprivileged credentials or production credentials that end up on a developer's laptop.

In the past 12 months, we have also worked hard on agentic security. Managing your attack surface has become THE biggest challenge for security engineers in the AI era.

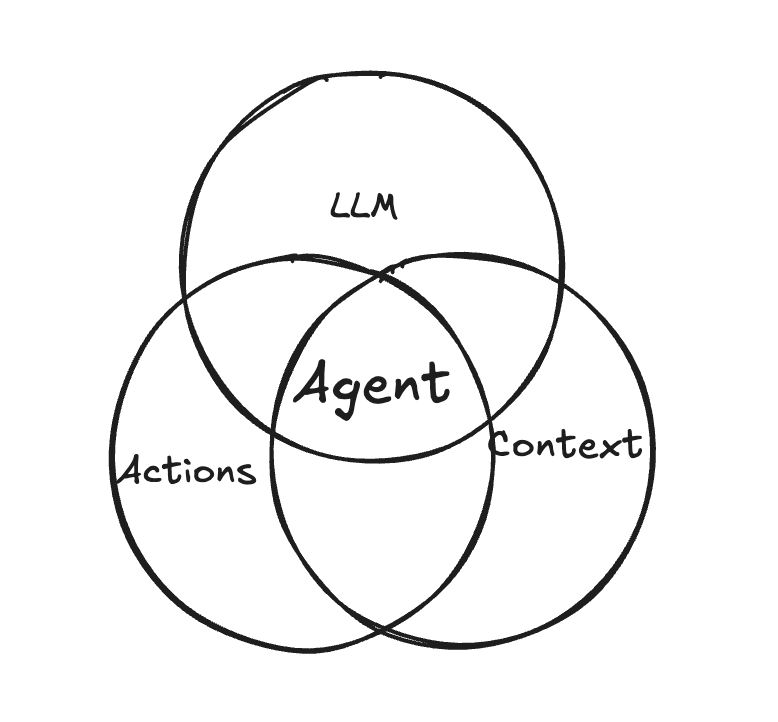

I like to think of an agent as LLM + context + ability to perform actions. An agent becomes more powerful as each of these dimensions grows.

The problem is that both context and the ability to perform actions require authentication. How do you secure them?

On top of that, consider the scale involved: AI does not enter your enterprise in a perfectly supervised manner. Shadow IT, experiments launched in every department by people of all profiles, and third-party tools used by your SaaS providers all make your agentic footprint bigger than you think.

All in all, getting a 360° view of your agents and identity security is harder than ever. This is what we are committed to achieving at GitGuardian.

Because of these concerns about endpoint security and attack surface management, we are also doubling down on our long-standing HoneyTokens offering to proactively detect breaches. We now support a handful of custom providers and can disseminate these traps at scale across your organization.
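The idea behind a honeytoken is simple. A decoy credential is planted somewhere an attacker might look, and no legitimate process ever uses it. Any attempt to use it is therefore a high-signal breach indicator, and the token itself tells you where the leak happened. The sketch below illustrates that principle only. `HoneytokenRegistry`, `plant`, `check_event`, and the `decoy_` prefix are hypothetical names, not GitGuardian's HoneyTokens API.

```python
from __future__ import annotations

import secrets
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Alert:
    token_location: str  # where the triggered decoy had been planted
    source_ip: str       # who tried to use it

class HoneytokenRegistry:
    """Hypothetical registry mapping each decoy token to the place it was planted."""

    def __init__(self) -> None:
        self._planted: dict[str, str] = {}

    def plant(self, location: str) -> str:
        """Create a random decoy credential and remember where it is placed."""
        token = "decoy_" + secrets.token_hex(16)
        self._planted[token] = location
        return token

    def check_event(self, presented_token: str, source_ip: str) -> Optional[Alert]:
        """Return an alert if an authentication attempt used a planted decoy."""
        location = self._planted.get(presented_token)
        if location is None:
            return None  # not one of our decoys, so nothing to report
        return Alert(token_location=location, source_ip=source_ip)

# Usage: plant a decoy on a laptop, then observe an attacker replaying it.
registry = HoneytokenRegistry()
decoy = registry.plant("laptop-042:~/.aws/credentials")
print(registry.check_event(decoy, "203.0.113.7"))
# → Alert(token_location='laptop-042:~/.aws/credentials', source_ip='203.0.113.7')
```

Recording a distinct location for each token is what turns a single alert into an answer to "which machine or repository leaked?"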

"The road ahead"

Anthropic describes the road ahead: how attackers benefit from AI, and how Claude Code Security will make codebases more secure. We are certainly living in exciting times. But remember: hackers don't break in, they log in. With AI generating more and more code, and models being trained to generate safer code, the attack surface is changing. But different does not mean smaller; it now extends far beyond code. GitGuardian and all our teams are committed to making AI a safe place. This starts with securing your non-human identities and secrets across your whole organization.