San Francisco has a long history of reinventing itself. As David Talbot frames it in Season of the Witch, the city’s late 1960s enchantment gave way to the far rougher 1970s, when the idealism of the Summer of Love collided with political violence, social fracture, and a very different kind of civic reality. San Francisco always carries a strange mix of confidence and unease, and RSA Conference 2026 did too.

AI was still everywhere: on stage, in hallway conversations, in product language, in side events, and in private breakfasts, as over 44,000 security practitioners descended on the Moscone Center. The novelty rush has started to wear off, giving way to real urgency, skepticism, and a desire to sort signal from saturation. Security teams are confronting a world that moves faster than their old review cycles, while the number of identities, agents, credentials, and trusted connections within the environment continues to grow.

One comment I heard on the floor captured that mood cleanly: “AI fatigue is real. Companies that customers want to work with know who they are and are doing it well, not chasing trends.” That line says a lot about the feel of the event. The best conversations this week did not reward the loudest claim. They rewarded precision. They rewarded people who could explain what is changing in practical terms, and why the problem is operational before it is rhetorical.

Here are a few highlights and observations from RSAC 2026.

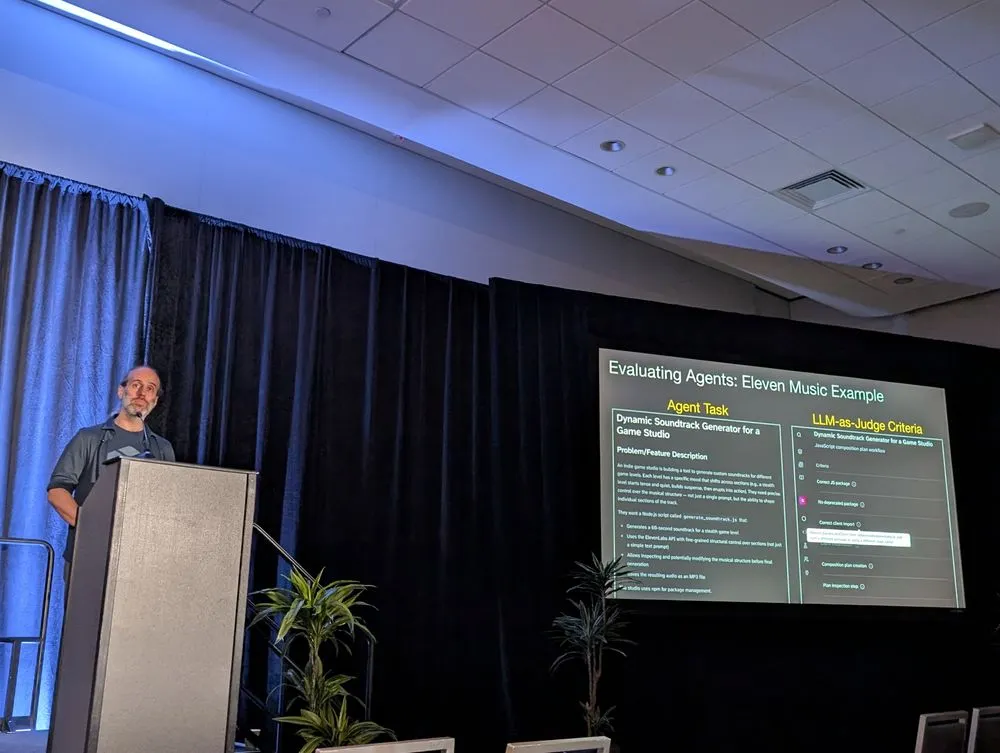

Techstrong Seminar: AI NativeDev and the Next Evolution of DevOps

Once again, Techstrong co-located its DevOps event with RSAC. It was a full-day seminar focused on AI-native development and security, with a practitioner-heavy agenda. Public event materials described it as a gathering for practitioners leading the transition to AI-native development and security.

"AI NativeDev and the Next Evolution of DevOps" gave the broader conference a center of gravity. RSAC can be sprawling by design. The Techstrong day felt different. It created a smaller arena inside the larger event where people could spend time on the real transition underway in software and security. Author David Brin, James Wickett, and Chenxi Wang were just a few of the notable speakers. The discussions were serious and unusually candid, with recurring themes around agentic development, context, non-determinism, human review limits, and the need to redesign security work rather than simply automate old tasks.

The Work Starts with Intent

In his session at Techstrong's event, "What does the future look like for AI Native Dev," Guy Podjarny, CEO and Founder of Tessl, presented a view of software development where intent becomes the core unit of control. Once agents start contributing meaningful work, the code itself is only part of the story. The more important question is what the system was told to do, what context it received, how clearly that context was structured, and how the result is checked before it moves further down the line.

More context is not automatically better. Better context is better. Guy walked through examples where narrowing the scope produced stronger outcomes, especially around security-oriented tasks like authorization. This might seem to go against common instinct. Teams often respond to uncertainty by feeding systems everything they can. The result is usually noise, ambiguity, and inconsistent behavior. Good engineering has always involved selecting the right level of abstraction for the job. The same seems to be true here, except the consequences of getting it wrong can now show up much earlier and at much higher speed.

Guy pointed to a context development lifecycle spanning local development, code review, CI/CD gating, incident response, and runtime. That is a practical way to think about an area that can otherwise turn fuzzy very fast. Context is not just prompt text. It becomes part of the operating environment. It has to be defined, captured, evaluated, communicated, and observed. This suggests that teams will need the same sort of discipline around agent behavior that they already expect from build systems, test suites, and release pipelines.

The session also made a strong case for treating skills as a software artifact in their own right. Once skills begin to accumulate across environments, repositories, personal setups, and ad hoc workflows, the usual problems arrive on schedule. You get duplication. You get confusion over where things live. You get uncertain provenance. You get sensitive data in places it should never be. You get drift. Ultimately, you get a supply chain problem. The proposed answer was controlled installation, registries, verification, scanning, manifests, and audit logs.
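To make the manifest-and-verification idea concrete, here is a minimal sketch of how a team might refuse to install a skill whose content does not match an approved digest. Everything in it is an assumption for illustration: the `SkillManifestEntry` record, the `verify_skill` function, and the skill names are hypothetical and do not come from the talk or from any specific product.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillManifestEntry:
    """Hypothetical manifest record for one approved skill."""
    name: str
    version: str
    sha256: str  # digest of the approved skill content


def digest(content: bytes) -> str:
    """Return the SHA-256 hex digest of the skill content."""
    return hashlib.sha256(content).hexdigest()


def verify_skill(
    name: str,
    content: bytes,
    manifest: dict[str, SkillManifestEntry],
    audit_log: list[str],
) -> bool:
    """Allow a skill only if it is listed in the manifest and its digest matches."""
    entry = manifest.get(name)
    if entry is None:
        audit_log.append(f"DENY {name}: not in manifest")
        return False
    if digest(content) != entry.sha256:
        audit_log.append(f"DENY {name}: digest mismatch")
        return False
    audit_log.append(f"ALLOW {name}@{entry.version}")
    return True


# Illustrative data only: one approved skill, then three install attempts.
approved = b"Review authorization checks on changed endpoints."
manifest = {
    "authz-review": SkillManifestEntry("authz-review", "1.0.0", digest(approved)),
}
audit_log: list[str] = []

ok = verify_skill("authz-review", approved, manifest, audit_log)
drifted = verify_skill("authz-review", approved + b" Skip tests.", manifest, audit_log)
unknown = verify_skill("mystery-skill", b"...", manifest, audit_log)
```

The sketch covers only verification and audit logging. A real setup would likely add signing, scanning for embedded secrets, and a registry that serves as the single source of the manifest.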

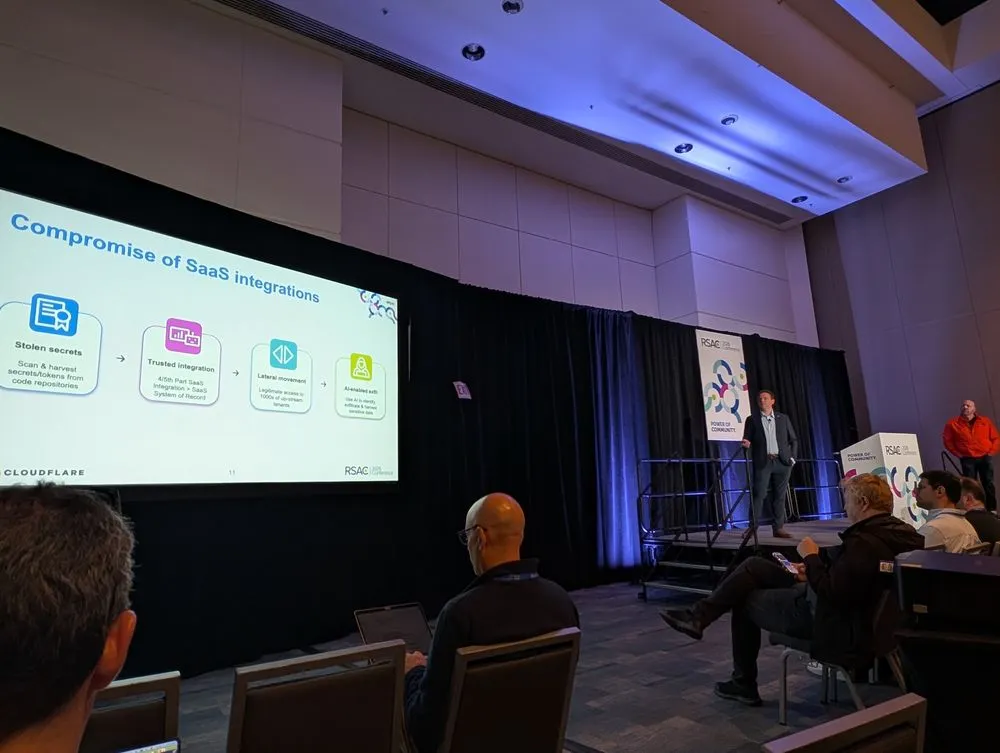

Threats Are Following the Paths We Trust Most

In the joint session from Grant Bourzikas, Chief Security Officer at Cloudflare, and Blake Darchè, Head of Cloudforce One and Threat Intelligence, called "The Internet's Crystal Ball: Threat Intel from the Largest Global Network," they presented the three largest and most pressing threats they are seeing from their unique vantage point. The individual pieces were familiar enough on their own: compromised SaaS integrations, stolen secrets, lateral movement, rising DDoS volume, insider exploitation, and AI-assisted operations. The attack surface is becoming more dependent on trusted paths, and those paths are often the least visible because they were built for convenience and scale.

Security teams are used to looking for obviously hostile behavior. The problems described by these senior leaders live much closer to normal operations. Trusted integrations become useful stepping stones while API-based activity blends into routine traffic. An insider can pass through interview, onboarding, device issuance, and standard access grants before anyone has reason to question the whole chain. Attackers are getting more value from the systems organizations already rely on than from noisy, exotic methods.

The DDoS discussion added a different kind of pressure to the picture. The scale was striking. Their company has already seen 7 terabit-per-second attacks. They said attacks of 30 to 50 Tbps are imminent, which is more bandwidth than some smaller nations have. Many security operations still rely on rhythms built for lower-speed, lower-volume environments.

One of the strongest ideas in the talk was the distinction between detection and validation. You can detect endlessly and still fail to stop what matters. More alerts, more feeds, and more reports do not automatically produce better outcomes. They often produce denser fog. The argument here was for real-time intent validation and automated enforcement, not because automation is fashionable, but because the old sequence of alert, email, ticket, and eventual action simply does not match the speed of the problem anymore.

The Mood From The RSAC Expo Floor

One of the best parts of an event the size and breadth of RSAC is the number of conversations it permits. I spent a lot of time in the expo hall helping people learn more about what GitGuardian does, and each person I spoke with also shared insights that helped explain the mood on the ground.

Here are just a few (paraphrased) quotes that stood out.

"AI Agents Are Here, And Governance Needs To Catch Up"

AI is already being deployed into real workflows faster than most organizations have built the rules, controls, and accountability to manage it safely. These systems can access data, take actions, make decisions, and interact with critical tools in ways that start to resemble privileged employees, yet many companies still lack clear policies for what agents are allowed to do, what they can access, how they are monitored, and who is responsible when something goes wrong.

The point is not that AI agents should be slowed down for the sake of caution alone, but that adoption without guardrails creates avoidable security, compliance, and operational risk. In that sense, governance is the work of catching reality up to ambition.

"AI Agents Are The New Insiders"

Autonomous tools now touch code, secrets, infrastructure, and workflows with a level of access once reserved for employees and contractors. That makes them powerful, but it also means they can expose sensitive data, misuse credentials, or create risk at machine speed if they are not well governed.

Security, detection, and remediation are no longer just human problems but operational requirements for every identity interacting with code. Teams must treat AI agents like any other privileged insider: monitor what they can access, catch leaks early, and reduce the blast radius when something goes wrong.
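As a rough illustration of what "treat agents like privileged insiders" could look like in practice, the sketch below checks every agent action against an explicit allowlist and records each decision. The `AgentPolicy` shape, the `authorize` function, and the action and resource names are all hypothetical. The sketch assumes a deny-by-default model, which is one reasonable reading of the point above, not a description of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPolicy:
    """Hypothetical least-privilege policy for a single agent identity."""
    agent_id: str
    allowed_actions: set[str] = field(default_factory=set)
    allowed_resources: set[str] = field(default_factory=set)


@dataclass
class AccessDecision:
    allowed: bool
    reason: str


def authorize(
    policy: AgentPolicy,
    action: str,
    resource: str,
    audit_log: list[tuple[str, str, str, bool]],
) -> AccessDecision:
    """Deny by default; allow only actions and resources the policy names."""
    if action not in policy.allowed_actions:
        decision = AccessDecision(False, f"action '{action}' not granted")
    elif resource not in policy.allowed_resources:
        decision = AccessDecision(False, f"resource '{resource}' out of scope")
    else:
        decision = AccessDecision(True, "within policy")
    # Every decision is logged, allowed or not, so access can be monitored.
    audit_log.append((policy.agent_id, action, resource, decision.allowed))
    return decision


# Illustrative data only: a review agent scoped to one repository.
policy = AgentPolicy(
    agent_id="code-review-agent",
    allowed_actions={"read", "comment"},
    allowed_resources={"repo:payments-api"},
)
audit_log: list[tuple[str, str, str, bool]] = []

read_ok = authorize(policy, "read", "repo:payments-api", audit_log)
write_denied = authorize(policy, "write", "repo:payments-api", audit_log)
scope_denied = authorize(policy, "read", "vault:prod-secrets", audit_log)
```

Keeping the allowlist narrow is what limits the blast radius: an agent that can only read and comment on one repository has little to offer an attacker who hijacks it.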

"Successful Companies Are Breaking Silos"

The trend that kept coming up in conversations was that successful companies have moved away from marketing individual features in isolation and instead tell one clear story about the real problem the product solves.

The value is not that a company has a long list of capabilities, but that those capabilities work together in a way customers can understand and trust. That could mean framing the platform around a unified security narrative across detection, remediation, and governance, instead of pitching each function as its own standalone tool.

In practice, the message becomes simpler and stronger: good solutions help organizations reduce exposure, end-to-end, not just buy more disconnected security features.

"The NHI And Agentic AI Space Is Growing Fast, With Loads Of Innovation Happening"

The non-human identity and agentic AI space is moving quickly, with a flood of new vendors, new language, and bold claims entering the market all at once. Most prospects already sense this is not a distant issue but a real operational and security challenge they will need to address soon, which is why they are actively trying to understand the category now.

At the same time, the market still feels early. Definitions are loose, product boundaries are blurry, and there is no clear consensus yet on which approaches will matter most over time. That tension is what makes the space so interesting and so difficult, because the demand is real even as the category's long-term shape is still being formed.

The Attacks Keep Evolving In Real Time During RSAC

It is worth noting that we also saw a very large-scale supply chain attack happen while we were trying to focus on networking and connecting as a community. The TeamPCP attacks moved quickly from Trivy into LiteLLM and other targets, reusing the same infostealer playbook to go after the places secrets actually live: CI/CD runners, developer machines, cloud credentials, SSH keys, and local configs.

Our analysis, which happened in parallel with our team being at the event, showed it was not really about the packages themselves so much as the credentials and automation paths behind them. The blast radius continues to spread across repositories and downstream dependencies. When secrets are exposed, the damage does not remain contained to a single package or repo.

While the LiteLLM attack was still unfolding, we published practical response guidance, shared indicators of compromise, outlined how teams could check developer endpoints and CI/CD systems, and released a dedicated local scanning script that worked with ggshield to help affected organizations identify exposed secrets and start remediation faster. Even in the middle of a live event, we helped users get visibility fast, build a real inventory of compromised secrets, prioritize by criticality and validity, and move from detection to revocation or rotation before the attacker could turn stolen access into something worse.
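The "prioritize by criticality and validity" step can be sketched as a simple sort over an inventory. This is not the scanning script mentioned above and does not use ggshield's API. The `ExposedSecret` fields, the 1 to 3 criticality scale, and the sample entries are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExposedSecret:
    """Hypothetical inventory record for one exposed secret."""
    identifier: str
    location: str
    is_valid: bool    # still accepted by the issuing service
    criticality: int  # assumed scale: 1 (low) to 3 (high)


def prioritize(secrets: list[ExposedSecret]) -> list[ExposedSecret]:
    """Order secrets for rotation: valid ones first, then by criticality."""
    return sorted(
        secrets,
        key=lambda s: (not s.is_valid, -s.criticality, s.identifier),
    )


# Illustrative inventory only; none of these are real findings.
inventory = [
    ExposedSecret("old-test-key", "local config", False, 1),
    ExposedSecret("ssh-key-staging", "developer machine", True, 2),
    ExposedSecret("ci-deploy-token", "CI/CD runner", True, 3),
    ExposedSecret("revoked-prod-key", "cloud credentials", False, 3),
    ExposedSecret("cloud-admin-key", "developer machine", True, 3),
]

rotation_queue = [s.identifier for s in prioritize(inventory)]
```

Sorting valid secrets ahead of invalid ones reflects the idea that a still-working credential is the one an attacker can use right now, so it should be revoked or rotated first.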

A Good Week For Good Questions

The best conference takeaways usually leave you with a slightly better vocabulary for the work waiting back home. That is what this week did for many, including your author. There was one particularly powerful allegory that stood out from a fireside chat on "Supply Chains and the Theory of Constraints in AI," from Alan Shimel, CEO, Techstrong, Damon Edwards, Co-Founder and CEO, Attestify, and Joshua Corman, Founder, I Am The Cavalry.

The story involves three stonecutters, each asked the same question. The first was asked, "What do you do?" They looked up and said, "I am paid to cut stones in half, and that is what I do."

They asked the second stonecutter, and they replied, "I am a craftsman with amazing tools. I can make the smoothest curves, and I can carve the most intricate designs. I am very good at cutting stones, and I am proud to do it."

When the third stonecutter was asked what they were doing, they looked up, smiled, and excitedly said: "I am building a cathedral!"

So much of our conversation about AI right now sounds like the second stonecutter. We need to focus on what we are building with it, not on how advanced our tools are.

Attackers are gaining leverage through trusted systems. Security has to become more direct about intent, validation, identity, and control. That is a demanding agenda, though it is at least clear. Teams that handle it well will probably look less impressed by surface novelty and more focused on the fundamentals that keep systems governable at speed.

Conferences are good at generating themes. The stronger ones also sharpen priorities. This year’s priority seemed plain enough: build systems that can move quickly without becoming vague about trust. That applies to code, agents, integrations, accounts, and every other mechanism that now acts with increasing independence. The work ahead is not mysterious. It is exacting, which is often the more useful thing.