Lapsus$ has continued its prolific pace of breaches, now leaking internal source code from 250 Microsoft projects, which the group claims includes 90% of the source code for Bing and 45% of the source code for Bing Maps and Cortana. This comes after the group publicly leaked source code from Samsung and Nvidia and claimed to have breached Vodafone, Okta, Ubisoft, and Mercado Libre.

While Lapsus$ also targets admin accounts, we know the group focuses heavily on companies' private source code. This source code is valuable not only for discovering vulnerabilities in the applications, but also because it frequently contains sensitive information such as secrets.

How is Lapsus$ gaining access to internal source code?

While it is not certain how Lapsus$ gained initial access, Microsoft stated in its own post on the group that it has observed several techniques being used:

- Deploying the malicious Redline password stealer to obtain passwords and session tokens

- Purchasing credentials and session tokens from criminal underground forums

- Paying employees at targeted organizations (or suppliers/business partners) for access to credentials and MFA approval

- Searching public code repositories for exposed credentials

Source

Microsoft also said that the group (which it calls DEV-0537) has been using the personal accounts of employees who may have leaked corporate credentials, for instance in their personal public GitHub repositories.

"In some cases, DEV-0537 first targeted and compromised an individual’s personal or private (non-work-related) accounts giving them access to then look for additional credentials that could be used to gain access to corporate systems."

Source

This gives us a clearer understanding of how Lapsus$ is gaining access to organizations. In each case there is a clear link to secrets: they are either leaked publicly, purchased from someone who should not have access to them, or stolen using malicious tools.

Microsoft has claimed that the attack caused very limited damage, stating:

"No customer code or data was involved in the observed activities. Our investigation has found a single account had been compromised, granting limited access."

Source

Microsoft also stated that source code secrecy is not fundamental to its security approach:

"Microsoft does not rely on the secrecy of code as a security measure and viewing source code does not lead to elevation of risk."

Source

GitGuardian analysis of Microsoft's source code

After reviewing the leak, GitGuardian found that Microsoft's source code shows a clear focus on security. Nevertheless, it still contains a lot of sensitive information.

Following the Samsung source code leak, GitGuardian published an analysis of the number of secrets (API keys, security certificates, credentials, etc.) present inside the repositories. We have conducted the same analysis on the Microsoft source code.

In total, we found 376 secrets inside the leaked projects from Microsoft.

While this is still an alarming number of secrets to discover, it is approximately half of what we would expect to find in a comparable security-focused organization. This shows that Microsoft does have measures in place to keep sensitive information out of source code, but also that even in the most security-conscious companies, leaked secrets remain a significant problem.

GitGuardian has a policy of never validating whether keys from a public breach are currently active, so that we do not confuse the victim's forensic work to confirm whether keys have been used. We therefore cannot confirm which keys are currently active or were active at the time of the breach.

Types of secrets found

Most of the keys we reviewed showed the characteristics we would expect of secrets that are in use within an application, and we therefore believe many would have granted access to Microsoft's internal systems and infrastructure. How critical these systems are, and whether Microsoft's other security policies may have limited access, isn't known, but these are certainly valuable assets for Lapsus$.

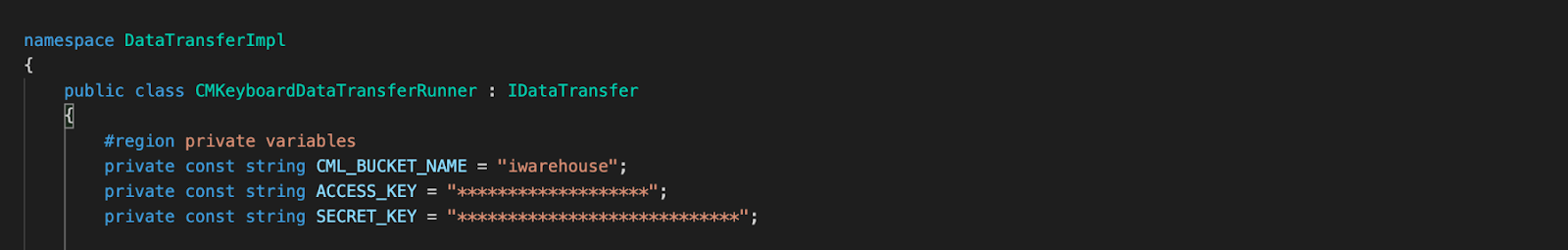

AWS keys

Five AWS IAM keys were discovered in the source code. Below is an example of a key uncovered in a third-party data transfer project.

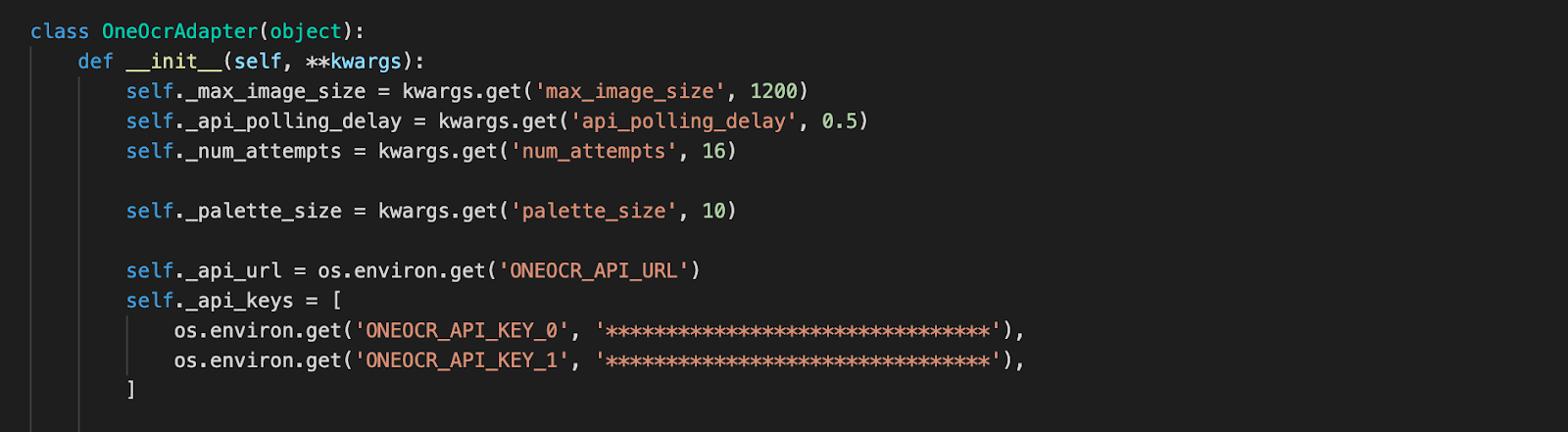
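To illustrate how keys like this can be spotted in code, here is a minimal Python sketch. It is not GitGuardian's detector. It assumes that long-term AWS access key IDs are 20 characters long and begin with `AKIA`, and the function name `find_aws_key_ids` is hypothetical. The sample value is the placeholder key ID used in AWS's own documentation, not a key from the leak.

```python
import re

# Assumption: long-term AWS access key IDs are 20 characters,
# starting with "AKIA" followed by 16 uppercase letters or digits.
AWS_ACCESS_KEY_ID = re.compile(r"\b(AKIA[0-9A-Z]{16})\b")


def find_aws_key_ids(text: str) -> list[str]:
    """Return candidate AWS access key IDs found in the given text."""
    return AWS_ACCESS_KEY_ID.findall(text)


# Placeholder key ID taken from AWS documentation examples.
sample = 'aws_access_key_id = "AKIAIOSFODNN7EXAMPLE"'
print(find_aws_key_ids(sample))
```

A pattern match like this only produces candidates. It says nothing about whether a key is valid or active.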

Azure Subscription Keys

An Azure subscription key was found in a Python file. Azure provides many different APIs, for example Bing search, translation, and speech recognition. These keys come with different levels of criticality, but even non-critical keys can still be used to block services and consume significant resources.

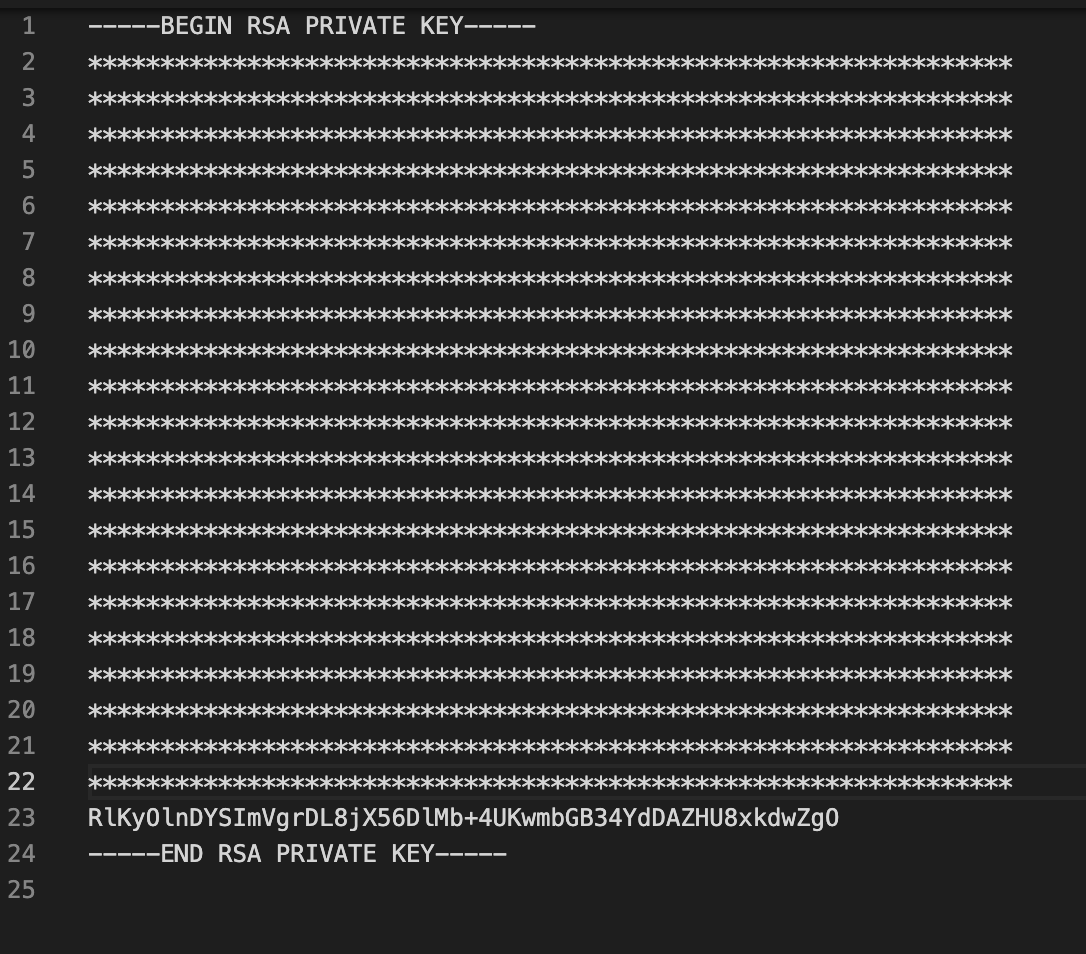

RSA private keys

Three RSA private keys were found, including one in a sensitive .pem file. These keys can be used to decrypt data or to forge the authenticity of data.
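Private keys stored in PEM format are wrapped in recognizable header and footer lines, which makes them comparatively easy to flag. The sketch below is an illustrative assumption rather than the detector used for this analysis. The name `contains_private_key` is hypothetical, and the key body in the sample is a placeholder string.

```python
import re

# Matches a PEM block whose header and footer mark it as a private key.
# The optional prefix covers common variants such as "RSA PRIVATE KEY".
PEM_PRIVATE_KEY = re.compile(
    r"-----BEGIN (?:RSA |EC |DSA |OPENSSH )?PRIVATE KEY-----"
    r".*?"
    r"-----END (?:RSA |EC |DSA |OPENSSH )?PRIVATE KEY-----",
    re.DOTALL,
)


def contains_private_key(text: str) -> bool:
    """Return True if the text contains a PEM-encoded private key block."""
    return PEM_PRIVATE_KEY.search(text) is not None


# Placeholder content only; this is not real key material.
sample = (
    "-----BEGIN RSA PRIVATE KEY-----\n"
    "PLACEHOLDER-NOT-A-REAL-KEY\n"
    "-----END RSA PRIVATE KEY-----\n"
)
print(contains_private_key(sample))
```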

Credential Pairs

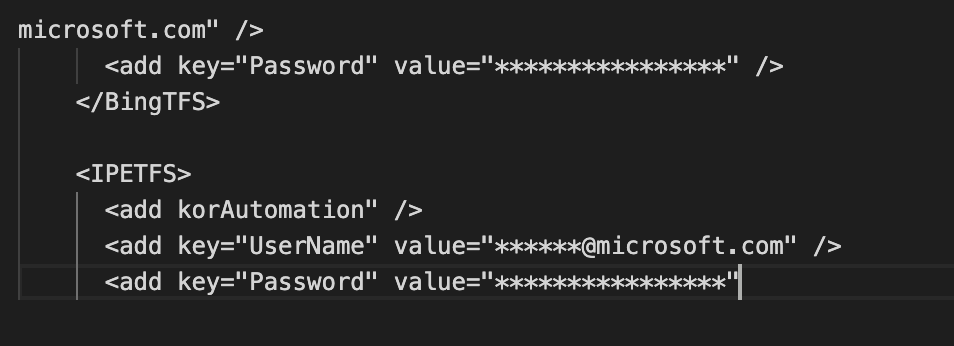

We also found many username/password pairs, such as this example found in an application configuration file. These credentials can be used with a variety of services, for example to access databases.

Why do sensitive files exist in source code?

Central source code repositories are accessible to a huge number of developers within organizations the size of Microsoft. Given this number of developers and the unforgiving nature of version control systems such as Git, where a committed secret remains in the history even after it is deleted from the current files, mistakes frequently occur where sensitive information is committed, despite this being universally agreed to be poor practice.
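To make the point about version control concrete, the sketch below scans patch text (the unified diff format produced by commands such as `git log -p`) for lines that were added and look like credential assignments. It is a simplified illustration under stated assumptions. The keyword list, the function name `secrets_added_in_patch`, and the sample patch are all hypothetical, and a real detector would need far more than one regular expression.

```python
import re

# Assumption: a secret looks like `<keyword> = "<value>"` or `<keyword>: <value>`.
SECRET_ASSIGNMENT = re.compile(
    r"\b(password|passwd|secret|api_key|token)\b\s*[:=]\s*['\"]?([^'\"\s]+)",
    re.IGNORECASE,
)


def secrets_added_in_patch(patch: str) -> list[tuple[str, str]]:
    """Return (keyword, value) pairs for secret-like lines added in a patch."""
    findings = []
    for line in patch.splitlines():
        # Added lines start with "+"; "+++" is the file header, not content.
        if line.startswith("+") and not line.startswith("+++"):
            match = SECRET_ASSIGNMENT.search(line[1:])
            if match:
                findings.append((match.group(1), match.group(2)))
    return findings


# Hypothetical history: one commit adds a password, a later one removes it.
history = """\
--- a/config.py
+++ b/config.py
@@ -1,1 +1,2 @@
 HOST = "db.internal.example"
+password = "placeholder-value"
--- a/config.py
+++ b/config.py
@@ -1,2 +1,1 @@
 HOST = "db.internal.example"
-password = "placeholder-value"
"""
print(secrets_added_in_patch(history))
```

In this example the latest version of the file no longer contains the password, but the earlier patch still does, so scanning only the current files would miss it.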

GitGuardian's State of Secrets Sprawl report highlighted what a challenge it is for application security engineers to manage leaked or sprawled secrets inside code repositories.

On average, in 2021, a typical company with 400 developers and 4 AppSec engineers would discover 1,050 unique secrets leaked upon scanning its repositories and commits. With each secret detected in 13 different places on average, the amount of work required for remediation far exceeds current AppSec teams' capabilities (1 AppSec engineer for 100 developers).

Source
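The scale of that workload is easier to see when the report's averages are multiplied out. The short calculation below uses only the figures quoted above. Splitting the work evenly across engineers is a simplifying assumption for illustration.

```python
# Figures quoted from the State of Secrets Sprawl report.
unique_secrets = 1050          # unique secrets found per typical company
occurrences_per_secret = 13    # average places each secret appears
appsec_engineers = 4           # AppSec engineers in that company

# Total secret occurrences that would need to be reviewed.
total_occurrences = unique_secrets * occurrences_per_secret

# Assumption: the workload is shared evenly across the AppSec team.
occurrences_per_engineer = total_occurrences / appsec_engineers

print(total_occurrences)         # 13650
print(occurrences_per_engineer)  # 3412.5
```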

While Microsoft's source code contains significantly fewer secrets than that of comparable organizations, this shows that even the most security-focused company, with significant resources for security, still has sensitive information in its source code.