Supply chain security has moved closer to the humans with hands on the keyboard.

For years, security teams have treated production systems, CI/CD pipelines, and identity infrastructure as the most sensitive parts of the software lifecycle. That is not wrong, but it is incomplete. The developer workstation belongs in that same conversation because it sits at the intersection of privilege, trust, and execution. It is where code is written, dependencies are installed, credentials accumulate, and trusted actions begin.

Modern supply chain attacks are increasingly designed to land on the developer machine first. They do not need to smash through the front gate of production if they can quietly collect the keys from the laptop that already has access to private repositories, package publishing workflows, cloud consoles, build systems, and internal tooling.

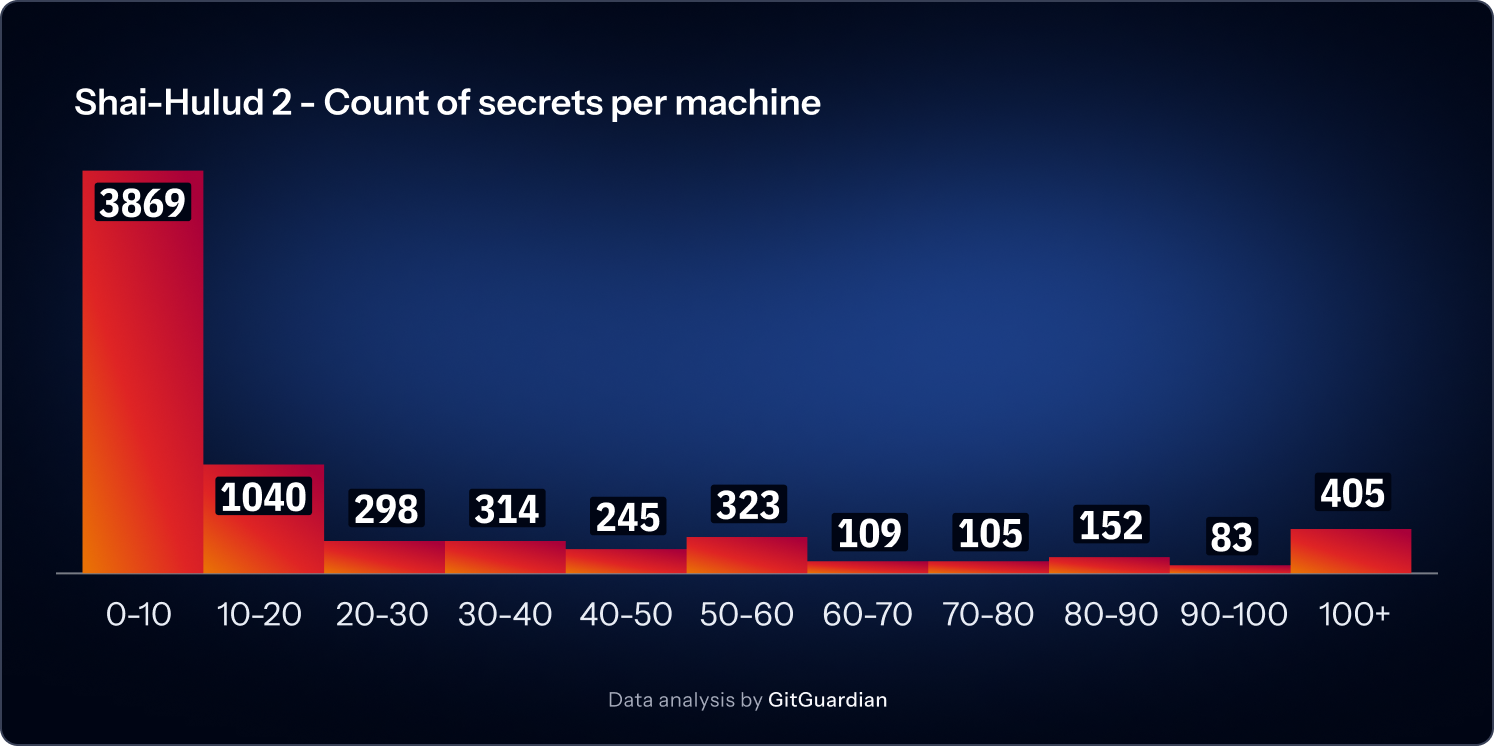

In 2025, campaigns such as Shai-Hulud showed publicly, for the first time, just how many credentials could be harvested from developer machines. In the 2026 State of Secrets Sprawl report, we found 33,185 unique secrets across the 6,943 machines compromised in that supply chain attack. At least 3,760 were still valid when we initially checked. Now a growing class of agentic AI attacks aimed at local credentials and developer context shows the same pattern. The shortest path to enterprise impact often starts with developer access.

Developers have always been attractive targets. What has changed is the speed, scale, and plausibility of the attack paths. Poisoned packages, malicious plugins, compromised updates, and AI-assisted local automation all make it easier for adversaries to reach into a workstation and search for anything useful. That search is usually not abstract. It is practical. It looks for API keys, cloud tokens, SSH material, npm credentials, GitHub tokens, secrets in environment variables, plaintext config files, `.env` files, shell history, logs, caches, and agent memory stores.

The perimeter has not disappeared. It has become easier to recognize. The real perimeter is wherever the most privileged identities can be reached and abused. In many organizations, this includes the developer machine.

Why developers should care

Most developers do not think of themselves as part of the security boundary. They write code, IT manages the laptop, and Security owns the policy. That division makes sense until an attacker uses a developer workstation as the easiest path into the systems that the developer already touches every day.

This is why workstation security should not and cannot be framed as a request for every developer to become a full-time security engineer. The practical goal is smaller and more useful. If you reduce the chance that your machine becomes the easiest place to steal high-value access, you are also reducing real risk for your organization.

That access is valuable for two reasons. First, developer systems often hold secrets and tokens with real privilege. Second, actions that originate from developer environments inherit trust. A package published from a maintainer machine, a commit signed with trusted credentials, a dependency update, a cloud login, or access to an internal support tool can all carry institutional trust that attackers would love to borrow.

That is why the workstation deserves the same level of scrutiny and controls we already give to production systems.

The immediate problem is plaintext secrets

When attacks land on a developer's machine, they do not need to perform magic. They need to find useful material quickly. Too often, that material is sitting in plaintext.

Secrets end up in source trees, local config files, tests, debug output, copied terminal commands, environment variables, shell profiles, AI tool configuration, and temporary scripts. They very commonly end up in `.env` files that were supposed to be local-only but quietly became permanent, if not shared. Convenience turns into residue, and residue becomes opportunity for attackers.

That is why one of the clearest next steps for developers is also one of the least glamorous: eliminate plaintext secrets from the workstation wherever possible.
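As a starting point for that cleanup, the sketch below walks a directory tree and flags lines in `.env`-style files that look like credentials. It is a minimal illustration, not a replacement for a dedicated secret scanner: the three regular expressions and the names `RULES`, `scan_file`, and `scan_tree` are assumptions made for this example, and real tools use far larger rule sets.

```python
import os
import re
from pathlib import Path

# Illustrative rules only (assumption): real scanners ship larger, tuned rule sets.
RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_token": re.compile(r"\bgh[pousr]_[A-Za-z0-9]{36,}\b"),
    "generic_assignment": re.compile(
        r"(?i)\b\w*(secret|token|password|api_key)\w*\s*=\s*\S+"
    ),
}


def scan_file(path: Path) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) pairs; the secret value is never returned."""
    findings = []
    try:
        text = path.read_text(errors="ignore")
    except OSError:
        return findings  # unreadable file: skip it rather than abort the sweep
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, rule_name))
                break  # one finding per line is enough to flag it
    return findings


def scan_tree(root: Path) -> dict[Path, list[tuple[int, str]]]:
    """Walk root and scan every file named .env or .env.<suffix>."""
    results = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name == ".env" or name.startswith(".env."):
                path = Path(dirpath) / name
                findings = scan_file(path)
                if findings:
                    results[path] = findings
    return results
```

Calling `scan_tree(Path.home() / "projects")` (the directory is a placeholder) returns a mapping from each flagged file to its suspicious line numbers. The sketch reports locations only, so the scan output does not become one more plaintext copy of the secrets it finds.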

Replace hardcoded credentials with calls to approved secret managers. Move local secrets into the system keychain or an enterprise-approved password manager. Encrypt files at rest when secrets must exist in files at all. Use tools such as SOPS where that workflow makes sense. Better yet, move away from shared static secrets entirely and adopt identity-based authentication wherever feasible.
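One way to picture the first of those steps: instead of a credential sitting in source or a config file, the program asks an approved tool for it at runtime. The sketch below assumes the secret manager exposes a command-line client that prints the secret to standard output. The function name `fetch_secret` and the `secret-cli` command in the usage note are hypothetical, so substitute whatever your organization has approved.

```python
import subprocess


def fetch_secret(command: list[str], timeout: float = 10.0) -> str:
    """Run an approved secret-manager CLI and return the value it prints.

    The command is passed as a list (no shell), so arguments are not
    re-interpreted by a shell and the value never lands in shell history.
    """
    result = subprocess.run(
        command,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # a non-zero exit raises CalledProcessError
    )
    secret = result.stdout.strip()
    if not secret:
        raise RuntimeError("secret manager returned an empty value")
    return secret
```

A call might look like `fetch_secret(["secret-cli", "read", "app/db-password"])`, where both the command name and the path are placeholders. The secret then lives only in process memory for as long as it is needed, rather than on disk next to the code.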

The goal is reducing the amount of value an attacker can extract from any successful foothold.

The hard truth about remediation

The most correct security answer here seems straightforward: reduce the use of plaintext secrets, move to stronger authentication, harden the workstation itself, and standardize approved tooling. Reducing dependency risk is another priority, and detecting abuse earlier gives security teams the audit trail they need.

None of this happens instantly.

Even good remediation plans take time because they touch real workflows. Changes require updates to training, tooling, access patterns, and team habits. Replacing a secret in code is easy on the surface. But replacing the workflow that caused ten copies of that secret to spread across laptops, config files, and build jobs is harder. Moving a team from local environment variables to a stronger secret retrieval model is possible, but it is not a same-day project. Moving from secret-based access to identity-based patterns takes longer still.

Attackers do not pause while those projects are underway.

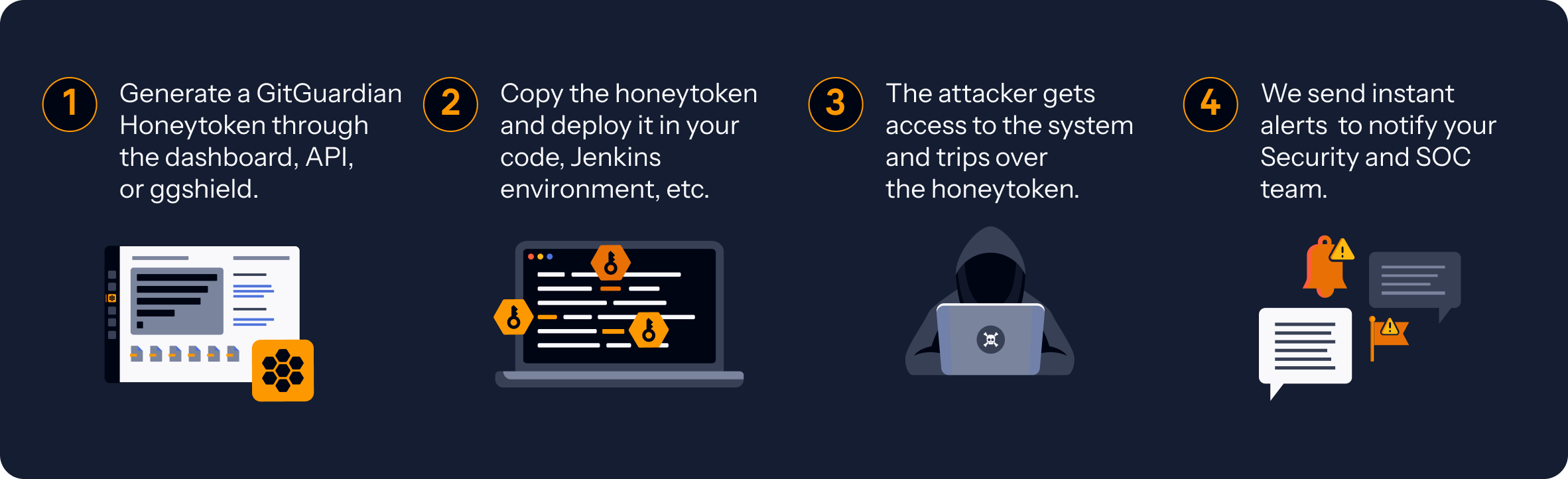

That gap between knowing the right long-term direction and living in today’s imperfect environment is where honeytokens make sense.

Why honeytokens belong on the developer workstation

Honeytokens are not the end state. They are the first compensating controls that help while cleanup is still in progress.

Honeytokens do not prevent workstation compromise. They do not replace hardening, secret elimination, or better authentication. What they do is give defenders a way to detect malicious secret harvesting as it happens.

A honeytoken is a decoy credential designed to generate an alert when someone tries to use it. On a developer machine, that makes it useful as a tripwire. If a poisoned dependency, a malicious plugin, or a compromised local tool begins sweeping through files and environment variables, looking for credentials to exfiltrate and replay, a well-placed honeytoken can surface that behavior before the attacker gets very far. Attackers very often validate harvested credentials automatically to filter out the ones that no longer work, and that validation attempt is exactly what triggers a honeytoken.

That early signal changes the response window. It can limit the blast radius. It can help incident responders identify the affected host, the likely access path, and the timing of the event. It can also create an auditable record of what happened and when, which is valuable during investigation and remediation.
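To make that concrete, the sketch below shows how an alert could be tied back to a host and a file path, assuming the team keeps a registry of where each decoy was placed. Everything here is hypothetical: the `Placement` record, the `triage_alert` function, and the shape of the alert event (`token_id`, `triggered_at`, `source_ip`) are invented for illustration and do not describe any particular honeytoken product.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Placement:
    """Where and when a decoy credential was planted (hypothetical record)."""
    host: str
    path: str
    placed_at: datetime


def triage_alert(event: dict, registry: dict[str, Placement]) -> Optional[dict]:
    """Join an alert event with the placement registry.

    Assumed event shape: {"token_id": str, "triggered_at": ISO 8601 str,
    "source_ip": str}. Returns None when the token is not in the registry.
    """
    placement = registry.get(event["token_id"])
    if placement is None:
        return None  # unknown token, or the registry is out of date
    triggered_at = datetime.fromisoformat(event["triggered_at"])
    return {
        "host": placement.host,          # the machine to investigate first
        "path": placement.path,          # the file the attacker likely read
        "source_ip": event.get("source_ip", "unknown"),
        "triggered_at": triggered_at,
        "time_since_placement": triggered_at - placement.placed_at,
    }
```

The value of the registry is that a single alert immediately answers the first responder questions: which machine, which file, and roughly when.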

For organizations dealing with supply chain attacks that target credentials first, that is not security theater. That is practical detection.

Placement matters more than enthusiasm

Honeytokens only work if they stay believable and private. Placement is critical.

A honeytoken should look like the kind of secret an attacker expects to find in the kind of place they are already searching. We can glean from Shai-Hulud where attackers look for secrets. The best workstation honeytokens blend into the legitimate local context and live in the files and locations that supply chain malware tends to inspect first, like any local `.env` files or paths like `~/.config/gh/config.yml` or `~/.aws/credentials`. Local config files, development directories, service-related settings, and environment variable paths are all obvious candidates. So are places where convenience has historically created risky habits.
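As an illustration of placement, the sketch below appends a decoy profile to an AWS-style credentials file without rewriting what is already there. It assumes the decoy key pair was issued by your honeytoken provider, since a random string would look plausible but would never raise an alert. The function name `add_decoy_profile` and the profile name in the usage note are hypothetical.

```python
import configparser
import os
from pathlib import Path


def add_decoy_profile(
    credentials_path: Path,
    profile: str,
    access_key_id: str,
    secret_access_key: str,
) -> None:
    """Append a decoy profile to an INI-style credentials file."""
    credentials_path.parent.mkdir(parents=True, exist_ok=True)

    # Parse the existing file only to check for a name clash.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read(credentials_path)  # a missing file is silently skipped
    if parser.has_section(profile):
        raise ValueError(f"profile {profile!r} already exists")

    # Append instead of rewriting, so existing profiles and comments survive.
    block = (
        f"\n[{profile}]\n"
        f"aws_access_key_id = {access_key_id}\n"
        f"aws_secret_access_key = {secret_access_key}\n"
    )
    with open(credentials_path, "a", encoding="utf-8") as handle:
        handle.write(block)

    # Owner read/write only, matching how real credentials files are usually kept.
    os.chmod(credentials_path, 0o600)
```

A call such as `add_decoy_profile(Path.home() / ".aws" / "credentials", "billing-prod", key_id, secret)` plants the decoy under a believable profile name. Choosing a name that fits the team's real naming habits matters more than the code itself.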

Environment variables deserve special attention here. Developers often treat them as safer than files because they feel transient. In practice, they spread. They persist in shell history, child processes, debug output, terminal multiplexers, launch configs, and tool integrations. If it is in your environment, it is often more portable and more visible than people assume.
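The inheritance part of that spread is easy to demonstrate. In the sketch below, a child process sees a variable set in the parent unless the parent passes a filtered environment. The marker list and the helper names `scrubbed_env` and `child_output` are assumptions for this example, and filtering by name is a coarse heuristic rather than a guarantee.

```python
import os
import subprocess
import sys
from typing import Mapping, Optional

# Name fragments treated as sensitive (assumption: adjust to your own conventions).
SENSITIVE_MARKERS = ("SECRET", "TOKEN", "PASSWORD", "API_KEY")


def scrubbed_env(env: Optional[Mapping[str, str]] = None) -> dict[str, str]:
    """Copy an environment, dropping variables whose names look sensitive."""
    source = os.environ if env is None else env
    return {
        name: value
        for name, value in source.items()
        if not any(marker in name.upper() for marker in SENSITIVE_MARKERS)
    }


def child_output(code: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Run a Python snippet in a child process and return its stdout.

    With env=None the child inherits the parent's full environment,
    which is the default behavior of subprocess.run.
    """
    result = subprocess.run(
        [sys.executable, "-c", code],
        env=env,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()
```

If the parent sets `DEMO_API_TOKEN` and then runs a child that prints `os.environ.get("DEMO_API_TOKEN", "absent")`, the child prints the token by default and prints `absent` when launched with `env=scrubbed_env()`. Every build script, test runner, and plugin started from a shell gets the same inherited view unless something filters it.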

A private honeytoken placed in those realistic paths can do its job quietly while real secrets are being removed from the system.

Start with what an individual developer can do

One of the weaknesses in many workstation security conversations is that they blur the distinction between individual actions and organizational controls. That creates advice that sounds good but feels impossible to follow.

An individual developer cannot rewrite the package policy for the company, deploy endpoint tooling across the fleet, or migrate the entire organization to identity-based authentication alone. But they can make meaningful changes on their own machine today.

They can remove plaintext secrets from active project directories. They can stop using unapproved local storage for credentials. They can move secrets into the system keychain or approved managers. They can reduce reliance on long-lived environment variables.

Further, they can avoid random plugins, suspicious package installs, and unapproved agentic tooling. They can treat phishing, weird links, and convenience scripts with more skepticism. They can report strange package behavior instead of assuming the problem is isolated.

They can also install and maintain honeytokens as a tripwire while the rest of the cleanup continues.

Workstation security is partly organizational, but compromise often begins with local habits.

Then demand organizational support

Developers should not have to solve this alone, and realistically they cannot.

The enterprise has to do its part by making the secure path easier to follow. That means providing approved secret managers and clear local development patterns. It means publishing workstation baselines, endpoint protections, and package trust policies that actually reflect current threats. It means establishing cooldown periods for updates when appropriate, rather than normalizing instant adoption of whatever just dropped upstream. And it means sandboxing or otherwise isolating new code and unfamiliar tools before they are trusted with real access.
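The cooldown idea reduces to a simple date comparison. The sketch below assumes you already have each release's publication timestamp from your package registry's metadata. The function names `past_cooldown` and `newest_allowed`, and the seven-day window in the usage note, are illustrative choices rather than a recommendation.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def past_cooldown(
    published_at: datetime,
    cooldown: timedelta,
    now: Optional[datetime] = None,
) -> bool:
    """True when a release has been public for at least the cooldown period.

    Timestamps are expected to be timezone-aware.
    """
    current = now if now is not None else datetime.now(timezone.utc)
    return current - published_at >= cooldown


def newest_allowed(
    releases: dict[str, datetime],
    cooldown: timedelta,
    now: Optional[datetime] = None,
) -> Optional[str]:
    """Pick the most recently published version that has cleared the cooldown.

    Ordering is by publication time, not by version number.
    """
    eligible = {
        version: published
        for version, published in releases.items()
        if past_cooldown(published, cooldown, now)
    }
    if not eligible:
        return None
    return max(eligible, key=lambda version: eligible[version])
```

With `cooldown=timedelta(days=7)`, a release published two days ago is skipped in favor of the previous one. The delay gives the wider ecosystem time to notice and pull a poisoned release before it reaches a workstation.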

We must create and test response playbooks for when honeytokens fire, because a detection without a plan can still turn into a mess.

There is also a new policy line that many teams should draw clearly: do not install agentic systems on work machines without explicit approval. That is especially important when those tools can read local context, inspect repositories, access credentials, or run broad local actions. The attack surface is not only the model. It is the plugin ecosystem, the automation layer, the permissions, and the assumptions about trust.

Some teams may even benefit from local warnings or command aliases that remind users when they are about to invoke unapproved tooling. If trying to invoke `openclaw` instead sends off a warning or a honeytoken, even better.
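A local warning of that kind could be as small as a shim script installed under the tool's name, earlier on `PATH` than any real binary. The sketch below is one hypothetical shape for it: the `UNAPPROVED` set, the `guard` function, and the wording of the message are all assumptions, and a fuller version could also notify the security team.

```python
import sys
from pathlib import Path
from typing import Iterable, TextIO

# Tools that need explicit approval (assumption: maintained by your security team).
UNAPPROVED = frozenset({"openclaw"})


def guard(
    argv: list[str],
    unapproved: Iterable[str] = UNAPPROVED,
    stream: TextIO = sys.stderr,
) -> int:
    """Return an exit code: 1 with a warning for unapproved tools, otherwise 0.

    argv[0] is the name the shim was invoked as, so one script can be
    linked under several tool names.
    """
    tool = Path(argv[0]).name
    if tool in set(unapproved):
        print(
            f"warning: '{tool}' is not approved for use on this machine. "
            "Request approval before installing or running it.",
            file=stream,
        )
        return 1
    return 0
```

A shim file would end with `sys.exit(guard(sys.argv))`. The point is a visible reminder at the moment of use, not an enforcement boundary, because anyone with local access can bypass a `PATH` shim.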

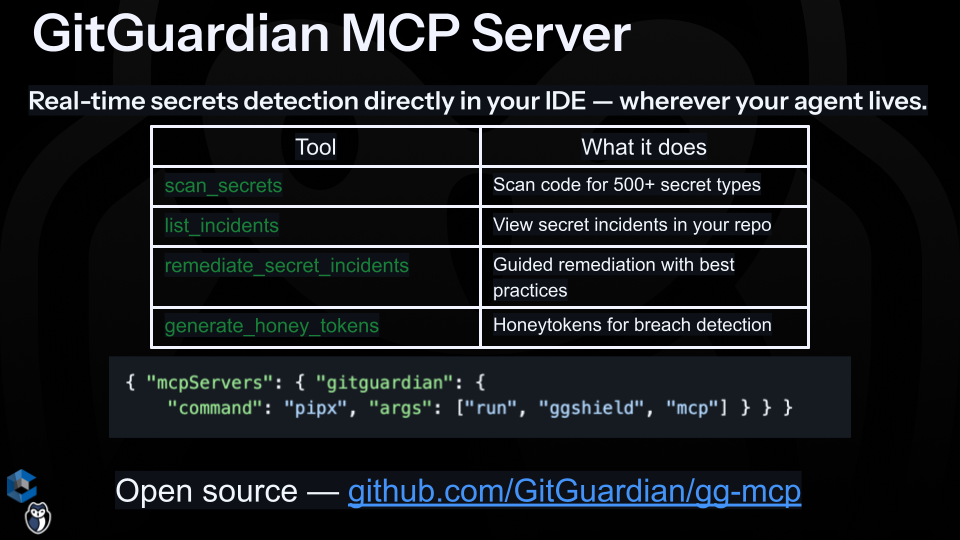

If the environment allows it, organizations can also add security-aware MCP and IAM tooling to local assistants to help with remediation workflows, policy checks, and honeytoken placement. That can make the defensive path more practical without pretending automation removes the need for judgment.

Secrets are the first target, not the only target

Admittedly, secrets are not the whole story of workstation risk.

Attackers may also want browser session material, package publishing rights, signed commit workflows, access to internal knowledge, SSH agent forwarding, build context, or any local state that helps them pivot. But secrets remain one of the most portable, reusable, and operationally useful prizes. That makes them the best first cleanup target and the best place to reduce attacker value quickly.

If an attacker lands on a developer machine and finds nothing useful in plaintext, the possible damage narrows. The incident may still be serious, but it becomes harder to convert local execution into durable enterprise access.

That is the security value of secret elimination. It does not promise perfection. It reduces attacker leverage.

Treat developer machines like they matter, because they do

The agentic AI era has amplified workstation risk.

Developers now work in environments that combine trusted execution, local automation, sprawling dependency chains, and high-value access in one place. Attackers know that they no longer need physical access to a laptop or a dramatic break-in story. Sometimes all they need is an update, an altered package or plugin, or a workflow that slips through trust assumptions and starts looking for credentials.

Developer workstations deserve the same discipline we already apply to pipelines and production infrastructure: eliminate plaintext secrets, move toward stronger identity-based patterns, and be careful with updates, plugins, and local automation. And while all of that longer work is underway, install honeytokens where attackers are most likely to look.

That is not the whole strategy. It is the first good move.

For teams trying to reduce risk now, that is often the difference between discovering an attack after the damage is done and catching it while it is still unfolding.