Last week we had a global overview of the most recent advances in security in the Software Development Life Cycle and maybe you started to appreciate how this cycle can be accelerated by embedding security on a multitude of projects and teams thanks to powerful tools at our disposal.

We are now going to see how this applies to maybe the most fundamental technology behind the DevOps cycle: the CI pipeline.

Then we will address security in deployments’ orchestration, and finally, we’ll get to maintenance and incident response.

3.2 - Continuous Integration Security

This might be neglected sometimes, but securities around the CI system are something to think about; and if not set up correctly, it could cause data leaks.

Like repository access or cloud access, the access to the CI system also needs to be controlled. If the whole company is sharing one same CI system, you probably don't want everybody to build everything, or even see everything. When teams and team members grow, manual user administration in the CI system is yet another toil that slows down your SDLC.

Luckily, there are ways to reduce this overhead. For example, you might be able to integrate your CI with your Git or company SSO for authentication. For authorization, you may rely on the groups' information provided by those SSO services. For another example, considering another CI built with this issue in mind would alleviate this issue, too. If you use GitHub Actions as your CI, you wouldn't need to worry about the permissions of the CI - it simply follows the repo permission.

Another issue regarding security on CI that needs to be addressed is secrets. More often than not, the CI isn't just going to run some unit tests and call it a day. You probably would need to retrieve some credentials to interact with some other components, like secrets, to push to a docker image registry, for example.

Modern CIs can store secrets on a project level. Once configured, you can use it directly in the build without putting the sensitive data in clear text format. Even if you try to print the variable containing sensitive data, it will not show up (things were not the case a few years ago in some CI tools).

Regarding where to store the values of the secrets, maybe in the old monolith time, since there is only one team, one repo, a few secrets, people might store it somewhere and let others know by messaging and stuff. Again, this wouldn't scale with several product teams with tens or even hundreds of pipelines, each having its own set of secrets. There are quite a few automated solutions for this.

For example, you can store your secrets directly in a Kubernetes cluster and use Kubernetes Credentials Provider for Jenkins. For another example, you can use the HashiCorp Vault Plugin for Jenkins to use secrets directly from a Jenkins pipeline.

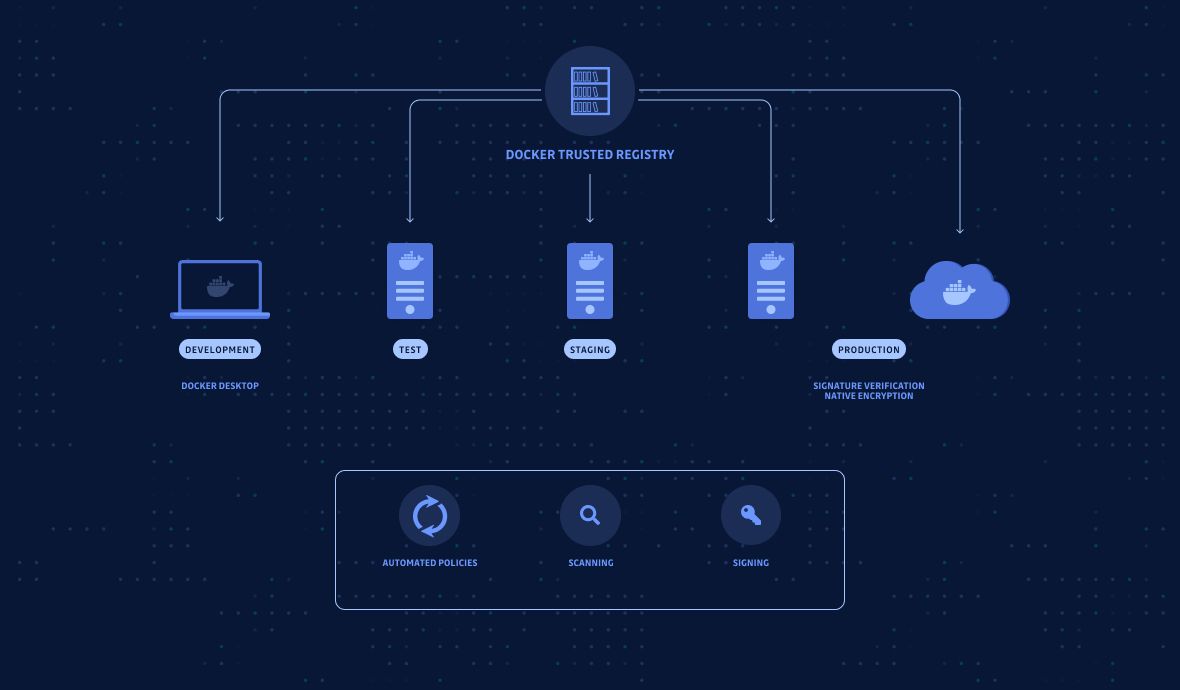

3.3 - Container Image Security

The modern infrastructure is said to be immutable because its building blocks are being continuously replaced rather than changed in-place. One of the pillars of this architecture is the container technology, most famously the one developed by Docker.

While containers have simplified the deployment, scaling, and failover by quite a significant margin, they bring challenges. If the image has some known vulnerabilities, your containers could be exploited, and the integrity of the whole machine or even the entire system is compromised. For container security, GitGuardian have an upcoming cheat sheet on best practices. Stay tuned...

An automated mechanism is most certainly needed to ensure the images we build don't contain vulnerabilities. By implementing such a mechanism, you can significantly reduce your operational overhead. No more managing machines (be it physical or virtual), no more configuration management, no more patching, everything automated, less toil - all means your SDLC is not only more secure but also faster.

There are quite a few tools out there doing this, some of which are CLI-based, which means they can easily be integrated with your CI pipelines before pushing the image to an image registry. For example, I personally quite like the Trivy tool developed by Aqua Security.

4 - Integration / Deploy

Back in the days of a monolith setup, when it came to deploying, people would typically log onto the machine, download the latest version, restart, then move on to the next machine (if it's an HA setup). Maybe this was done in an automated method with the help of some configuration management tools.

This method doesn't scale that well, especially under critical situations when you have limited response time, and you have the overhead of maintaining the state of all the resources you have. That's why VMs with autoscaling then Kubernetes (container orchestration) became more and more popular.

Complicated systems, especially Kubernetes, bring security challenges too, of which the biggest are secrets.

4.1 - Secrets in Kubernetes

In a traditional in-place deployment in physical or virtual machines, the secrets needed by the applications were most likely handled by the configuration management system.

For example, if you use Ansible for the job, you could encrypt the secrets with the Ansible vault and store them in the repository. Since the Ansible vault is encrypted, you have a safe place to store those secrets as a single source of truth.

With Kubernetes, things are trickier.

You can't define secrets in YAML files and store them in git repos. That's the worst thing you can do. You may use Kubernetes' secrets as the single source of truth, but there are secrets created in the first place by people who don't need access to Kubernetes.

This is where a secrets manager could help. A secrets manager acts as a single source of truth to store your sensitive data. Secret managers are usually much easier to use than, for example, manually editing YAML resources in Kubernetes. They have beautiful UI as well because humans are meant to interact with them (easier than kubectl).

There are quite a few ways to integrate and automate the secrets part.

For example, you can develop a code that calls the manager's API, fetch secrets, then store it as K8s secrets with the Kubernetes API. You can even use external secrets to keep the secrets directly in the secrets manager. With sidecar injectors, it can render secrets to a shared memory volume. And with CSI (Container Storage Interface), you could employ ephemeral volumes to store secrets. At last, you can even use secrets manager APIs to retrieve secrets directly via network requests within your app.

For more details on secrets manager, see these two articles below:

- An Introduction to Secret Manager

- Kubernetes Secrets Management Challenges and Integration with Secret Managers

5 - Maintenance

5.1 - Incident Response Playbook

Playbooks are a crucial component of DevOps / DevSecOps / security.

When there is only one product and one component to maintain, there are fewer team members, there is less type of errors (at least you don't have inter-component or inter-system issues); and thanks to fewer team members and limited scope of issues, learning and sharing within the team isn't such a big problem.

As the product evolves, you put more people into the team, create more teams, create more components, and even more systems, and the interactions between people and between systems become more and more complex.

I was once in a project where I was doing the on-call rotation. An incident happened in the middle of the night, but it was out of my control. We kept adding more people into the call, but it changed nothing because they didn't know. In the end, the sky had almost become bright before we hung up the call.

When an online incident happens, it's very likely that not everybody is on the same page. Not everyone knows exactly what to do. Not everyone knows where to get the required information and who to call in the middle of the night to fix this issue quickly. People all have good intentions, wanting to resolve the issue ASAP, but good intentions again fail us; what we need is a mechanism.

An incident response playbook wasn't invented out of the blue; instead, it's a set of generalized and summarized processes (after too many incidents) so that we can have a consistent way of handling issues for quicker response and resolving. Learning from every incident is also part of the playbook, whether it's a security issue or a new vulnerability.

The content can include everything from runbooks and checklists to templates, training exercises, security attack scenarios, and simulation drills. The goal is simple: having a set of policies, processes, and practices for quickly responding to and resolving unplanned outages, thus helping teams fix online issues quicker and reduce the size of the circle of impact.

Summary

Although DevSecOps is created by "adding" security into DevOps, as we can see, adding more, in this case, doesn't slow down the SDLC but instead accelerates it. , There are more topics I wanted to cover but due to the already lengthy article I couldn't (for example, container runtime security platform, Kubernetes pod security policy, etc.).

Hopefully, I will get on them later. If you like this article, please share and subscribe to the blog!

This article is a guest post. Views and opinions expressed in this publication are solely those of the author and do not reflect the official policy, position, or views of GitGuardian, The content is provided for informational purposes, GitGuardian assumes no responsibility for any errors, omissions, or outcomes resulting from the use of this information. Should you have any enquiry with regard to the content, please contact the author directly.