Docker containers have been an essential part of the developer's toolbox for several years now. They allow developers to build, distribute, and deploy their applications in a standardized way.

However, this widespread adoption brings about significant security challenges. With containers becoming a potential attack surface, it's imperative to adopt robust security measures.

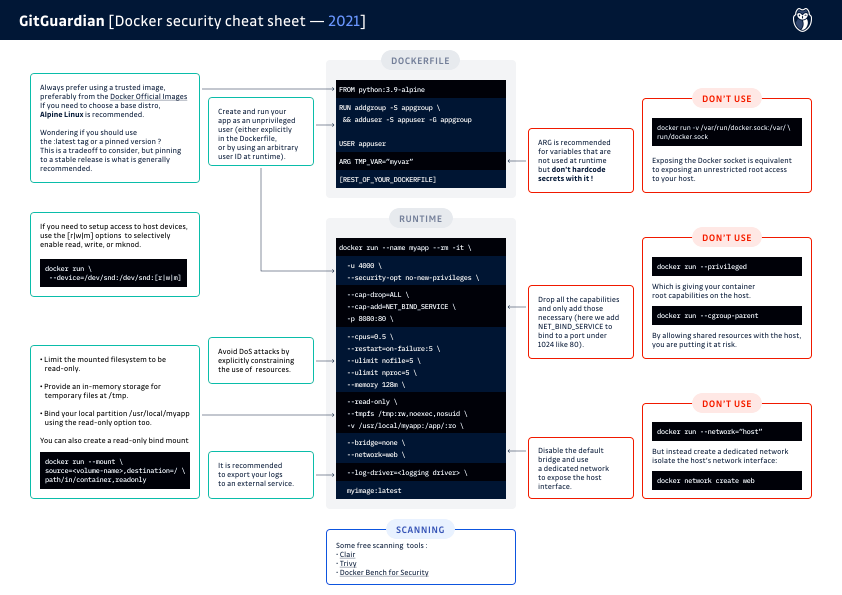

In this guide, we'll explore Docker Security Best Practices, ensuring your deployments are secure and resilient against threats. Plus, we've included a comprehensive cheat sheet to help you quickly implement these practices.


Understanding Docker Security Fundamentals

Docker secures applications by encapsulating them within containers, but this isolation isn't foolproof. Misconfigurations and misconceptions around container isolation can lead to vulnerabilities. It's crucial to understand that container security is built on the supporting infrastructure, software components, and runtime configurations.

The key here is that containers don’t have any security dimension by default. Their security completely depends on:

- the supporting infrastructure (OS and platform)

- their embedded software components

- their runtime configuration

Container security is a broad topic, but the good news is that many best practices are low-hanging fruit that quickly reduce the attack surface of your deployments.

Top Docker Security Best Practices

Image Security

Use Trusted Images

Ensure you're using images from trusted sources, like Docker Official Images. Alpine Linux is a recommended base due to its minimalistic nature.

Example:

docker pull python:3.9-alpine

Unprivileged User

By default, the process inside a container is run as root (id=0). Run containers with a non-root user to limit access levels.

Example:

FROM base_image

RUN addgroup -S appgroup && adduser -S appuser -G appgroup

USER appuser

User ID Namespace

Segregate namespaces to prevent container privilege escalation from affecting the host.

By default, the Docker daemon uses the host's user ID namespace. As a result, any successful privilege escalation inside a container would also grant root access to both the host and other containers.

To mitigate this risk, configure your host and the Docker daemon to use a separate namespace with the --userns-remap option.
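As a minimal sketch, remapping can also be switched on through the daemon configuration file rather than a command-line flag. The `userns-remap` key with the value `default` is the daemon.json counterpart of `--userns-remap`. The `DOCKER_CONFIG_DIR` variable and its scratch-directory default are assumptions made here so the snippet can be tried without touching a real host.

```shell
#!/bin/sh
# Write a daemon.json that turns on user namespace remapping.
# DOCKER_CONFIG_DIR is a hypothetical variable for this sketch. On a real
# host the file usually lives in /etc/docker, and the daemon must be
# restarted before the setting takes effect.
DOCKER_CONFIG_DIR="${DOCKER_CONFIG_DIR:-./docker-config-sketch}"
mkdir -p "$DOCKER_CONFIG_DIR"

# Note: this overwrites any existing daemon.json in that directory.
cat > "$DOCKER_CONFIG_DIR/daemon.json" <<'EOF'
{
  "userns-remap": "default"
}
EOF

echo "Wrote $DOCKER_CONFIG_DIR/daemon.json"
```

If a daemon.json already exists on your host, merge the key into it by hand instead of overwriting the file.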

Container Runtime Security

Forbid New Privileges

Your container should never run as privileged, as this would grant it all root capabilities on the host machine.

For enhanced security, it is recommended to explicitly forbid the addition of new privileges after container creation using this option: --security-opt=no-new-privileges.
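The option above could be applied as in the following sketch. The image name `myapp:latest` is a placeholder, and `--rm` is added only so the demo container cleans up after itself. The script prints the command instead of running it, so it can be reviewed first.

```shell
#!/bin/sh
# IMAGE is a placeholder; substitute the image you actually deploy.
IMAGE="${IMAGE:-myapp:latest}"

# Build the command in a function so it can be reviewed before it is run.
no_new_privs_cmd() {
  echo "docker run --rm --security-opt=no-new-privileges $IMAGE"
}

# Print the command; paste it into a shell on a host with Docker to run it.
no_new_privs_cmd
```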

Define Fine-grained Capabilities

By default, your containers run with a set of enabled capabilities, many of which you likely don't need.

It's recommended to explicitly specify required Linux capabilities, dropping unnecessary ones.

For example, a web server would probably only need the NET_BIND_SERVICE capability to bind to port 80.
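A minimal sketch of the web server case: drop every capability, then add back only NET_BIND_SERVICE. The image name and the published port are placeholders, and some images may need a few more capabilities than this to start, so treat the flag set as a starting point to test against your own workload.

```shell
#!/bin/sh
# IMAGE is a placeholder; substitute the image you actually deploy.
IMAGE="${IMAGE:-myapp:latest}"

# Start from zero capabilities and re-enable only what the service needs.
cap_flags() {
  echo "--cap-drop=ALL --cap-add=NET_BIND_SERVICE"
}

# Print the full command for review instead of executing it.
echo "docker run --rm $(cap_flags) -p 80:80 $IMAGE"
```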

Control Group Utilization

Limit CPU and memory usage using cgroups to prevent a single container from overwhelming host resources.

Example:

docker run --memory="400m" --cpus="0.5"

Control groups (cgroups) are the mechanism used to control access to CPU, memory, and disk I/O for each container. By default, a container is associated with a dedicated cgroup. If the --cgroup-parent option is present, however, the container may share resources with the host, which puts the host at risk of a denial-of-service (DoS) attack.

Host System Security

Sensitive Filesystem Parts

Avoid sharing sensitive parts of the host filesystem, such as root (/), device (/dev), process (/proc), and virtual (/sys) mount points.

If you need access to host devices, be careful to selectively enable the access options with the [r|w|m] flags (read, write, and use mknod).
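To illustrate the access flags, here is a small sketch that builds a `--device` argument and rejects anything other than a combination of `r`, `w`, and `m`. The helper name `device_flag`, the device paths, and the image name are all hypothetical.

```shell
#!/bin/sh
# IMAGE and the device paths below are placeholders for illustration.
IMAGE="${IMAGE:-myapp:latest}"

# Build a --device flag: host path, path inside the container, permissions.
# Permissions must be a non-empty combination of r (read), w (write), m (mknod).
device_flag() {
  host_dev="$1"
  container_dev="$2"
  perms="$3"
  case "$perms" in
    ''|*[!rwm]*)
      echo "invalid permissions '$perms' (use a combination of r, w, m)" >&2
      return 1
      ;;
  esac
  echo "--device=$host_dev:$container_dev:$perms"
}

# Read-only access to a single device; printed for review, not executed.
echo "docker run --rm $(device_flag /dev/sda /dev/xvdc r) $IMAGE"
```

Granting only `r` here means the container can read the device but cannot write to it or create device nodes.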

Container Filesystem Access

Run containers with a read-only root filesystem wherever possible, and handle sensitive data securely. Use a temporary filesystem for non-persistent data.

Example:

docker run --read-only --tmpfs /tmp:rw,noexec,nosuid

Persistent Storage

If you need to share data with the host filesystem or other containers, you have two options:

- Create a bind mount with limited usable disk space (--mount type=bind,o=size)

- Create a bind volume for a dedicated partition (--mount type=volume)

In either case, if the shared data doesn’t need to be modified by the container, use the read-only option.
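The read-only option can be expressed with the `readonly` key of `--mount`, as in this sketch. The helper name `ro_mount`, the paths, the volume name `appdata`, and the image name are placeholders.

```shell
#!/bin/sh
# IMAGE, the paths, and the volume name are placeholders for illustration.
IMAGE="${IMAGE:-myapp:latest}"

# Build a read-only --mount flag: mount type, source, target in the container.
ro_mount() {
  echo "--mount type=$1,source=$2,target=$3,readonly"
}

# A host directory and a named volume, both mounted read-only.
# The commands are printed for review rather than executed.
echo "docker run --rm $(ro_mount bind /srv/app/config /app/config) $IMAGE"
echo "docker run --rm $(ro_mount volume appdata /data) $IMAGE"
```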

Network Security

Docker Daemon Socket

The UNIX socket used by the Docker daemon should not be exposed: /var/run/docker.sock

Giving access to it is equivalent to giving unrestricted root access to your host.
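One simple way to catch an exposed socket early is to search deployment files for references to it. The sketch below assumes plain-text files such as Compose files or launch scripts. The helper name `find_socket_mounts` and the demo file contents are hypothetical.

```shell
#!/bin/sh
# Report every line in the given files that references the Docker socket.
# Exit status is 0 when at least one reference is found, non-zero otherwise.
find_socket_mounts() {
  grep -n 'docker\.sock' "$@"
}

# Demo input: a throwaway Compose-style file that mounts the socket.
demo_file="$(mktemp)"
cat > "$demo_file" <<'EOF'
services:
  agent:
    image: myapp:latest
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
EOF

if find_socket_mounts "$demo_file"; then
  echo "WARNING: the Docker socket is mounted into a container"
fi

rm -f "$demo_file"
```

A text search like this only finds literal references, so it complements rather than replaces a review of how containers are actually started.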

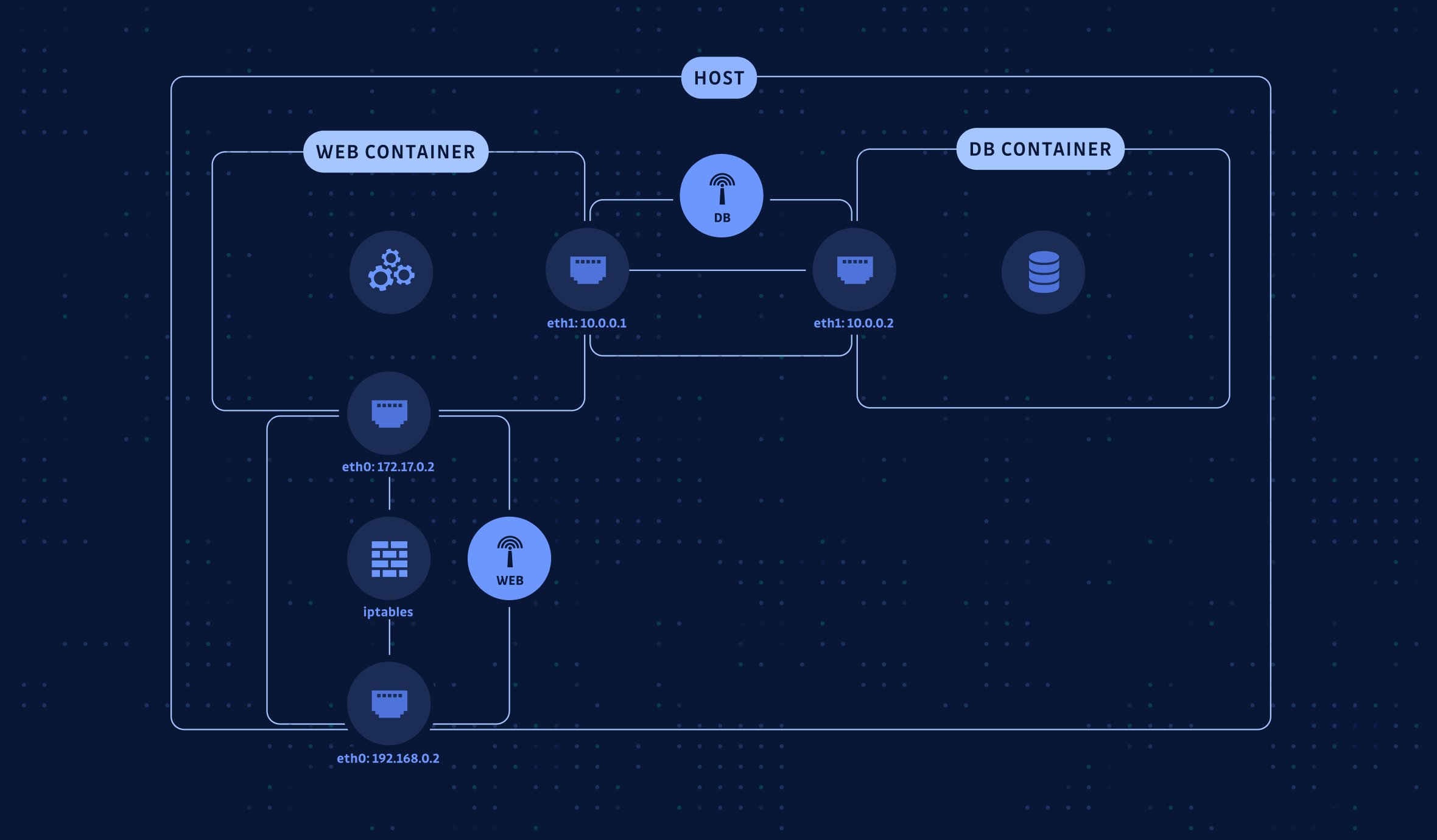

Custom Network Bridges

Avoid Docker's default docker0 network bridge; create custom networks for container isolation.

When a container is created, Docker connects it to the docker0 network by default. Therefore, all containers are connected to docker0 and are able to communicate with each other.

Example:

docker network create custom_network

docker run --network=custom_network

Here is a simple example: you have a container hosting a web server that needs to connect to a database running in another container.

The secure way to connect these two containers involves creating two bridge networks:

- A WEB bridge network to route incoming traffic from the host network interface.

- A DB bridge network used exclusively to connect the database and web containers, following network isolation best practices.

This approach ensures a more secure and isolated communication between the containers.
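The two-network layout described above could be set up roughly as follows. All names (`web_net`, `db_net`, `web`, `db`, `myapp:latest`, `mydb:latest`) are placeholders. Using `--internal` on the database network is an optional extra assumed here, which keeps that network from reaching the outside world. The script prints the plan rather than executing it.

```shell
#!/bin/sh
# Print the commands for a two-network layout. All names are placeholders.
network_plan() {
  cat <<'EOF'
docker network create web_net
docker network create --internal db_net
docker run -d --name db --network db_net mydb:latest
docker run -d --name web --network web_net -p 80:80 myapp:latest
docker network connect db_net web
EOF
}

# Review the plan, then run the lines on a host with Docker.
network_plan
```

Only the web container is attached to both networks, so the database is never reachable from the network that carries incoming traffic.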

Docker Security Scanning and Vulnerability Management

Comprehensive vulnerability scanning is crucial for keeping Docker containers secure. Container images often contain vulnerabilities in their base layers, dependencies, and application code that can be exploited if left unaddressed.

Establish a multi-layered scanning approach that includes:

- Base Image Scanning: Regularly scan base images for known CVEs using tools like Trivy, Snyk, or Clair. Schedule automated scans to detect newly disclosed vulnerabilities in your image registry.

- Dependency Analysis: Examine application dependencies and libraries within your containers. Many vulnerabilities originate from outdated or compromised third-party packages that developers unknowingly include.

- Runtime Monitoring: Deploy runtime security tools that can detect anomalous behavior and potential exploits in running containers. This provides defense-in-depth beyond static image analysis.

- Continuous Integration: Integrate vulnerability scanning into your CI/CD pipeline to catch security issues before deployment. Set security gates that prevent vulnerable images from reaching production environments.

Example implementation:

# Scan image before deployment

trivy image myapp:latest --severity HIGH,CRITICAL

# Fail build if critical vulnerabilities found

trivy image myapp:latest --exit-code 1 --severity CRITICAL

Regular vulnerability assessments ensure your Docker image security best practices remain effective against evolving threats.

Implementing Docker Security Tools

Numerous tools can aid in securing Docker environments:

- Vulnerability Scanners like Trivy or Snyk.

- Secret Scanning Tools like ggshield to detect hard-coded secrets.

Docker Secrets Management and Configuration Security

Proper secrets management represents one of the most critical Docker container security best practices, as exposed credentials can lead to complete system compromise. Traditional approaches like embedding secrets in environment variables or configuration files create significant security risks.

- Docker Secrets Service: Use Docker's built-in secrets management (available to Swarm services) for sensitive data like API keys, passwords, and certificates. Secrets are encrypted at rest and in transit, and are mounted into containers on an in-memory filesystem.

# Create and use Docker secret

echo "my_secret_password" | docker secret create db_password -

docker service create --secret db_password myapp:latest

- External Secret Management: Integrate with enterprise secret management solutions like HashiCorp Vault, AWS Secrets Manager, or Azure Key Vault. These provide centralized secret rotation, audit trails, and fine-grained access controls.

- Environment Variable Security: When environment variables are necessary, use init containers or sidecar patterns to fetch secrets at runtime rather than embedding them in images. Never include secrets in Dockerfiles or commit them to version control.

- Secret Scanning: Implement automated secret detection tools like GitGuardian's ggshield to scan container images and source code for accidentally exposed credentials. This prevents secrets from entering your container supply chain.

Effective secrets management ensures sensitive data remains protected throughout the container lifecycle while maintaining operational efficiency.
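Inside a Swarm service, a secret such as `db_password` appears as a file under `/run/secrets`. The sketch below shows how an entrypoint script might read it. The helper name `read_secret` and the `SECRETS_DIR` override are hypothetical and exist only to make the snippet easy to try outside a container.

```shell
#!/bin/sh
# SECRETS_DIR is a hypothetical override for this sketch; Swarm services
# find their secrets under /run/secrets.
SECRETS_DIR="${SECRETS_DIR:-/run/secrets}"

# Print the content of the named secret, or fail if it is not readable.
read_secret() {
  secret_file="$SECRETS_DIR/$1"
  if [ ! -r "$secret_file" ]; then
    echo "secret '$1' is not available" >&2
    return 1
  fi
  cat "$secret_file"
}

# Example use in an entrypoint: load the value without ever printing it.
if DB_PASSWORD="$(read_secret db_password)"; then
  echo "db_password loaded (${#DB_PASSWORD} characters)"
else
  echo "db_password missing; refusing to start" >&2
fi
```

Reading the file at startup keeps the value out of the image and out of the service definition. Command substitution also strips the trailing newline that `echo` adds when the secret is created.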

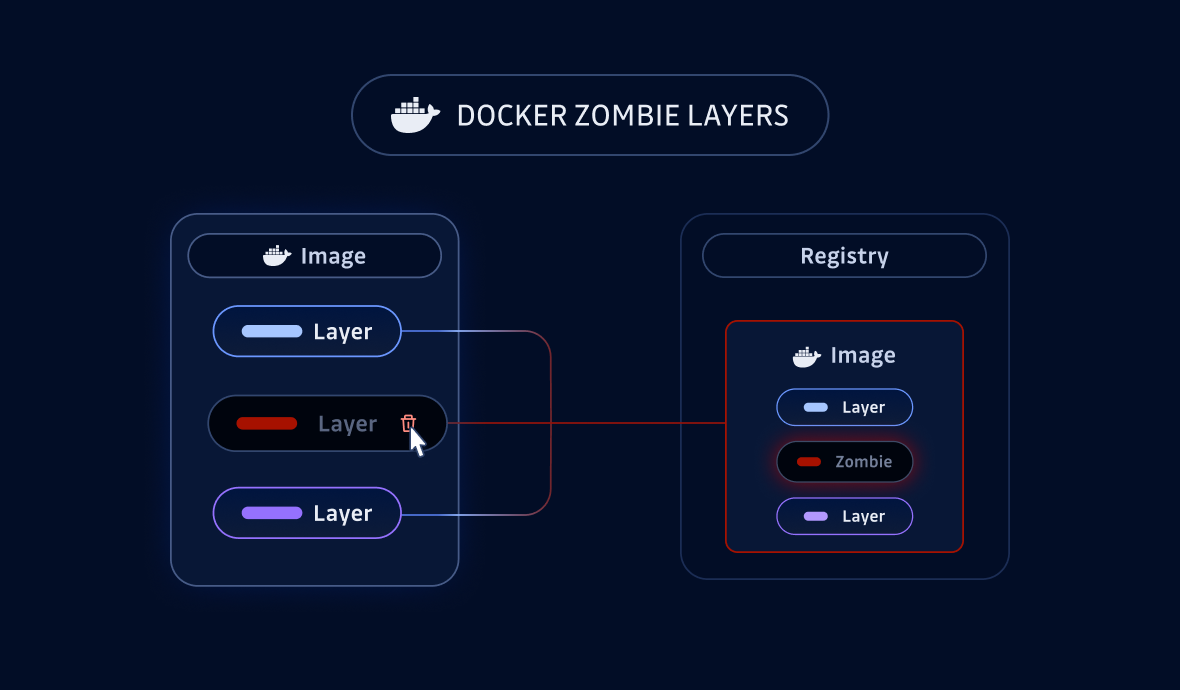

Docker Registry Security and Supply Chain Protection

Securing your container registry infrastructure is essential for comprehensive Docker security best practices, as compromised registries can distribute malicious images across your entire infrastructure. Registry security encompasses access controls, image integrity, and supply chain verification.

- Registry Access Controls: Implement role-based access control (RBAC) with least-privilege principles. Use strong authentication mechanisms like multi-factor authentication and service accounts with limited scopes for automated systems.

- Image Signing and Verification: Deploy Docker Content Trust or Notary to cryptographically sign images and verify their integrity. This ensures images haven't been tampered with during storage or transmission.

# Enable content trust

export DOCKER_CONTENT_TRUST=1

docker push myregistry.com/myapp:latest

- Registry Scanning: Configure your registry to automatically scan pushed images for vulnerabilities and malware. Implement admission controllers that prevent deployment of images failing security policies.

- Supply Chain Monitoring: Track image provenance and maintain software bills of materials (SBOMs) for all container components. Monitor for suspicious activities like unauthorized image modifications or unusual access patterns.

- Network Security: Secure registry communications using TLS encryption and restrict network access through firewalls or VPNs. Consider using private registries for sensitive applications rather than public repositories.

Robust registry security practices protect against supply chain attacks and ensure only verified, secure images reach your production environments.

Common Docker Security Pitfalls and How to Avoid Them

- Using Unverified Images: Always opt for official or verified images.

- Over-exposed Ports and Sockets: Validate the necessity of port exposures and avoid unnecessary permissions.

- Environment Variable Mismanagement: Store sensitive information in Docker secrets, and handle any environment variables that carry credentials securely.

Unfortunately, public Docker images often leak secrets. Did you know that 8.5% of Docker images expose API and private keys?

It is evident that scanning images for hidden secrets, whether they are self-created or supplied by third parties, has become crucial in protecting against supply chain attacks.

- Scan Dockerfile, build args and Docker image layers’ filesystem

- Integrate with your CI/CD pipelines

- Find 450+ types of secrets and sensitive files

FAQs

What are the most critical Docker security best practices for enterprise environments?

Key Docker security best practices include using trusted base images, running containers as non-root users, enforcing least privilege with capabilities, implementing vulnerability scanning in CI/CD pipelines, and securing secrets with dedicated management solutions. These measures significantly reduce attack surface and help maintain compliance in regulated or large-scale environments.

How should secrets be managed within Docker containers to prevent leaks?

Secrets should never be embedded in images or environment variables. Use Docker’s secrets management for encrypted, in-memory secret delivery, or integrate with external vaults like HashiCorp Vault or AWS Secrets Manager. Automated secret scanning tools such as GitGuardian ggshield should be used to detect accidental exposures during development and deployment.

How can vulnerability scanning be integrated into a Docker CI/CD pipeline?

Integrate image scanners like Trivy or Snyk into your CI/CD pipeline to automatically scan images for known vulnerabilities before deployment. Configure security gates to block builds with critical CVEs, and schedule regular scans of your image registry to catch newly disclosed vulnerabilities in existing images.

What steps can be taken to secure Docker registries and prevent supply chain attacks?

Secure registries by enforcing RBAC, enabling multi-factor authentication, and using signed images with Docker Content Trust. Implement automated scanning of pushed images, restrict network access, and maintain SBOMs for all images. Monitor for unauthorized modifications and use private registries for sensitive workloads.

Why is running Docker containers as non-root users recommended?

Running containers as non-root users limits the impact of a container compromise. By default, containers run as root, which can lead to privilege escalation on the host if exploited. Defining a non-root user in your Dockerfile and using user namespace remapping significantly reduces this risk.

How does network segmentation improve Docker container security?

Network segmentation using custom Docker networks isolates containers, reducing lateral movement in the event of a breach. Avoid the default docker0 bridge and create dedicated networks for different application tiers (e.g., web and database), ensuring only necessary communication paths are allowed.

What are common pitfalls to avoid when implementing Docker security best practices?

Common pitfalls include using unverified images, exposing sensitive ports or the Docker socket, mismanaging environment variables, and neglecting to scan for secrets or vulnerabilities. Regularly review configurations, automate security checks, and enforce least privilege to mitigate these risks.

Before you go

Enhance your container security strategy by trying out tools like ggshield for seamless secret detection. Join the 300,000+ developers who keep their secrets well protected.

Download the full Docker Security Cheat Sheet and give your containerization strategy a fortified edge.

Ensure your Docker environments are robust, secure, and prepared to handle the shifting threat landscape. Let's build secure applications together!
