In the first two parts of this tutorial, we discussed:

- How to enhance your Pod security in your Kubernetes cluster

- How to harden your Kubernetes network security

In this third and final part of the tutorial, we go over the authentication, authorization, logging, and auditing of a Kubernetes cluster. Specifically, we will demonstrate some of the best practices in AWS EKS. After reading this tutorial, you will be able to:

- create an AWS EKS cluster with Infrastructure as Code (IaC) using Terraform

- understand the best practices of creating an EKS cluster

- get a deeper understanding of service accounts

- set up IAM-based user authentication

- audit cluster access

If you are using another public cloud service provider, the terminology might differ, but the principles still apply. Without further ado, let's get right into it.

Creating a cluster using Infrastructure as Code with Terraform

We have created two Terraform modules that create the

- networking parts: VPC, subnets, internet gateway, NAT gateway, route tables, etc.

- Kubernetes cluster

If you want to give it a try yourself, simply run:

git clone https://github.com/IronCore864/k8s-security-demo.git

cd k8s-security-demo

git fetch origin pull/12/head

git checkout -b aws_eks FETCH_HEAD

# edit the config.tf and update the AWS region accordingly

# configure your aws_access_key_id and aws_secret_access_key

terraform init

terraform apply

To view the code more easily, read the pull request here.

AWS EKS Kubernetes Clusters Best Practices

Use a Dedicated IAM Role for Cluster creation

The IAM user or role used to create the cluster is automatically granted system:masters permissions in the cluster's RBAC configuration:

$ kubectl get clusterrolebinding cluster-admin -o yaml

# ...

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: cluster-admin

subjects:

- apiGroup: rbac.authorization.k8s.io

  kind: Group

  name: system:masters

# ...

$ kubectl get clusterrole cluster-admin -o yaml

# ...

rules:

- apiGroups:

  - '*'

  resources:

  - '*'

  verbs:

  - '*'

- nonResourceURLs:

  - '*'

  verbs:

  - '*'

Basically, this means that the user or role used to create the cluster (the identity that owns the AWS access key you used to run the Terraform scripts) will be a cluster-admin, who can do anything.

Note: This IAM entity doesn't appear in any visible configuration, so make sure to keep track of which IAM entity originally created the cluster.

Therefore, it is a good idea to create the cluster with a dedicated IAM role and regularly audit who can use this role.

If you use this role to perform some routine Kubernetes maintenance tasks on the cluster, it can be quite dangerous, since this role can do anything.

As a best practice, use a dedicated role to run your Terraform scripts and nothing else. For example, you should create an IAM role dedicated to the CI system that runs the Terraform scripts, and make sure this role is used only by that CI system and by nobody else.
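One way to make this habit checkable is to verify the caller identity before every `terraform apply`. The sketch below is only an illustration and is not part of the demo repository: the helper `is_dedicated_role` and the role name `terraform-ci` are hypothetical, and the usage assumes the AWS CLI is installed and configured. For an assumed role, `aws sts get-caller-identity` reports an ARN of the form `arn:aws:sts::<account>:assumed-role/<role-name>/<session-name>`.

```shell
# Hypothetical guard: succeed only when the caller ARN is an assumed-role
# session of the expected role (the role name is an assumption).
is_dedicated_role() {
  caller_arn="$1"
  expected_role="$2"
  case "$caller_arn" in
    arn:aws:sts::*:assumed-role/"$expected_role"/*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage (requires configured AWS credentials):
#   arn=$(aws sts get-caller-identity --query Arn --output text)
#   is_dedicated_role "$arn" terraform-ci && terraform apply
```

Run from a laptop with personal IAM user credentials, the guard fails and `terraform apply` is never reached.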

By the way, I highly recommend you check my AWS IAM security best practices here:

To access the cluster using another user or role, see the "User Authentication" section of this tutorial.

Enable Audit Logging

Kubernetes auditing provides a security-relevant, chronological set of records documenting the sequence of actions in a cluster. The cluster audits the activities generated by users, applications that use the Kubernetes API, and the control plane itself.

AWS EKS control plane logging provides audit and diagnostic logs, which can be directly loaded into AWS CloudWatch Logs. These logs make it easy for you to secure and run your clusters. Enabling these logs is optional, but you should definitely do it. You can refine the exact log types you need.

As a reminder, the following cluster control plane log types are available:

- Kubernetes API server component logs (API) – Your cluster's API server is the control plane component that exposes the Kubernetes API.

- Audit (audit) – Kubernetes audit logs provide a record of the individual users, administrators, or system components that have affected your cluster.

- Authenticator (authenticator) – Authenticator logs are unique to Amazon EKS. These logs represent the control plane component that Amazon EKS uses for Kubernetes Role-Based Access Control (RBAC) authentication using IAM credentials.

- Controller manager (controllerManager) – The controller manager manages the core control loops that are shipped with Kubernetes.

- Scheduler (scheduler) – The scheduler component manages when and where to run pods in your cluster.

When we use the Terraform resource aws_eks_cluster to create an AWS EKS cluster (see here), by default, none of those log types are enabled. To enable the logging, we need to pass the right values to the parameter enabled_cluster_log_types (here).

# terraform/modules/eks/cluster.tf

resource "aws_eks_cluster" "cluster" {

  depends_on = [

    aws_iam_role_policy_attachment.AmazonEKSClusterPolicy,

    aws_iam_role_policy_attachment.AmazonEKSServicePolicy,

  ]

  version = var.k8s_version

  name = var.cluster_name

  role_arn = aws_iam_role.eks_role.arn

  vpc_config {

    subnet_ids = var.worker_subnet_ids

    security_group_ids = [aws_security_group.cluster.id]

  }

  enabled_cluster_log_types = ["api", "audit", "authenticator", "controllerManager", "scheduler"]

}

The optional enabled_cluster_log_types parameter lists the control plane log types to enable; the most important one is the "audit" log.
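Once the audit log type is enabled, the events can be searched in CloudWatch Logs. The following is a minimal sketch, assuming the AWS CLI is configured: EKS writes control plane logs to the log group `/aws/eks/<cluster-name>/cluster`, and audit events go to log streams whose names start with `kube-apiserver-audit`. The helper names `eks_log_group` and `recent_secret_reads` are hypothetical, and the filter (reads of secrets) is just one example of a query you might care about.

```shell
# Build the CloudWatch log group name that EKS uses for a cluster.
eks_log_group() {
  printf '/aws/eks/%s/cluster\n' "$1"
}

# Print up to 20 audit events in which a secret was read with "get".
# Hypothetical helper; adjust the filter pattern to your own needs.
recent_secret_reads() {
  aws logs filter-log-events \
    --log-group-name "$(eks_log_group "$1")" \
    --log-stream-name-prefix kube-apiserver-audit \
    --filter-pattern '{ ($.objectRef.resource = "secrets") && ($.verb = "get") }' \
    --limit 20 \
    --query 'events[].message' --output text
}

# Usage: recent_secret_reads my-cluster
```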

Use Private EKS Cluster Endpoint Whenever Possible

By default, when you provision an EKS cluster, the API cluster endpoint is set to public, i.e. it can be accessed from the Internet. For the purpose of testing, the EKS cluster created using the Terraform modules provided in the GitHub link is also using a public endpoint.

However, if you are running a production-grade Kubernetes cluster in a company, it's safer to set the endpoint as "private", and private only. In this case:

- All traffic to your cluster API server must come from within your cluster's VPC or a connected network (for example, you use a VPN, a jump host/bastion host, transit gateway, direct connect, etc, to access the cloud resources)

- There is no public access to your API server from the internet. Any kubectl commands must come from within the VPC or a connected network.

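To disable the public endpoint and use the private endpoint only, set the following arguments in the vpc_config block of the resource "aws_eks_cluster", alongside the existing subnet_ids and security_group_ids:

vpc_config {

  # ... subnet_ids and security_group_ids as before

  endpoint_private_access = true

  endpoint_public_access = false

}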

While this requires extra setup (so that you are in a connected network and can access the API endpoint), it also drastically reduces the attack surface.

If restricting to private endpoint isn't really an option for you, don't worry too much, because even if you are using the public endpoint, the traffic is still encrypted, and you are still required to authenticate. We will, however, demonstrate possible dangerous situations in the next section.
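To confirm which endpoint mode a cluster is actually running in, you can ask the EKS API. This is a sketch under the assumption that the AWS CLI is configured; the helper names are hypothetical. With `--output json`, the two fields `endpointPublicAccess` and `endpointPrivateAccess` come back as `true` or `false`.

```shell
# Succeed only when public access is off and private access is on.
# Arguments: the public flag, then the private flag, as "true"/"false".
endpoint_is_private_only() {
  [ "$1" = "false" ] && [ "$2" = "true" ]
}

# Hypothetical helper: report the endpoint mode of one cluster.
check_cluster_endpoint() {
  cluster="$1"
  public=$(aws eks describe-cluster --name "$cluster" \
    --query 'cluster.resourcesVpcConfig.endpointPublicAccess' --output json)
  private=$(aws eks describe-cluster --name "$cluster" \
    --query 'cluster.resourcesVpcConfig.endpointPrivateAccess' --output json)
  if endpoint_is_private_only "$public" "$private"; then
    echo "$cluster: private endpoint only"
  else
    echo "$cluster: public=$public private=$private"
  fi
}

# Usage: check_cluster_endpoint my-cluster
```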

Deep Dive into Service Accounts

Kubernetes has two types of users: service accounts, and normal user accounts. Service accounts handle API requests on behalf of Pods, and the authentication is typically managed automatically by K8s using tokens.

Now, let's poke around and see what we can do:

# the pod name for you might differ

$ kubectl get pod aws-node-pdh5b -o yaml | grep -i account

- mountPath: /var/run/secrets/kubernetes.io/serviceaccount

- mountPath: /var/run/secrets/kubernetes.io/serviceaccount

serviceAccount: aws-node

serviceAccountName: aws-node

- serviceAccountToken:

- mountPath: /var/run/secrets/kubernetes.io/serviceaccount

$ kubectl describe sa aws-node | grep secrets

Image pull secrets: <none>

Mountable secrets: aws-node-token-lw6z4

$ kubectl get secret aws-node-token-lw6z4

NAME TYPE DATA AGE

aws-node-token-lw6z4 kubernetes.io/service-account-token 3 3d14h

$ kubectl exec --stdin --tty aws-node-pdh5b -- /bin/bash

bash-4.2 $ ls /var/run/secrets/kubernetes.io/serviceaccount

ca.crt namespace token

In this quick demo above, we have seen that:

- The service account uses a secret, which stores the ca.crt, namespace, and token. Any Kubernetes user with permission to read this secret can get these contents.

- The secret is mounted as files under the path /var/run/secrets/kubernetes.io/serviceaccount. Even a Kubernetes user who doesn't have permission to read the secret, but who can open a shell in the pod (for example, with kubectl exec), can read its contents.

A service account token is a long-lived, static credential. If it is compromised, lost, or stolen, an attacker may be able to perform all the actions associated with that token until the service account is deleted.

So, reading Pod Secrets and executing commands in a pod should be restricted and granted only on a need-to-know basis (i.e. the principle of least privilege; read about Pod Security Policies in the first tutorial). If the serviceaccount information is leaked, it can be used from outside the cluster. Let's demonstrate that:

# Point to the API server; this is only an example

APISERVER="https://8885DF557F2AC3947D28381DBF5B7670.gr7.ap-southeast-1.eks.amazonaws.com"

# serviceaccount path in the pod: /var/run/secrets/kubernetes.io/serviceaccount

NAMESPACE="NAMESPACE_FROM_THE_SERVICEACCOUNT_PATH_HERE"

TOKEN="TOKEN_FROM_THE_SERVICEACCOUNT_PATH_HERE"

# copy the ca.crt to a local file

CACERT=ca.crt

# Explore the API with TOKEN

$ curl --cacert ${CACERT} --header "Authorization: Bearer ${TOKEN}" -X GET ${APISERVER}/api

{

"kind": "APIVersions",

"versions": [

"v1"

],

"serverAddressByClientCIDRs": [

{

"clientCIDR": "0.0.0.0/0",

"serverAddress": "ip-172-16-56-239.ap-southeast-1.compute.internal:443"

}

]

}

As we can see above, as long as we have the information that is stored in the serviceaccount, we can actually get access to the cluster anytime we want.
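To judge how much damage a leaked token could do, it helps to list what its service account is allowed to do. A minimal sketch, assuming your own user is allowed to impersonate service accounts (cluster admins are): Kubernetes identifies a service account as the user `system:serviceaccount:<namespace>:<name>`, and `kubectl auth can-i --list --as=...` prints the permissions of that user. The helper names below are hypothetical.

```shell
# Build the RBAC user name Kubernetes assigns to a service account.
sa_subject() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# List everything the given service account may do in the cluster.
sa_permissions() {
  kubectl auth can-i --list --as="$(sa_subject "$1" "$2")"
}

# Usage: sa_permissions kube-system aws-node
```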

If you really need to grant access to the K8s API from outside the cluster, like from an EC2 instance (for example, one running your CI/CD pipelines) or from an end user's laptop, it's better to authenticate with a different mechanism, such as IAM, and map that identity to a K8s RBAC role.

Let's see how to do this in AWS EKS.

User Authentication

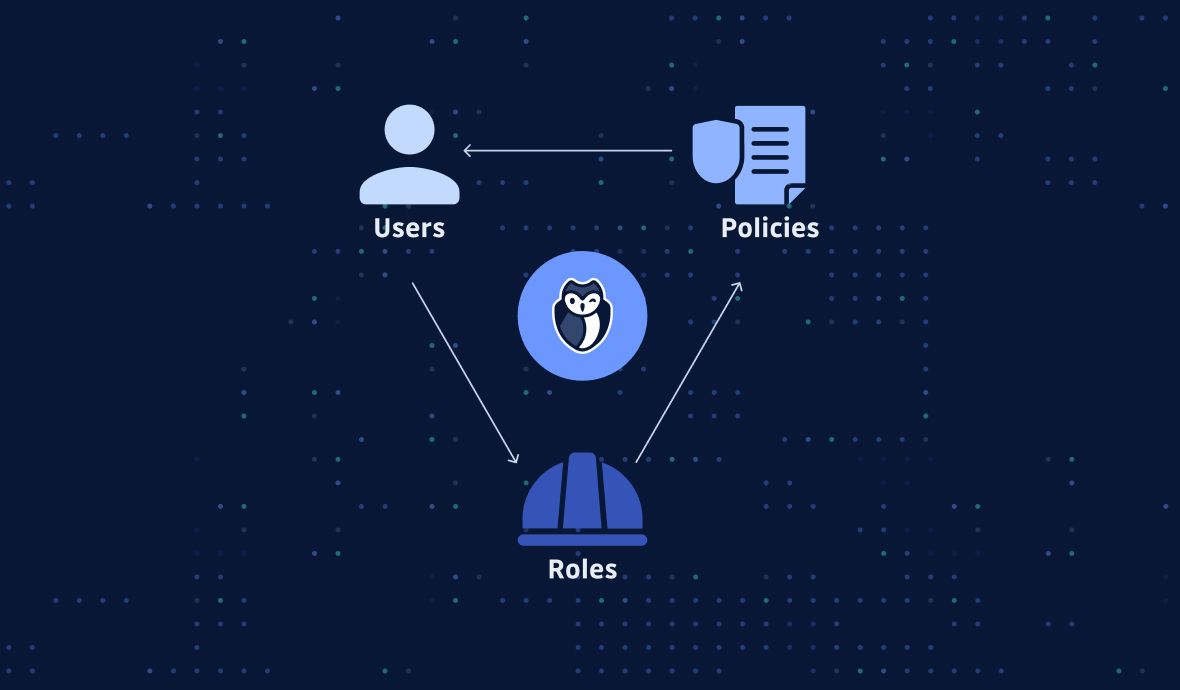

To grant additional AWS users or roles the ability to interact with your cluster, you must edit the aws-auth ConfigMap within Kubernetes and create a Kubernetes rolebinding or clusterrolebinding with the name of a group that you specify in the aws-auth ConfigMap.

Example:

apiVersion: v1

kind: ConfigMap

metadata:

  name: aws-auth

  namespace: kube-system

data:

  mapRoles: |

    - rolearn: arn:aws:iam::111122223333:role/eksctl-my-cluster-nodegroup-standard-wo-NodeInstanceRole-1WP3NUE3O6UCF

      username: system:node:{{EC2PrivateDNSName}}

      groups:

        - system:bootstrappers

        - system:nodes

  mapUsers: |

    - userarn: arn:aws:iam::111122223333:user/admin

      username: admin

      groups:

        - system:masters

    - userarn: arn:aws:iam::444455556666:user/ops-user

      username: ops-user

      groups:

        - eks-console-dashboard-full-access-group

If you apply the aws-auth ConfigMap above:

- IAM user with ARN arn:aws:iam::111122223333:user/admin will be mapped to the system:masters group

- IAM user with ARN arn:aws:iam::444455556666:user/ops-user will be mapped to the eks-console-dashboard-full-access-group group.

Then you can use Kubernetes RBAC to bind the group to a Role/ClusterRole. For more details on Kubernetes RBAC, see the official documentation here.
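As an illustration of that binding step, the sketch below prints a ClusterRoleBinding manifest that ties a group from the aws-auth ConfigMap to a ClusterRole. The helper `render_group_binding` and the generated binding name are hypothetical; `view` is one of the default ClusterRoles that ship with Kubernetes and is used here only as an example of a read-only role.

```shell
# Print a ClusterRoleBinding that binds GROUP to CLUSTER_ROLE.
# Arguments: group name (as used in aws-auth), ClusterRole name.
render_group_binding() {
  group="$1"
  cluster_role="$2"
  cat <<EOF
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: ${group}-binding
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: ${cluster_role}
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: Group
  name: ${group}
EOF
}

# Usage:
#   render_group_binding eks-console-dashboard-full-access-group view | kubectl apply -f -
```

Printing the manifest first, instead of applying it directly, makes it easy to review or commit the binding before it reaches the cluster.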

It's worth noting that when multiple users need identical access to the cluster, rather than creating an entry for each individual IAM user, you should allow those users to assume an IAM role, and map that role to a Kubernetes RBAC group in the mapRoles section of the ConfigMap. This keeps the aws-auth ConfigMap short and easy to read, and makes it easier to maintain as the number of users who require access grows.
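A shared-role entry could look like the output of the sketch below. The role ARN `arn:aws:iam::111122223333:role/eks-ops`, the username `ops`, and the helper `render_role_mapping` are all assumptions made for illustration; the printed lines are meant to be pasted under `mapRoles` in the aws-auth ConfigMap.

```shell
# Print one mapRoles entry. Arguments: role ARN, username, RBAC group.
render_role_mapping() {
  cat <<EOF
- rolearn: $1
  username: $2
  groups:
  - $3
EOF
}

# Usage:
#   render_role_mapping arn:aws:iam::111122223333:role/eks-ops ops \
#     eks-console-dashboard-full-access-group
#
# Each user who may assume the role can then generate a kubeconfig for it:
#   aws eks update-kubeconfig --name my-cluster \
#     --role-arn arn:aws:iam::111122223333:role/eks-ops
```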

While IAM is AWS's preferred way to authenticate users who need access to an AWS EKS cluster, it is not the only way! It's possible to use an OIDC identity provider such as GitHub using an authentication proxy and Kubernetes impersonation. Due to the length of this article, we will not cover how to do this here; but please do keep in mind that IAM isn't your only choice.

Cluster Access Auditing

Auditing Kubernetes access is crucial for cluster security. Because who requires access is likely to change over time, regular audits are the best practice: check who has been granted access, which rights they have been assigned, and whether they still need that access.

Here, we introduce two small tools for an easier audit:

kubectl-who-can

kubectl-who-can is a small tool that shows which subjects have RBAC permissions to VERB [TYPE | TYPE/NAME | NONRESOURCEURL] in Kubernetes.

It's an open-source project by Aqua Security, whom you might already know from their other project, trivy, a scanner for vulnerabilities in container images, file systems, and Git repositories, as well as for configuration issues.

The easiest way to install kubectl-who-can is by Krew, which is the plugin manager for kubectl CLI tool. Assuming you have already installed krew, you can simply run:

kubectl krew install who-can

For example, if you run:

$ kubectl who-can get secrets

ROLEBINDING NAMESPACE SUBJECT TYPE SA-NAMESPACE

system:controller:bootstrap-signer kube-system bootstrap-signer ServiceAccount kube-system

system:controller:token-cleaner kube-system token-cleaner ServiceAccount kube-system

CLUSTERROLEBINDING SUBJECT TYPE SA-NAMESPACE

cluster-admin system:masters Group

system:controller:expand-controller expand-controller ServiceAccount kube-system

system:controller:generic-garbage-collector generic-garbage-collector ServiceAccount kube-system

system:controller:namespace-controller namespace-controller ServiceAccount kube-system

system:controller:persistent-volume-binder persistent-volume-binder ServiceAccount kube-system

system:kube-controller-manager system:kube-controller-manager User

This gives you every user, group, and service account that can get secrets.
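For a recurring audit, the same query can be repeated for a handful of sensitive permissions. The sketch below only generates the command lines; the list of verb/resource pairs is an assumption and should be adapted to what matters in your cluster, and the helper `who_can_commands` is hypothetical.

```shell
# Verb/resource pairs considered sensitive (an assumption; adjust as needed).
SENSITIVE_CHECKS="get:secrets create:pods delete:nodes create:clusterrolebindings"

# Print one "kubectl who-can VERB TYPE" command line per sensitive pair.
who_can_commands() {
  for pair in $SENSITIVE_CHECKS; do
    printf 'kubectl who-can %s %s\n' "${pair%%:*}" "${pair#*:}"
  done
}

# Usage (requires kubectl with the who-can plugin installed):
#   who_can_commands | sh > "rbac-audit-$(date +%F).txt"
```

Keeping the dated output files around makes it possible to diff one audit against the previous one and spot newly granted access.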

rbac-lookup

RBAC Lookup is a CLI that allows you to easily find Kubernetes roles and cluster roles bound to any user, service account, or group name. It helps to provide visibility into Kubernetes auth.

For Mac users, the easiest way to install it is by "brew":

brew install FairwindsOps/tap/rbac-lookup

For the simplest use case, rbac-lookup returns any matching user, service account, or group along with the roles it has been given:

$ rbac-lookup aws-node

SUBJECT SCOPE ROLE

kube-system:aws-node cluster-wide ClusterRole/aws-node

Summary

As the 3rd and last part of the Kubernetes hardening tutorial, this blog also closes the detailed introduction and hands-on guide on NSA/CISA's Kubernetes Hardening Guidance.

For additional security hardening guidance, here are some useful links to give it a deeper dive:

- Center for Internet Security Kubernetes benchmarks

- Docker Security Technical Implementation Guides

- Kubernetes Security Technical Implementation Guides

- Cybersecurity and Infrastructure Security Agency (CISA) analysis report

- Kubernetes documentation

- Container Security book by Liz Rice

This article is a guest post. Views and opinions expressed in this publication are solely those of the author and do not reflect the official policy, position, or views of GitGuardian. The content is provided for informational purposes; GitGuardian assumes no responsibility for any errors, omissions, or outcomes resulting from the use of this information. Should you have any enquiry with regard to the content, please contact the author directly.